Ada in Europe

We examine how existing and emerging regulation in the EU strengthens, supports or challenges the interests of people and society – locally and globally.

Through our Brussels office, our work in Europe considers how the interests of people and society are met by data, digital and AI regulation. To do this, we provide evidence-based research, including technical research.

To find out more about our work, email our European Public Policy Lead Connor Dunlop.

The current environment

An overarching aim of the EU’s digital strategy is to rebalance power between big tech companies and society by addressing market imbalances and placing the responsibility and accountability on the large platforms known as ‘gatekeepers’.

EU digital legislation is key to this approach. The Digital Services Act sets rules on intermediaries’ obligations and accountability, while the Digital Markets Act seeks to ‘ensure these platforms behave in a fair way online’.

‘Gatekeepers’ are platforms that have a strong economic position, are active in multiple EU countries and act as a gateway between businesses and consumers in relation to core platform services. The European Commission has designated six companies as gatekeepers: Alphabet, Amazon, Apple, Meta, Microsoft and ByteDance.

Similarly, the EU’s data strategy centres on providing more equitable access to data through mechanisms such as data sharing and interoperability via the Data Act and Data Governance Act.

Other legislation will provide further protection. The AI Act will have a preventative scope, assigning obligations across the whole AI supply chain including post-market deployment. The AI Liability Directive will have a compensatory scope, that is, providing recompense if harm occurs.

Ada’s approach

The impact of initiatives – such as the Digital Services Act and the Digital Markets Act, and the AI Act and AI Liability Directive – will depend upon effective implementation and enforcement, including through the GDPR.

Our work currently focuses on regulatory capacity and institutional design. For example, we supported the EU institutions in conceptualising a strengthened ‘AI Office’ (rather than an ‘AI Board’ as originally proposed in the EU AI Act).

Our priorities for policy research

1. Governance including the EU AI Act and AI liability

As the EU AI Act has been adopted into law, we will study its implementation, including how its recitals and articles should be interpreted, to help ensure the Act meets its objective of protecting people and society from potential harms. Our previous work in this area includes convening, policy analysis and engagement on the AI Act. See our expert explainer, policy briefing and expert opinion.

The next big question for policymakers in the EU – and the UK – is how to allocate legal liability throughout an AI system’s supply chain. Ada has commissioned expert analysis around this, including how liability law could support a legal framework for AI.

In 2023, to support policymakers, we also published a paper on risk assessment and mitigation in AI, and a paper on how governments can reduce information asymmetries through monitoring and foresight functions.

Our three-year programme on the governance of biometrics provides legal analysis and public deliberation on issues of safeguards, use and risks of biometric data and technologies.

2. Ethics and accountability in practice

We monitor, develop, pilot and evaluate mechanisms for public and private scrutiny and accountability of data and AI. This includes our research on technical methods for auditing algorithmic systems; a collaboration with AI Now and the Open Government Partnership which surveyed algorithmic accountability policy mechanisms in the public sector; and a pilot of algorithmic impact assessments in healthcare.

3. Rethinking data and rebalancing power

Rethinking data and rebalancing power reconsidered the narrative, uses and governance of data. The expert working group – co-chaired by Professor Diane Coyle (Bennett Institute of Public Policy, Cambridge) and Paul Nemitz (Director, Principal Adviser on Justice Policy, EU Commission, and Member of the German Data Ethics Commission) – sought to answer a radical question:

‘What is a more ambitious vision for the future of data that extends the scope of what we think is possible?’

4. AI standards and civil society participation

Both the EU AI Act and forthcoming UK legislation will rely heavily on the creation of AI standards by international bodies like ISO.

Ada has previously researched how these standards processes can include more civil society voices as well as other innovations that might improve democratic control. We also hosted an expert roundtable on EU AI standards development and civil society participation.

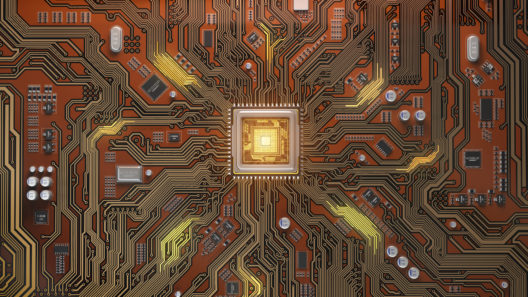

5. Foundation model governance

We first called for the inclusion of foundation models (or ‘general-purpose AI models’) in regulation in early 2022. Since then, our work has increasingly focused on the unique risk profile of foundation models, and the challenges posed for policymakers.

Our work to support both governments and the wider ecosystem in understanding the AI value chain and the (potentially) critical role of foundation models began with establishing a shared terminology through our explainer. We also identified the key considerations for public-sector deployment of models like OpenAI’s GPT-4 and its chat interface, ChatGPT.

We have also explored whether foundation model governance can learn from the regulation of similarly complex, novel technologies in the life sciences sector. This work covers regulatory powers and potential mechanisms such as pre-market approval for foundation models.

Image credit: DKosig

Related publications

An EU AI Act that works for people and society

Five areas of focus for the trilogues

What is a foundation model?

This explainer is for anyone who wants to learn more about foundation models, also known as 'general-purpose artificial intelligence' or 'GPAI'.

AI assurance?

Assessing and mitigating risks across the AI lifecycle

Keeping an eye on AI

Approaches to government monitoring of the AI landscape

Allocating accountability in AI supply chains

This paper aims to help policymakers and regulators explore the challenges and nuances of different AI supply chains

Inclusive AI governance

Civil society participation in standards development

The value chain of general-purpose AI

A closer look at the implications of API and open-source accessible GPAI for the EU AI Act

Rethinking data and rebalancing digital power

What is a more ambitious vision for data use and regulation that can deliver a positive shift in the digital ecosystem towards people and society?

AI liability in Europe

Legal context and analysis on how liability law could support a more effective legal framework for AI

Expert explainer: The EU AI Act proposal

A description of the significance of the EU AI Act, its scope and main points

People, risk and the unique requirements of AI

18 recommendations to strengthen the EU AI Act

Expert opinion: Regulating AI in Europe

Four problems and four solutions

Three proposals to strengthen the EU Artificial Intelligence Act

Recommendations to improve the regulation of AI – in Europe and worldwide

Technical methods for regulatory inspection of algorithmic systems

A survey of auditing methods for use in regulatory inspections of online harms in social media platforms

Algorithmic accountability for the public sector

Learning from the first wave of policy implementation

The Citizens’ Biometrics Council

Report with recommendations and findings of a public deliberation on biometrics technology, policy and governance

Upcoming and previous events

EU AI standards development and civil society participation

In May 2023, the Ada Lovelace Institute hosted an expert roundtable on EU AI standards development and civil society participation.

Inform, educate, entertain… and recommend?

Exploring the use and ethics of recommendation systems in public service media