Algorithmic accountability for the public sector

Learning from the first wave of policy implementation

24 August 2021

The Ada Lovelace Institute (Ada), AI Now Institute (AI Now), and Open Government Partnership (OGP) have partnered on the first global study of the initial wave of algorithmic accountability policy for the public sector.

As governments increasingly turn to algorithms to support decision-making for public services, growing evidence suggests that these systems can cause harm and frequently lack transparency in their implementation. Reformers in and outside of government are turning to regulatory and policy tools, hoping to ensure algorithmic accountability across countries and contexts. These responses are emergent and shifting rapidly, and they vary widely in form and substance – from legally binding commitments to high-level principles and voluntary guidelines.

This report presents evidence on the use of algorithmic accountability policies in different contexts from the perspective of those implementing these tools, and explores the limits of legal and policy mechanisms in ensuring safe and accountable algorithmic systems.

This new research highlights that although this is a relatively new area of technology governance, there is a high level of international activity and different governments are using a variety of policy mechanisms to increase algorithmic accountability.

The report explores in detail the variety of policies taking shape in the public sector, and draws out six lessons for policymakers and industry leaders looking to deploy and implement algorithmic accountability policies effectively.

This research forms part of Ada’s wider work on algorithmic accountability and the public sector use of algorithms. It builds on existing work on tools for assessing algorithmic systems, mechanisms for meaningful transparency on the use of algorithms in the public sector, and active research with UK local authorities and government bodies seeking to implement algorithmic tools, auditing methods and transparency mechanisms.

Related content

Algorithmic accountability for the public sector

Research with AI Now and the Open Government Partnership to learn from the first wave of algorithmic accountability policy.

Can algorithms ever make the grade?

The failure of the A-level algorithm highlights the need for a more transparent, accountable and inclusive process in the deployment of algorithms.

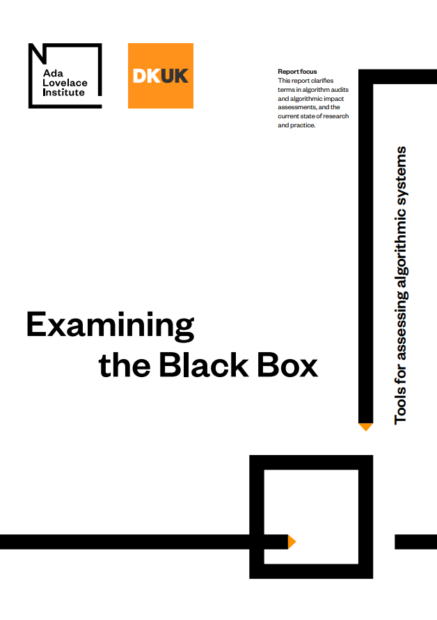

Examining the Black Box

Identifying common language for algorithm audits and impact assessments

What forms of mandatory reporting can help achieve public-sector algorithmic accountability?

A look at transparency mechanisms that should be in place to enable us to scrutinise and challenge algorithmic decision-making systems