Allocating accountability in AI supply chains

This paper aims to help policymakers and regulators explore the challenges and nuances of different AI supply chains

29 June 2023

Reading time: 103 minutes

Executive summary

Creating an artificial intelligence (AI) system is a collaborative effort that involves many actors and sources of knowledge. Whether simple or complex, built in-house or by an external developer, AI systems often rely on complex supply chains, each involving a network of actors responsible for various aspects of the system’s training and development.

As policymakers seek to develop a regulatory framework for AI technologies, it will be crucial for them to understand how these different supply chains work, and how to assign relevant, distinct responsibilities to the appropriate actor in each supply chain. Policymakers must also recognise that not all actors in supply chains will be equally resourced, and regulation will need to take account of these realities.

Depending on the supply chain, some companies (perhaps UK small businesses) supplying services directly to customers will not have the power, access or capability to address or mitigate all risks or harms that may arise.

This paper aims to help policymakers and regulators explore the challenges and nuances of different AI supply chains, and provides a conceptual framework for how they might apply different responsibilities in the regulation of AI systems. The paper seeks to address the following:

- Set out what is or is not distinctive about AI supply chains compared with other technologies.

- Examine high-level examples of different kinds of AI supply chains. Examples include:

- systems built in-house

- systems relying on another application programming interface (API)

- systems built for a customer (or fine-tuned for one).

- Provide the components for a general conceptual framework for how regulators could apply relevant, distinctive responsibilities to different actors in an AI supply chain.

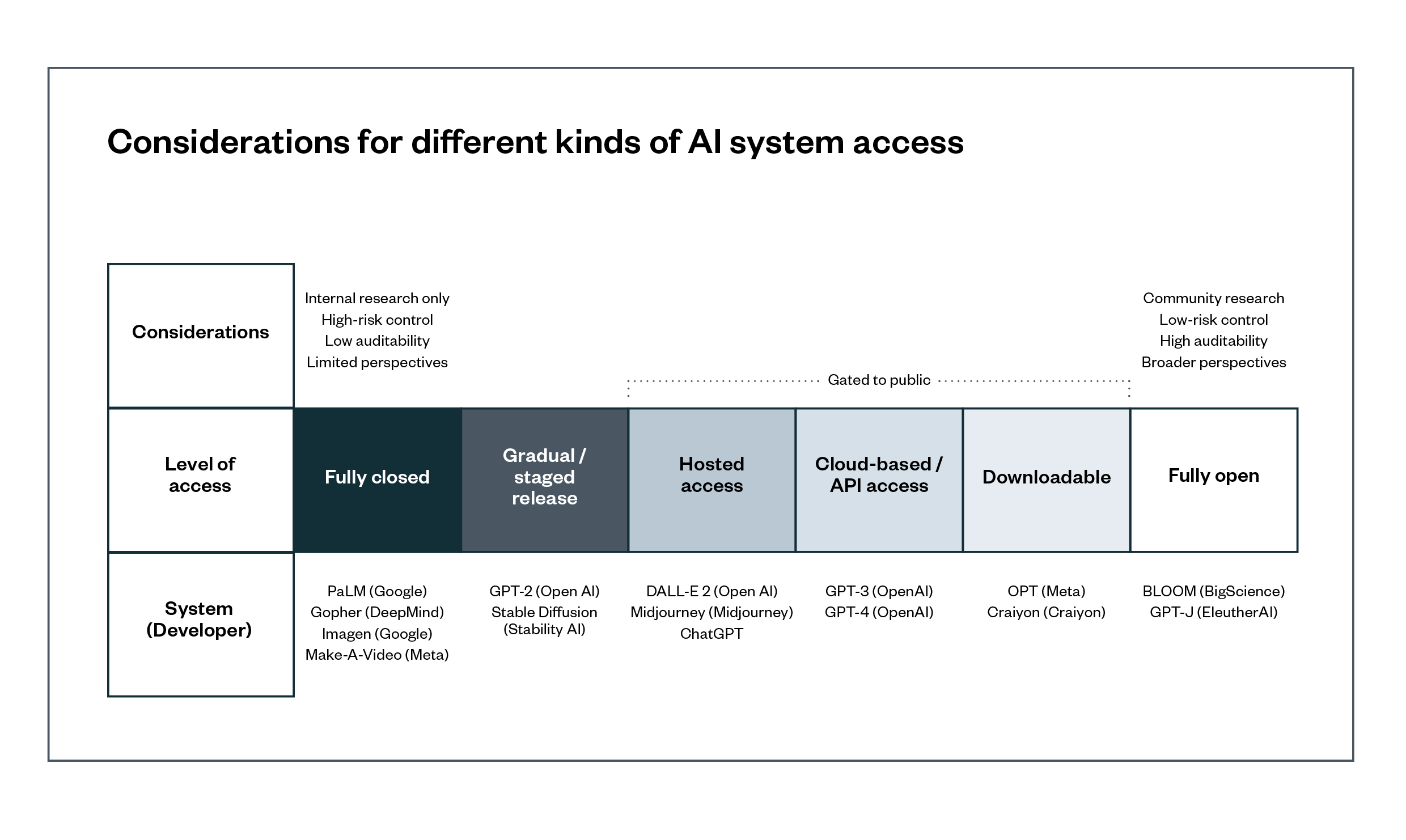

- Explore the unique complexities that ‘foundation models’ raise for assigning responsibilities to different actors in their supply chain, and how different mechanisms for releasing these models may complicate allocations of responsibility.

In this explainer we use the term ‘foundation models’ – which are also known as ‘general-purpose AI’ or ‘GPAI’. Definitions of GPAI and foundation models are similar and sometimes overlapping. We have chosen to use ‘foundation models’ as the core term to describe these technologies. We use the term ‘GPAI’ in quoted material, and where it’s necessary for a particular explanation.

Key findings

- Our evidence review suggests that AI system supply chains have many commonalities with other types of digital technologies, for example raw material mining for smart device hardware. However, there are some significant differences in the novelty, complexity and speed of ongoing change and adaptation of AI models, which make it difficult to standardise or even precisely specify their features. The scale and wide range of potential uses of AI systems can also make it more challenging to attribute responsibility (and legal liability) for harms resulting from complex supply chains.

- After discussing various types of AI supply chains, we describe a conceptual framework for assigning responsibility that focuses on principles of transparency, incentivisation, efficacy and accountability.

- To support this framework, regulators should mandate the use of various mechanisms that enable a flow of critical information. These mechanisms should also enable modes of redress up and down an AI system’s supply chain and identify new ways to incentivise these practices in supply chains.

- The advent of foundation models (such as OpenAI’s GPT-4) complicate the challenge of allocating responsibility. These systems enable a single model to act as a ‘foundation’ for a wide range of uses. We discuss how various aspects of these nascent systems (including who is designing them, how they are released and what information is made available about them) may impact the allocation of responsibilities for addressing potential risks.

- Finally, we discuss some of the challenges that open-source technologies raise for AI supply chains. We suggest policymakers focus on how AI systems are released into public use, which can help inform the allocation of responsibilities for addressing harms throughout an identified supply chain.

Introduction

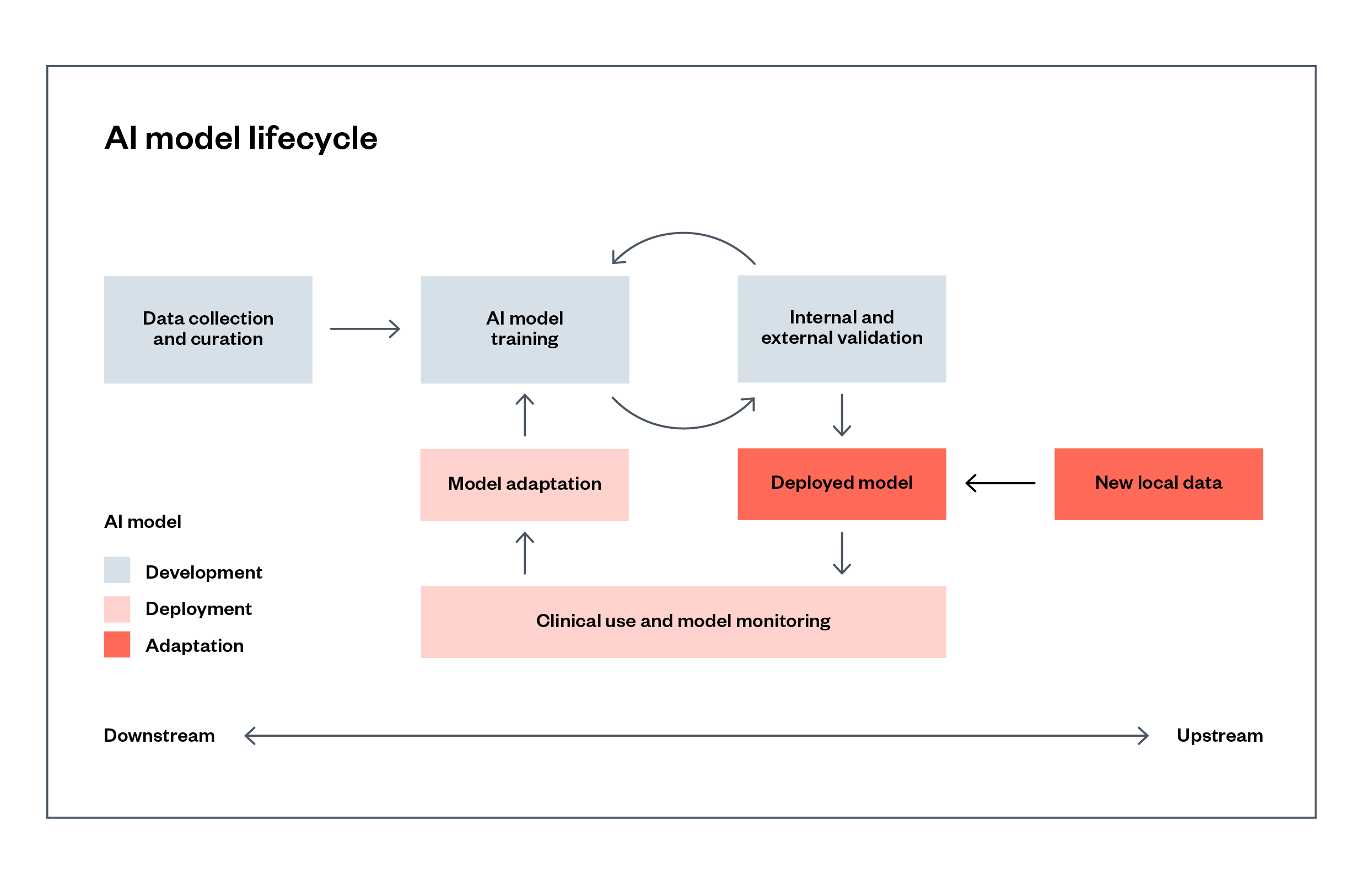

Developers and deployers of artificial intelligence (AI) systems have a variety of distinct responsibilities for addressing risks throughout the lifecycle of the system. These responsibilities range from problem definition to data collection, labelling, cleaning, model training and ‘fine-tuning’, through to testing and deployment (shown in Figure 1). While some developers undertake the entire process in-house, these activities are often carried out in part by different actors in a supply chain.[1]

To ensure AI systems are safe and fit for purpose, those within the supply chains must be accountable for evaluating and mitigating these different risks.

Figure 1: An abstract example of an AI system’s lifecycle (based on a system used in the COVID-19 pandemic)[2]

Every AI system will have a different supply chain, with variations depending on the sector, the use case, whether the system is developed in-house or procured, and how the system is made available to those who use it (for example, via an application programming interface (API), or made available via a hosted platform).

Actors within each supply chain will have differing but overlapping obligations to assess and mitigate these risks, and some will have more responsibility than others. This makes developing a single framework for accountability along supply chains for AI systems challenging.

The UK’s approach to AI regulation is largely focused on companies supplying products and services incorporating AI systems. It relies on existing statutory frameworks and independent, sector-specific regulators to mitigate risks, as they are judged best-placed to understand the context and apply proportionate risk-management measures.[3]

Many companies or public sector bodies deploying AI systems will, however, need information about the practices and policies behind its development from further up the supply chain to comply with their legal responsibilities. When issues are spotted, they will also need to have mechanisms in place to communicate those problems back up the supply chain to the supplier who is best placed to fix the problems.

Based on a rapid review of academic and grey literature (including preprints, reflecting how fast the field is moving), this paper discusses how to identify who should be primarily responsible for identifying and addressing different risks in AI supply chains. It also explores the mechanisms that may allow downstream actors to reach back up through the supply chain to flag issues that they cannot deal with in isolation. We aim to cover these four areas:

- Set out what is or is not distinctive about AI supply chains compared with other technologies.

- Examine examples of different kinds of AI supply chains (these are theoretical, and in practice many products or organisations will deal with multiple overlapping supply chains). Examples include:

- Systems built in-house.

- Systems relying on another API.

- Systems built for a customer (or fine-tuned for one).

- Provide the components for a general conceptual framework for how regulators could apply relevant, distinctive responsibilities to different actors in an AI supply chain.

- Explore the complexities that foundation models raise in terms of assigning responsibilities within the supply chain, and how different mechanisms for releasing these models may complicate allocations of responsibility.

What is or is not distinctive about AI supply?

Similarities between AI and other technology supply chains

Similarities between AI and other kinds of supply chains in the technology industry that are potentially of interest to regulators include:

- capital intensity and high returns to scale for production/training

- the need in some cases for access to specific, scarce inputs

- reliance on third-party software components and libraries

- questions relating to data protection and copyright law, where personal data and creative works are used for training and application of models

- a range of other fundamental or human rights issues, such as equality.

Capital intensity and high returns to scale

Like mining and large-scale industrial manufacturing, key processes in supplying state-of-the-art AI systems (particularly training large models and especially with foundation models) are likely to be capital-intensive and produce high returns to scale – that is, where outputs increase by more than the proportional change in all inputs.

This means there is a market limitation on the number of companies who can produce and sustain these systems, and this can lead to a potentially concentrated market (as we see with the cloud computing market, which is already intertwined with the training and operation of models), and hence competition law issues.

The literature corroborates this, suggesting that rapid advances in AI techniques over the last decade were primarily due to ‘significantly concentrated data and compute resources that reside in the hands of a few large tech corporations.’[4]

Other scholars point out that maintaining and developing these models will require sustained long-term rather than one-off investment:

‘Due to the benefits of scaling deep learning models, continuous improvements have been made to deep learning GPAI models driven by ever-larger investment to increase model size, computational resources for training and underlying dataset size, as well as advances in research.’[5]

This has potential ramifications for the AI research ecosystem. Some analysts have noted that the increased trend towards the use of deep learning models, requiring large training datasets and computational capabilities, benefits industrial over academic research, meaning ‘public interest alternatives for important AI tools may become increasingly scarce’.[6]

Scarce inputs

As with access to geographically sparse minerals such as antimony, baryte and rare earth elements,[7] many AI systems will depend on proprietary data inputs (such as large volumes of specific training data) that have been captured through access to existing specialised resources.

They may also depend on data collection practices that are hard for smaller companies to replicate (‘potentially from multiple sources and labelled or moderated, relating to many use-cases, contexts, and subjects’),[8] and analysed with compute resources (with ‘scarce expertise in model training, testing and deployment’[9]) that only a handful of major companies may have.

Again, in concentrated markets, there may be competition law questions about access to high-quality inputs and outputs, including specific datasets and high-end computation capability for training the largest models.

Example: illegally mined gold in hardware supply chains

The use of illegally mined gold from Brazil in technology manufacturing is an example of a supply chain with harmful rule breaking. This can happen despite (as with AI) the existence of supplier codes of conduct and audit processes.

A 2021 Brazilian federal police investigation found that ‘companies such as Chimet had been extracting illegal gold from the Kayapó indigenous land since 2015’, which potentially ‘ended up being used in the manufacture of tablets, phones, accessories for digital devices and even Xbox consoles’.[10] Microsoft and Amazon did not comment publicly, while Apple told reporters about its post hoc system of removing suppliers: ‘If a foundry or refiner cannot or does not want to meet our strict standards, we will remove it from our supply chain and, since 2009, we have guided the removal of more than 150 smelters and refineries.’[11]

Google reiterated ‘the rigor of [its] Supplier Code of Conduct […] demanding the search for ores “only from certified and conflict-free companies”’. But it ‘ruled out the adoption of protocols such as the audit by the Responsible Minerals Guarantee Process (RMAP),’ which involves an independent third-party assessing a company’s supply chain.[12] Organisations like the Responsible Minerals Initiative have developed best practice standards that provide guidance for the responsible sourcing of minerals in a supply chain, but companies will clearly need incentives to adopt them.

The Brazilian federal government, elected in early 2023, is planning legislation, and the Banco Central do Brasil and other government agencies ‘have been studying the adoption of the electronic tax receipts for buying and selling gold in order to track whether it was illegally mined’.[13]

This example could be considered analogous to requirements for detailed datasheets for AI models, which are documents that list details about a dataset such as: what data is included; how it was sourced; and how it should be used. It also highlights the importance of laws and regulations that establish the appropriate uses of data used to train an AI system, like data protection that covers the use of personal data and copyright law that covers the use of creative works.

Former US Federal Communications Commission Chair Tom Wheeler captured this concern, noting that machine learning ‘is nothing more than algorithmic analysis of enormous amounts of data to find patterns from which to make a high percentage prediction. Control of those input assets, therefore, can lead to control of the AI future’.[14]

The EU is attempting to address some of these scarcity issues through its European Data Strategy,[15] including legislation such as the Data Governance Act and Data Act.

Reliance on third-party software components and libraries

Like almost all software, AI systems are likely to be developed making extensive use of software libraries and components from third parties, to ‘benefit from the rich ecosystem of contributors and services built up around existing frameworks.’[16]

Researchers have noted: ‘Much software is too complex, relying on too many components, for any one person to fully understand or account for its workings.’[17] One analysis of commonly used deep learning frameworks found: ‘Caffe is based on more than 130 depending libraries … and TensorFlow and Torch depend on 97 Python modules and 48 Lua modules respectively.’[18]

These components can be used to introduce security vulnerabilities into end-systems. For example, researchers showed how a vulnerability reported in the ‘numpy’ Python library could be used to cause TensorFlow applications depending on it to crash. Other vulnerabilities could cause AI frameworks to misclassify inputs, and to enable an attacker to remotely compromise a system.[19]

To address these types of vulnerabilities, the US government is now limiting the procurement of ‘critical’ software unless it complies with standards issued by the US National Institute of Standards and Technology (NIST). This is to ‘enhance the security of the software supply chain’, including using automated tools to ‘check for known and potential vulnerabilities and remediate them’.

The USA will also include standards regarding: ‘maintaining accurate and up-to-date data, provenance (i.e., origin) of software code or components, and controls on internal and third-party software components, tools, and services present in software development processes, and performing audits and enforcement of these controls on a recurring basis.’[20]

This type of procedure may need to be considered by the UK and other governments in procuring AI systems for their own use, and in critical national infrastructure.

Data protection

Data protection issues arise wherever personal data is processed in a supply chain. For example, if a UK business purchases a database of marketing contacts from a UK or EU supplier, both parties must comply with data protection law (principally the General Data Protection Regulation (GDPR), which was transposed into UK law following Brexit).

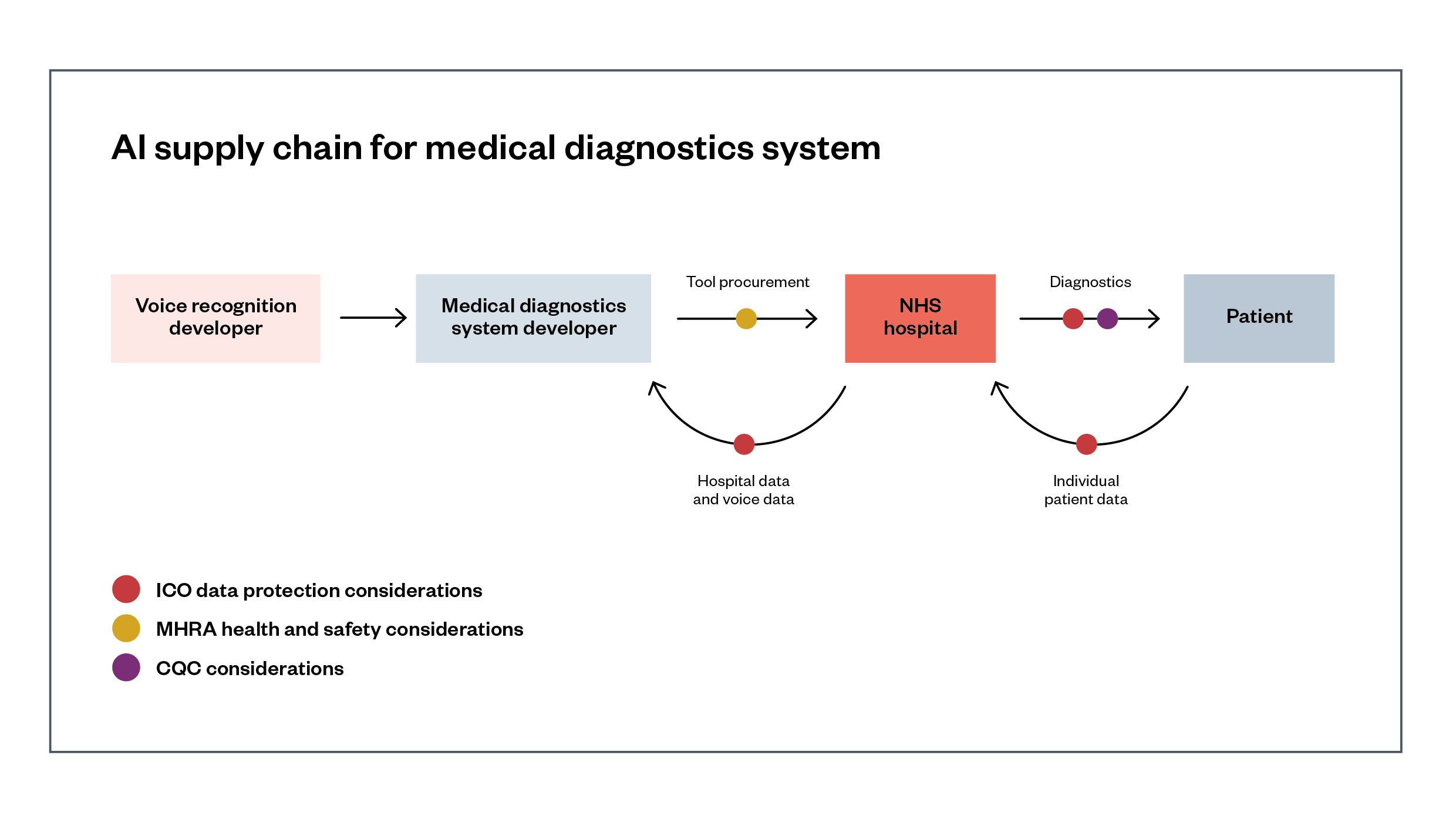

Figure 2 shows an example supply chain for a medical diagnostics system, where the developer and its hospital customers both process patients’ personal data to train and apply cancer detection models and voice recognition models.

Figure 2: AI supply chain for a medical diagnostics system

Description of Figure 2: A developer has created an AI-powered diagnostic tool which can assess an X-ray of a patient’s lungs for signs of cancer. They have procured a voice recognition model to incorporate into this tool from another company, which can understand doctors’ recorded voice comments on a patient to add to their diagnostics report. This diagnostics tool has been assessed for safety by the Medicines and Healthcare products Regulatory Agency (MHRA), while the models it contains have data sheets and model cards which can be produced to the ICO or EHRC if needed, as well as the Care Quality Commission (CQC) when the CQC is assessing care provision.

Where personal data is used in either training an AI model or in its application, it raises a range of data protection issues, including data quality, fairness and legality of processing, and fairness of automated decision-making.[21] Where personal data is involved, the technological drive to train ever-larger models with ever-greater quantities of data is in significant tension with the notions of minimisation and purpose specification that are enshrined in current data protection legislation. There may be trade-offs between principles, for example, data minimisation and statistical accuracy.[22]

This risk is beginning to be identified by jurisdictions around the world. In one of the first AI-related GDPR enforcement decisions, the Italian data protection authority temporarily blocked the US developer of AI chatbot Replika from processing the personal data of Italian users, due to risks to vulnerable users, especially children.[23]

Shortly afterward, the authority temporarily blocked OpenAI’s chatbot product ChatGPT from processing the personal data of Italian users until it put in place several protections. This includes: the availability of privacy policies for users of the service and those whose data was used for model training; a clear statement about the legal basis for those uses; the provision of tools for individuals to exercise their privacy rights; and (again) better protection for children.[24]

The European Data Protection Board – made up of the national supervisory authorities – has created a task force to ‘foster cooperation and to exchange information on possible enforcement actions’ relating to ChatGPT.[25]

For models trained on vast quantities of uncurated data scraped from the web, there could be fundamental issues for GDPR compatibility. These could relate to consent for the processing of sensitive personal data and the question of whether rectification of errors and the ‘right to erasure’ extend to the (hugely expensive) retraining of models, rather than the suppression of a specific output, as noted in the outcome of a related case on search engines at the EU Court of Justice, Google Spain.[26]

This may partly depend on the specific value of generative AI models to freedom of expression and other fundamental rights, and whether such benefits could be obtained through models trained on much more carefully curated datasets with explicit consent from data subjects.

An internal note allegedly leaked from Google suggests using small, carefully selected datasets can be an effective approach, compared to the use of ‘the largest models on the planet’ trained on a significant fraction of the entire world wide web.[27]

In the UK, the Information Commissioner’s Office (ICO) has identified the high risk of data processing in the context of AI use, publishing guidance which states: ‘In the vast majority of cases, the use of AI will involve a type of processing likely to result in a high risk to individuals’ rights and freedoms, and will therefore trigger the legal requirement for [organisations] to undertake a data protection impact assessment (DPIA).’ This must assess ‘risks to the rights and freedoms of individuals, including the potential for any significant social or economic disadvantage’.[28]

Scholars suggest that the current methods for ensuring prevention or mitigation of potential harms are inadequate: for example, the ‘right to an explanation’ frequently discussed in an AI/GDPR context is not sufficient to deal with often-cited ‘algorithmic harms’ around fairness, discrimination and opacity.

The scholars suggest the GDPR’s right to erasure and data portability, as well as its requirements for data protection by design, impact assessments and certifications/privacy seals, may be a better basis ‘to make algorithms more responsible, explicable, and human-centred’.[29]

There are also open questions about the data protection responsibilities of companies in some supply chains under the GDPR, as personal ‘data controllers’. Some major developers of AI technologies like Microsoft, Google and Amazon sell these products as a service to other companies (this is called ‘AI as a Service’, or AIaaS). When doing so, they commonly ask customers’ permission to use their submitted data to improve their products.

When this includes personal data of end users, although the terms of service of these providers may specify that they are acting as data processors, in reality they may be acting as controllers or joint controllers and face significant GDPR requirements.[30] This remains the case for as long as the UK retains a data protection framework largely mirroring the GDPR, though it is worth noting that the UK Government has proposed a significant update in its Data Protection and Digital Information Bill.

Under these conditions, AIaaS providers will need a specific legal basis for processing to improve their models (and should check where they are joint controllers for normal processing). If data supplied by customers includes special category data (such as health data), explicit consent from data subjects is likely to be the only option available.[31]

There are some open questions to be addressed in relation to these issues.

- How can companies ask customers to provide explicit consent as data subjects where they do not directly interact with passive third parties (where customers are, for instance, directly or indirectly surveilling a physical space)’?[32]

- Are providers able to adequately inform data subjects and get their explicit consent?[33]

- And how will data protection authorities verify key details about the use of personal data, given the sparse documentation typically published by developers?

Copyright

Businesses using copyrighted works anywhere in a supply chain must ensure they have permission from the copyright owners, or qualify under a limited number of ‘fair dealing’ exceptions under UK copyright law. For example, when an organisation buys photographs from a stock library, both parties are responsible for complying with any licence conditions set by the photograph’s owner, or verifying that they qualify for an exception (such as educational use).

There are large quantities of public domain works – text, audio and video – that are available for training AI models and are not subject to copyright, as well as copyright material released under licences permitting use (although these rarely give explicit permission for use in training AI models). Where other copyrighted works are used, questions of fair dealing and licensing will arise – as well as issues relating to the outputs of generative models.[34]

In the USA, there are mixed academic views as to whether their broad ‘fair use’ copyright exception would allow the use of copyrighted works to train models without the copyright holder’s consent.[35] International newspapers including The Economist have argued that companies such as Microsoft, which is now showing AI-produced summaries of articles in its search engine Bing, should have to license the use of such content.[36]

In the UK, a limited copyright exception allows text and data mining (TDM) for research for non-commercial purposes (section 29A of the Copyright, Designs and Patents Act 1988). The UK Intellectual Property Office (IPO) has proposed significantly widening this exemption,[37] and the UK Government has accepted the recommendations of the review by its Chief Scientific Adviser suggesting that it ‘should work with the AI and creative industries to develop ways to enable TDM for any purpose’.[38]

The EU has already introduced such a reform, also allowing commercial TDM on an opt-out basis, in Article 4 of the Copyright in the Digital Single Market Directive (2019/790/EU).

The spawning.ai website has gathered opt-outs for 78 million works, mainly from organisations such as Shutterstock. The developers of one of the most popular text-to-image AI systems, Stable Diffusion, announced they will honour these opt-outs in training future models.[39] However, European artists’ associations have argued this is insufficient protection, and have lobbied for the EU’s AI Act to require explicit, informed consent from the authors of works.[40]

Current copyright regimes are unlikely to find images produced by AI systems such as Stable Diffusion ‘in the style of’ a specific artist to be an infringement of copyright. But they will give protection to AI-generated images which contain copyrighted elements, such as cartoon superheroes.[41]

The US Copyright Office has issued policy guidance that under US law, copyright will apply to the human-authored elements of AI-generated works where an author has ‘select[ed] or arrange[d] AI-generated material in a sufficiently creative way’, or ‘modif[ied] material originally generated by AI technology to such a degree that the modifications meet the standard for copyright protection’.[42]

Human rights

Businesses’ responsibility to respect human rights throughout their supply chains is enshrined under the United Nations’ Guiding Principles on Business and Human Rights, which says they should ‘avoid infringing on the human rights of others and should address adverse human rights impacts with which they are involved’.[43]

In the UK and EU, data protection regulation further states that ‘data protection aims to protect individuals’ rights and freedoms with regard to the processing of their personal data, not just their information rights’ – including, for example, the right to non-discrimination.[44]

This means that, particularly where governments are using AI systems for decision-making, they will need to consider the full range of human rights issues raised. As a recent Chatham House report concluded: ‘While human rights do not hold all the answers, they ought to be the baseline for AI governance. International human rights law is a crystallization of ethical principles into norms, their meanings and implications well-developed over the last 70 years.’[45]

For example, state responses to AI-generated disinformation must be carefully guided by the impact on freedom of expression, as, for example, over-moderating online content can inadvertently remove valuable political speech.[46] Similarly, the use of AI-based tools such as facial recognition and gunshot detection by law enforcement and national security agencies can have serious impacts on privacy and equality, particularly for some marginalised groups.

Associated Press found that in the USA, the widely used ShotSpotter system ‘is usually placed at the request of local officials in neighborhoods deemed to be the highest risk for gun violence, which are often disproportionately Black and Latino communities’.[47]

The private sector must ‘respect’ human rights,[48] for example avoiding discrimination by using AI-based employment tools, which is also required by specific equality and anti-discrimination laws in many countries, such as the UK’s Equality Act 2010.[49]

This may be a more likely outcome where sectoral regulators are enforcing these duties – for example, in the UK finance sector compared to the human resources sector – even where cross-sectoral regulators are in place, such as the UK Information Commissioner’s Office (ICO) and the Equality and Human Rights Commission (EHRC).[50]

Some of these issues are starting to be specifically addressed at the international level in the Council of Europe’s draft AI Convention,[51] building on regional human rights instruments such as the European Convention on Human Rights.

In the EU, civil society groups have called for the extension of the AI Act’s outright prohibition on AI systems that pose an unacceptable risk to fundamental rights, including ‘social scoring systems; remote biometric identification in publicly accessible spaces (by all actors); emotion recognition [and] systems to profile and risk-assess in a migration context’.[52]

Distinctive features of AI supply

Distinctive features of AI supply chains that are potentially of interest to regulators include items such as components’ complexity, speed of change or opacity, and their diffuse impact on people and society.

Features of models and systems

The novelty, complexity and speed of ongoing change and adaptation of AI models will make it difficult to standardise or even precisely specify their features – hence the importance of transparency tools like transparency registers, model cards, datasheets and other methods for sharing information about a system.[53]

AI systems can be highly opaque, leading to a lack of standardisation and societal understanding. Even before the proliferation of AI systems, researchers noted ‘the lack of means, legal or technical, for uncovering the nature of the supply chains on which online services rely.’[54]

Countries including France and the Netherlands are partly addressing this by setting up algorithm registers and regulators (in these two cases, within their data protection authorities).[55] Spain is setting up an independent agency to monitor the public and private sector’s compliance with the EU’s forthcoming AI Act, which will require ‘high-risk’ systems to be registered in a public database.[56]

Seven European city administrations (Barcelona, Bologna, Brussels Capital Region, Eindhoven, Mannheim, Rotterdam and Sofia) have been developing an Algorithmic Transparency Standard, which will ‘help people understand how the algorithms used in local administrations work, and what their purpose is.’[57]

The UK has also introduced a public-sector Algorithmic Transparency Standard for use by public sector organisations.[58] Notably, public sector organisations will not be legally required to use this standard, under the latest language of the Data Protection and Digital Information Bill (DPDIB).

Opacity is an issue, because it can be reinforced by companies to protect commercial secrets. An Associated Press review of potential serious miscarriages of justice resulting from the use of one company’s gunshot detection system in the USA found that it ‘guards how its closed system works as a trade secret, a black box largely inscrutable to the public, jurors and police oversight boards’.

Even in court cases, it ‘has shielded internal data and records revealing the system’s inner workings, leaving defence attorneys no way of interrogating the technology to understand the specifics of how it works’.[59] The EU’s proposed AI Liability Directive will enable courts to order disclosure of evidence from a provider or user of a high-risk system subject to the AI Act, if the system is ‘suspected of having caused damage’ (Article 3(1)).[60]

Impact on companies, people and society

More broadly, AI systems can impose significant, diffuse external costs on a range of downstream actors, including intermediary companies and members of the public (such as the impact of a proliferation of disinformation on the quality of democratic debate). These impacts can extend beyond the direct users of such systems.

It can be difficult to attribute responsibility and legal liability for harms resulting from complex supply chains, given the potentially significant effects in some systems of even small changes by one node in the chain (the ‘many hands’ problem).[61]

Some emerging policy proposals have sought to address this issue. European policymakers have debated following ‘established practices in other sectors, such as pharmaceutical and chemical products, where producers’ liability is normally exempted in cases of misuse, but producers are increasingly prompted to think about “reasonably foreseeable misuses”.’[62]

In the broader case of platform regulation, researchers have suggested the idea of ‘cooperative responsibility’, with a division of responsibility between actors depending on ‘the capacities, resources, and knowledge of both platforms and users, but also on economic and social gains, incentives and arguments of efficiency, which vary from sector to sector and case to case.’[63]

In the closely related area of software liability, the US’s Biden administration has adopted a cybersecurity strategy which will ‘ask more of the most capable and best-positioned actors’.[64] The USA will move towards ‘preventing manufacturers and service providers from disclaiming liability by contract, establishing a standard of care, and providing a safe harbour to shield from liability those companies that do take reasonable measurable measures to secure their products and services’.[65]

Under EU law, scholars have noted that AI system providers are ‘not protected by the E-Commerce Directive from liability for their customers’ activities, since they are not mere conduits, caches or hosts, and anyway cannot be said to be operating without knowledge of their customers’ activities.’[66]

Other scholars warn that US and EU regulatory initiatives do not cover the risk of ‘black swan’ events, which are potentially catastrophic but low probability. These include ‘high-impact accident risks from general purpose AI systems; the uncontrolled proliferation and malicious use of AI systems; and applications of AI that could cause long-term systemic harm to social and political institutions’.[67]

To address these risks, policymakers and regulators need accelerated feedback loops along AI supply chains. These should identify harms, errors or issues, and should include a mechanism for feedback up the supply chain, for action by the relevant actor.

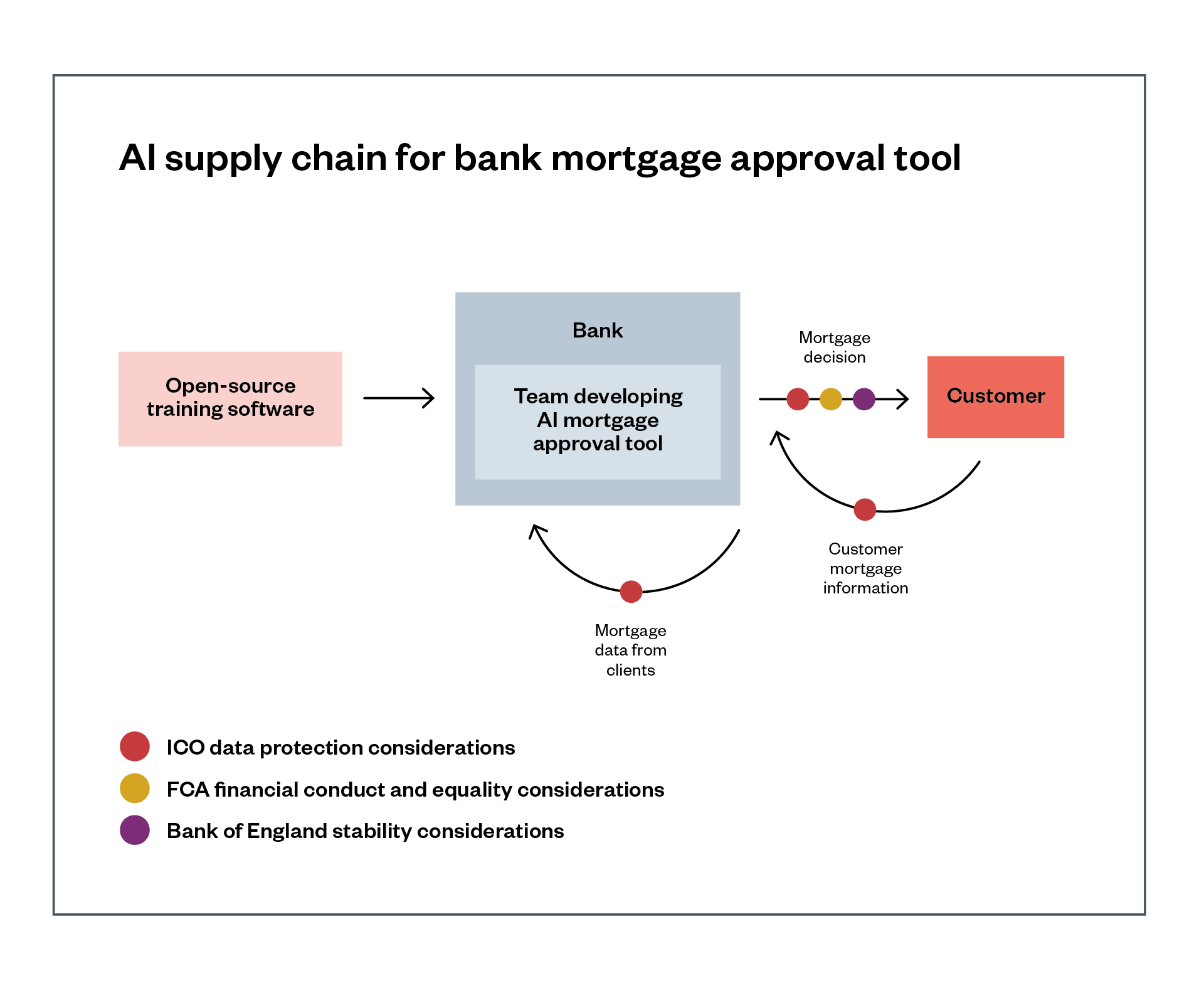

A regulator could put in place ex ante requirements for the design and testing of an AI system and conduct ex post evaluations of a system’s actual performance. For example, the Financial Conduct Authority (FCA) and/or Equalities and Human Rights Commission (EHRC) could place requirements in relation to fairness testing of mortgage assessment processes, and take ex post enforcement action if the system were found to still be biased. A regulated bank may need to work with its suppliers, potentially all the way up a supply chain, to ensure it can meet those requirements.

Research by the UK’s Centre for Data Ethics and Innovation found industry participants were keen to understand how the use of assurance tools such as impact assessments and certifications would help meet regulatory obligations, which would be a ‘key motivator for industry engagement’ in the continued use of these tools.[68]

Examples of different kinds of AI supply chains

In a key analysis, a research team at the Centre for European Policy Studies identified the following seven basic configurations making up AI supply chains, containing one, two or three developers/suppliers, in increasing order of complexity.[69] These supply chains vary in terms of who has the skills, power and access needed to assess and mitigate risks. In more complex supply chains with multiple suppliers or developers, these responsibilities can be less clear.

Throughout this chapter, we make a distinction between deployers of an AI system (those who actually use it) and developers of an AI system (those responsible for training and creating the system), although elements of these roles can merge. End users refer to people or businesses who are ultimately affected by a deployed system.

Below, we distinguish between three broad categories of AI supply chains:

- systems built in-house

- systems relying on an API

- systems built for a customer (or fine-tuned for one).

Systems built in-house

1. [One actor] A company develops AI systems in-house, using their own staff, software and data. This provides the company with the maximum level of control over the system, and clear responsibility to assess and mitigate risks. This configuration is most likely for specialised use cases with limited economies of scale in production and operation. This scenario implies that the company will have the staff needed to monitor and mitigate resulting risks, if those staff are retained beyond initial deployment (and this monitoring and evaluation is not outsourced to a third party).

Systems relying on an API

2. [Three or more actors] A developer company buys AI systems and components from several other companies, integrating them into a complete system of its own before supplying it to companies and end users directly or via an API. The developer/deployer company may have a high-level understanding of these systems, but may not have specialist AI expertise to monitor and mitigate resulting risks.

3. [Two actors] A company develops and trains an AI system which a second company accesses by sending queries via a limited API. This gives the developer a high level of control over how its system is used, including the potential to include technical as well as legal restrictions on prohibited uses. The customer/deployer company may have a high-level understanding of the system, but may not have specialist AI expertise to monitor and mitigate resulting risks, or to correct errors in the underlying model.

Systems built for a customer (or fine-tuned for one)

4. [Two actors] A company deploys a system custom-developed by another company under contract. This gives the deploying company a high level of control but presents some challenges in its ability to assess and monitor for risks. The contracting/developer company may specify permitted and prohibited uses of the system in the contract – although may have limited resources to monitor and enforce how the deploying company uses it. The customer/deployer company will be likely to have a high-level understanding of the system but may not have specialist AI expertise to monitor and mitigate resulting risks.

5. [Two actors] A developer company sells a complete AI system to a second company, which inputs its own data to enable the system to undertake additional training, and deploys it. This provides the first developer company with some higher level of control over the use of the resulting system. This scenario implies that the deploying company will have the staff needed to monitor and mitigate resulting risks, if they are retained beyond initial deployment.

6. A developer company sells a complete AI system, including direct access to the underlying model(s), which the second company can access and train using its own data. The developer has a lower level of knowledge and control over the use of the resulting model. This scenario implies that the deploying company will have the staff needed to monitor and mitigate resulting risks, if they are retained beyond initial deployment.

7. An AI system developer sells code to a deploying company, which uses it along with its own data to train and deploy a specific type of model. The system developer has a lower level of knowledge and control over the use of the resulting model by the deploying company. This scenario implies that the deploying company will have the staff needed to monitor and mitigate resulting risks, if they are retained by the deploying company beyond initial deployment.

Open-source components

In all these cases, companies may incorporate resources (including data, software and models) released under open-source licences. These licences grant companies and researchers the freedom to use, examine and modify these resources as they wish (discussed further below).

Where the use of such resources is business-critical, companies may choose to pay for external support from specialist providers of open-source tools such as Red Hat.[70] Where companies have the expertise, they will be able to modify open-source components directly to fix faults – and (if they choose, or are required to by the licence) contribute those fixes back to the community using and maintaining the component. This can create some ambiguity about which parties are providers/developers of an AI system and which are the deployers.

Assurance intermediaries

A final consideration for assigning responsibility for assessing and mitigating risks in an AI supply chain is whether a third-party organisation (such as a law firm, auditing agency or consultancy) has taken on a contractual obligation to manage some risks.

Third-party organisations can conduct independent certifications, audits and other processes to provide additional information about AI components, which would give assurances to the public, regulators and companies making use of them.[71]

Companies that perform these kinds of third-party evaluations of an AI system are a major part of the UK’s strategy for the development of trustworthy AI systems, with the Government’s Centre for Data Ethics and Innovation producing a roadmap towards an effective ecosystem conducting AI assurance.[72]

A conceptual framework for regulators to apply to AI supply chains in their sector

Policymakers and regulators must make difficult choices when determining where to assign distinct responsibilities for addressing the risks that can arise throughout an AI system’s supply chain. Below, we provide an initial conceptual framework that regulators can build from to determine where responsibilities might apply, which relies on four principles:

- Transparency: what information can each actor in a supply chain provide to enable risks to be identified and addressed.[73]

- Incentivisation: who is best incentivised to address these risks, and how can regulators create those incentives while minimising the overall costs of fixing problems.

- Efficacy: who is best positioned to most effectively address the risks that can emerge from an AI system (potentially multiple parties working together).

- Accountability: how can the use of legal contracts assign responsibilities, and what are the limitations of this method.

Transparency

To ensure effective regulation, regulators and policymakers will need to incentivise transparency and information flow across the supply chain.

This will allow information about, and evaluation of, systems and potential risks to travel up and down chains, supporting remediation of identified problems.

Mechanisms needed to ensure this flow of information, including via contractual terms and regulatory requirements on all actors in a supply chain, include:

- Transparency and accountability processes, including mechanisms such as model cards and datasheets which provide information on an AI model’s architecture and the data it was trained on. These ‘have the potential to increase transparency and accountability within the machine learning community, mitigate unwanted societal biases in machine learning models, facilitate greater reproducibility of machine learning results, and help researchers and practitioners to select more appropriate datasets for their chosen tasks’.[74]

- Certifications, audits, impact assessments, technical standards and similar mechanisms, which give organisations reliable evidence on the trustworthiness of AI systems.[75] These mechanisms seek to establish standardised processes for organisations to evaluate and monitor the behaviour of their AI systems, and other aspects that are important for fulfilling their regulatory duties, and are important to their end users. For example, the Centre for Data Ethics and Innovation found respondents from the connected and automated vehicle sector were keen to see the development of certification and kite-marking mechanisms ‘to demonstrate their compliance to customers’.[76]

- Requirements for sector-specific information sharing, like the UK’s Cyber Security Information Sharing Partnership. Similar efforts for AI could potentially be facilitated by regulators. Fora like these could also develop voluntary sectoral codes of conduct, building on those envisaged in the GDPR’s Articles 40 and 41, and developing standards for certifications.[77]

- Requirements to share data with insurers and regulators, that are modelled on other domains like cybersecurity. A US review found ‘a lack of data, a lack of expertise, and an inability to scale rigorous security audits have rendered cyber insurers unable to play a significant deterrent role in reducing cybersecurity incidents or exposure to cyber risks.’ The review highlights the approach of the Singaporean government in improving this issue.[78]

- Mechanisms for reporting and remedying faults. Researchers from Stanford’s Human-Centered AI project suggested: ‘If downstream users have feedback, such as specific failure cases or systematic biases, they should be able to publicly report these to the developer, akin to filing software bug reports. Conversely, if a model developer updates or deprecates a model, they should notify all downstream users’ including deployers or end users whose products and services rely on that model.[79] This would require a mechanism to keep track of all such users, which may not be straightforward where models or components may be downloaded and used without specific notification to their developer. However, the GDPR’s Article 17(2) attempts to deal with this issue (in this case, in terms of secondary use of personal data), by mandating that the main party takes reasonable steps to inform other parties.

More broadly, it may be most efficient for a government body to play a cross-sectoral role for information-sharing and learning.[80] In the Netherlands, for example, an algorithm regulator, situated within the Data Protection Authority, ‘will identify cross-sector risks related to algorithms and AI and will share knowledge about them with the other regulators. It will also, in cooperation with already existing regulators, publish and share guidance related to algorithms and AI with market parties, clients and governments.’[81]

Transparency in a supply chain: Supply Chain 1

A developer company sells a complete AI system to a second company, which inputs its own data to enable the system to undertake additional training. It then deploys the system. This provides the first developer company with some higher level of control over the use of the resulting system. This scenario implies that the deploying company will have the staff needed to monitor and mitigate resulting risks, if they are retained by the company beyond initial deployment.

——-

Using this example, the deployer has modified the system in ways that might explain some of its behaviour. However, to fully assess these risks, it is likely that the deployer will still require access to information about how the developer’s model was trained, what data it used, and how it was tested. Without this information, it will be challenging for either the deployer or the developer to assess this system holistically for potential risks and mitigate them. If the developer has used transparency mechanisms like model cards and datasheets to share critical information about how the underlying model was trained, that may be enough for the deployer to take on more responsibility for addressing or mitigating potential risks. Conversely, if the deployer spots an issue in the developer’s model, transparency mechanisms could enable that information to pass back up the supply chain and for the developer to address the risk.

Regulators can play an important role by incentivising information transfer and transparency practices in AI supply chains, while noting its limitations: making information transparent does not necessarily mean it will be acted upon.

These bodies can collaborate internationally in venues such as the Organisation for Economic Co-operation and Development and Council of Europe. Centre for Data Ethics and Innovation research found participants were keen for regulators to coordinate internationally, to encourage consistency and ‘to ensure the alignment of national and international standards objectives’.[82]

The EU’s AI Act will significantly rely on the production of technical standards for AI systems by bodies such as CEN and CENELEC. However, there are problems with regulatory regimes relying too heavily on technical standards with a significant impact on fundamental rights, which the private organisations producing have little experience and less legitimacy in managing[83] (attempts to improve this have faced significant obstacles[84]).

So-called ‘explainable’ AI (XAI) systems may help with allocation of responsibility, in that developing systems that ‘can explain their “thinking” will let lawyers, policymakers and ethicists create standards that allow us to hold flawed or biased AI accountable under the law.’[85] However, some researchers have noted the limitations of current XAI approaches, which demonstrate how explanations can be brittle and their meaning can change over time.[86]

Relatedly, research suggests that the most important accountability mechanism for AI systems will be preserving a snapshot of the state an AI system at the time when a harm occurs. AI systems can change with new inputs or tweaks to their architecture. This means saving time-stamped versions of systems so that the cause of harms can be examined later, as happens already with self-driving vehicles.[87]

Finally, regulators and policymakers must acknowledge the limits of transparency. Simply making information about AI systems, data or risks available does not mean that information will be acted on by relevant parties. Regulation must create proportionate incentives and penalties for them to do so.

Incentives, penalties and value chains

Regulators can also incentivise those who are best placed to address emerging risks in an AI supply chain. This approach reduces the risk of a ‘diffusion of responsibility’ across a supply chain, which can potentially lead to an insufficient consideration of risks by any actor.[88]

Current corporate practices often do not align with incentives to produce systems that prioritise societal benefit. In interviews with 27 AI practitioners, scholars found a ’deeply dislocated sense of accountability, where acknowledgement of harms was consistent but nevertheless another person’s job to address, almost always at another location in the broader system of production, outside one’s immediate team… Current responsible AI interventions, like checklists, model cards, or datasheets ask practitioners to map the technology to their end use. They attempt to put “out of scope” harms back in scope, but here we show how contemporary software production practice works against that attempt, leaving developers in a bind between countervailing cultural forces.’[89]

Moving away from thinking about a supply chain (the resources and actors that move a product from supplier to customer), towards a value chain model (which considers how value can be added along this supply chain for different actors) could be beneficial for regulators. This shift in thinking would encourage regulators to consider how to set particular incentives and penalties for addressing harms within a supply chain.

One suggested approach for strengthening collaboration to address harms along a supply chain is to use a communication tool, such as a model card or a datasheet, that can help create this shift towards a value chain. In this scenario, a model card ‘is not a one-and-done affair, but a place where partiality comes together and relations occur, making the relationship less like a supply chain and more like a value chain where collaborators are working together to collectively address the problem’.[90]

Incentives in a value chain: Supply Chain 2

An AI system developer sells components of an AI system (such as code) to a deploying company, which uses it along with its own data to train and deploy a specific type of model. The developer has a lower level of knowledge and control over the use of the resulting model by the deploying company. This scenario implies that the deploying company will have the staff needed to monitor and mitigate resulting risks, if they are retained by the deploying company beyond initial deployment.

—

In this example, regulators could focus on which actors – the developer and the deploying company – can be incentivised to proactively assess risks and take action to mitigate them. While the deployer in this case has more information about the final AI system’s architecture and data, some risks may be a result of an error or issue in the upstream developer’s code.

Regulators may want to incentivise both parties to undertake risk assessments and model evaluations, and engage in transparency mechanisms like datasheets or model cards. To do this, with statutory authority, regulators could require the deployer to ensure that both they, and any upstream developers, have undertaken these risk assessments.

Conversely, if they had legal authority to do so, regulators could place the onus on the upstream code developer. The upstream code developer could require the downstream deployer to only use this code if they agree to undertake specific risk assessments and evaluations of the final AI system.

In a UK scenario, it is likely that deployers will be UK-based and developers could be outside the jurisdiction of UK regulators, raising questions of enforcement against the latter.

Interfaces along a supply chain could be strengthened through the use of contracts that specify clear responsibilities and increase communication between non-developers: ‘Those playing customer roles in the supply chain might routinize asking suppliers for model cards, if the data it was trained on was properly consented, if crowd workers labelling the data were paid an appropriate wage, etc., which is commonplace in supply chains for physical goods’.[91]

Companies and regulators should pay attention to what information and/or responsibility could potentially be lost between the nodes in a supply chain, particularly as a supply chain becomes more complex. As some scholars have noted, ‘Thinking interstitially means moving away from the binary question of “do I have full control, or not?” and reasoning in probabilities and frictions. What does the technology make easier or harder, faster or slower, and what do contractual obligations or marketing messages make easier or harder?’.[92]

Efficacy up and down the AI supply chain

Regulators and policymakers must also consider who in a value chain can most easily identify risks, and who is best-placed to take action to mitigate them.[93]

In an open letter describing how the proposed EU AI Act’s ex ante legal requirements for assessing the risk and quality of an AI system should apply to complex AI systems, a group of European civil society organisations argue that shifting the obligations entirely to downstream users in a supply chain ‘would make these systems less safe.’[94]

This is because downstream companies are likely to lack the capacity, skills and access to make any changes. However, the letter also argues that downstream companies deploying the system are best placed to comply with other requirements of the act like ‘human oversight, but also any use case specific quality management process, technical documentation and logging, as well as any additional robustness and accuracy testing.’[95] This is because downstream deployers are closer in proximity to the final context in which the system is operating.

Considering efficacy in the supply chain: Supply Chain 3

A company develops and trains an AI system which a second company accesses by sending queries via a limited API. This gives the developer a high level of control over how its system is used, including the potential to include technical as well as legal restrictions on prohibited uses. The customer/deployer company may have a high-level understanding of the system, but may not have specialist AI expertise to monitor and mitigate resulting risks.

—

In this example, a regulator could consider which actor – the company developing the system or the deployer who uses it – can most easily identify and take actions to address risks. In this case, the developer is the only party with control over the system’s data and model architecture, meaning that any tweaks or changes to the system will have to be made by them.

The use of an API also gives the developer greater control to prohibit certain uses and even monitor actual uses by the deployer. By considering the principle of efficacy, a regulator could assign responsibility for identifying and addressing risks primarily to the developer – but this would require a regulator to have the necessary powers to do so.

Sometimes it may not be possible for the parties closest to the person affected by an AI system to deal with risks in a manageable way. This is not a new problem: in a case that later became influential across the USA, the New York Court of Appeals found in 1916 that Buick Motor Company ‘was not at liberty to put [a car] on the market without subjecting the component parts to ordinary and simple tests’ (MacPherson vs Buick, 217 NY 382) since ‘neither the consumer nor the local dealership’ they acquired it from ‘had meaningful insight into or control over the manufacturing process or material supply chain’.[96]

Some researchers have made a more recent assessment of the decision’s relevance to regulation of 5G networks, which also has echoes for AI regulation, although these products are at a much earlier stage of development: ‘The decision firmly placed the risk assessment and mitigation responsibility with the corporation in the best position to know details regarding assembled sub-systems and to control the processes that would address risk factors.’[97]

The US legal approach to AI is likely to evolve via ‘multiple specific (and often simultaneous) theories of liability that can be asserted in a products liability claim, including negligence, design defects, manufacturing defects, failure to warn, misrepresentation, and breach of warranty’.[98]

In US state law, ‘risk-utility tests have long been employed in products’ liability lawsuits to evaluate whether an alleged design defect could have been mitigated “through the use of an alternative solution that would not have impaired the utility of the product or unnecessarily increased its cost”.’[99]

Other researchers have expanded on these tests to cover more complex networks of liability, concluding that a ‘strict liability regime is the best-suited regime to apply when AI causes harm and will provide indicators to identify the entity who should be held strictly liable.’[100]

Another scholar suggests: ‘This same [risk utility] test can be applied in relation to AI as well; however, the mechanics of applying it will need to consider not only the human-designed portions of an algorithm, but also the post-sale design decisions’ and aspects of a system that automatically update as new data is fed into it.[101] These tests may offer a way for regulators to make clearer allocations of responsibility.

Finally, when considering efficacy, regulators may need to pay special attention to the jurisdiction where a company or supplier is operating. In some supply chains, it may be easier for regulators to create incentives for suppliers that sit within their own jurisdiction.

Accountability through contracts

Companies offering products and services to the market that contain or are based on AI components will generally bear the legal liability of doing so. Where courts or regulators fine or order compensation payments against such companies, they will in turn need to examine whether their suppliers should be responsible for some (or all) of these remedies.

As researchers have observed: ‘Apportioning blame within the supply chain will involve not only technical analysis regarding the sources of various aspects of the AI algorithm, but also the legal agreements among the companies involved, including any associated indemnification agreements.’[102]

Accountability through contracts: Supply Chain 4

[Two actors] A company deploys a system developed by another company under contract. This gives the deploying company a high level of control but presents some challenges in their ability to assess and monitor for risks. The contracting/developer company may specify permitted and prohibited uses of the system in the contract, although they may have limited resources to monitor and enforce how the deploying company uses these. The customer/deployer company will be likely to have a high-level understanding of the system, but may not have specialist AI expertise to monitor and mitigate resulting risks.

—

Using this example, the use of a contract can allow both parties to agree who is responsible for assessing, mitigating and being held accountable for certain risks. This contract can also create a structure for the flow of information (for example, documentation about the model or datasets used) between the two companies. In this case, the deployer may be best placed to monitor for potential errors or issues, but the developer could be contractually obligated to address those issues once reported back to them. However, it may be the case the developer is larger and more powerful than the deployer; and the deployer may have limited ability to negotiate custom contracts. Regulators must be attentive to these power imbalances.

At a minimum, those companies will need to use contract law to ensure they have all the data they need about the models and systems they make use of to do so effectively.[103] Japan’s government is encouraging this by issuing interpretive guidance on AI contracts.[104] In turn, companies’ suppliers will need to ensure they can do the same with all of the components making up the systems they are offering. Similarly, those contracts will need to provide mechanisms by which companies using AI can notify suppliers and request remediation of problems, all the way up the supply chain.

Debate in EU institutions has also highlighted ‘the belief that original AI developers will often be larger entities such as tech giants. These larger entities can be assumed to possess more resources and greater knowledge compared to the (arguably smaller) companies that will eventually become the providers, as they will place the high-risk AI systems on the market.’[105]

Upstream suppliers will often be larger and more powerful, and downstream deployers may have limited ability to negotiate custom contracts – as already seen with cloud services. This may leave small and medium-sized enterprises (SMEs) in a weak position to determine important aspects of contracts, which could, for example, determine joint controllership under data protection law (and thus create more liability).[106] Regulators must carefully consider these issues, and may find it beneficial to issue guidance on the use of contracts in AI supply chains.

Foundation models

Foundation models are worth considering as a separate element of an AI supply chain, as they can make it harder for regulators to assign responsibilities, and more challenging for sectoral regulators to identify the boundaries of their remit.

Foundation models, sometimes called ‘general purpose AI/GPAI systems’, are characterised by their training on especially large datasets to perform many tasks, making them particularly well suited for adaptation to more specific tasks through transfer learning. These models – especially those used for natural language processing, computer vision, speech recognition, simulation, and robotics – have become more foundational in many commercial and academic AI applications.’[107]

OpenAI’s chief scientist Ilya Sutskever has commented: ‘These models are […] becoming more and more potent. At some point it will be quite easy, if one wanted, to cause a great deal of harm with those models.’[108]

The supply chains of foundation models are similar to Supply Chain 3 described earlier, but differ in a crucial way – a single model can be adapted (or ‘fine-tuned’) for a wide variety of applications, which means:

- It becomes harder for upstream providers of a foundation model to understand how it will be used and to mitigate its risks.

- A much wider number of sectoral regulators will have to evaluate its use.

- A single point of failure by the developer (for example, an error in the training data) could create a cascading effect that causes errors for all subsequent downstream users. As European civil society groups have noted: ‘A single GPAI system can be used as the foundation for several hundred applied models (e.g. chatbots, ad generation, decision assistants, spambots, translation, etc.) and any failure present in the foundation will be present in the downstream uses.’[109]

In this section, we discuss some of the active, relevant debates in EU and US policy circles around how to regulate foundation models, and how the regulation of these systems is further complicated by the dynamics of ‘open source’ models.

Supply chains and market dynamics for foundation models

Some foundation models have been released on a cloud computing platform and made accessible to other developers via an API but (unlike Supply Chain 3 above) with the capability to fine-tune models using their own data. Many end users will also likely experience products built using foundation models, which may be built into existing products and services such as operating systems, web browsers, voice assistants and workplace software (such as Microsoft Office and Google Workspace).

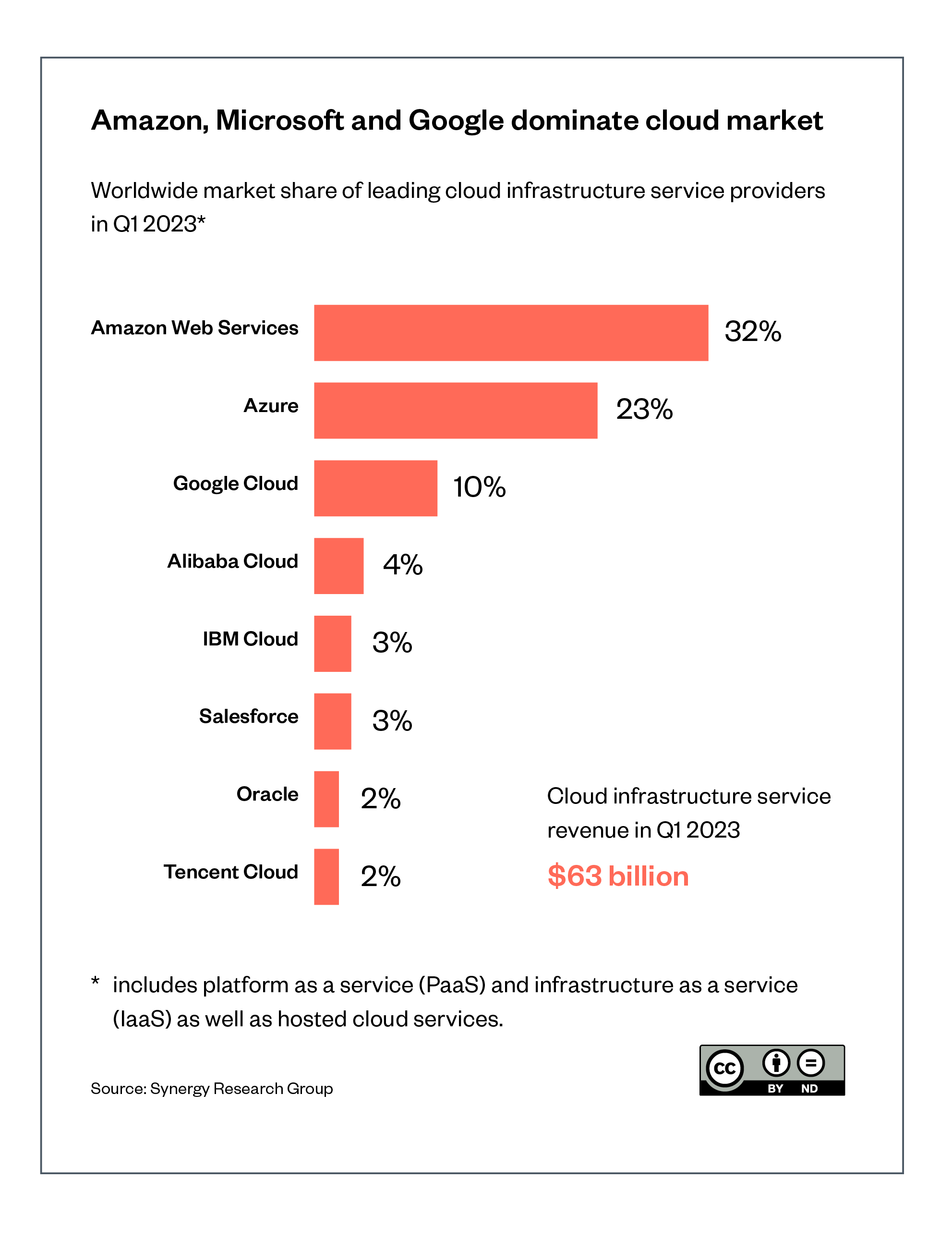

Figure 3 shows the current market structure of cloud computing, where Amazon and Microsoft (and to a lesser extent Google’s parent company, Alphabet) already have large market shares,[110] with substantial investments into machine learning research and development, and global computing and communications infrastructure.

It therefore seems likely that these three companies will also become highly successful in offering foundation models on their platforms. These companies already offer a range of AI/machine learning services to clients, such as Google’s AI Infrastructure and Microsoft’s Azure AI Platform. They are already able to ‘offer their services at lower cost, broader scale, greater technical sophistication, and with potentially easier access for customers than many competitors.’ [111]

Figure 3: Amazon, Microsoft and Google’s dominance of the global cloud market[112]

A product briefing leaked from OpenAI has described the platform Foundry, with tiers of pricing based on the computational load and model sophistication, starting from hundreds to millions of dollars per year.[113] Specialised microchip manufacturer NVIDIA has announced a similar platform.[114]

However, scholars have noted that ’the fact that AIaaS operates at scale as an infrastructure service does offer potential points of legal and regulatory intervention. Given AI services will likely be widely used in future, then regulating at this infrastructural level could potentially be an effective way to address some of the potential problems with the growing use of AI’.[115] This would mean focusing regulatory attention on the large providers of these foundational models.

A contrary view has been provided in an internal note allegedly leaked from Google, which assesses the gap between proprietary and open source AI models (discussed further below) is ‘closing astonishingly quickly’.

The note describes how Meta released the model weights for its large language model LLaMa in March 2023, which caused open source developers to quickly recreate the model and build novel applications from it. The note concludes: ‘The barrier to entry for training and experimentation has dropped from the total output of a major research organization to one person, an evening, and a beefy laptop.’[116]

How EU regulators are assigning responsibility to foundation models

Other jurisdictions (notably the various EU institutions developing the AI Act) are planning to go further than the UK and place (non-contractual) regulatory requirements on suppliers higher up the AI supply chain, including for foundation models and systems (especially where those are assessed as high risk).

These EU regulatory requirements could include transparency mechanisms around the data and model architecture of the model. This would enable academics, civil society groups and the media to more effectively scrutinise those systems for public-interest concerns such as fairness and non-discrimination. Regulators could also set baseline requirements for the information downstream developers building on foundation models must acquire from upstream developers of the system.

Considerations for assigning responsibility for foundation models

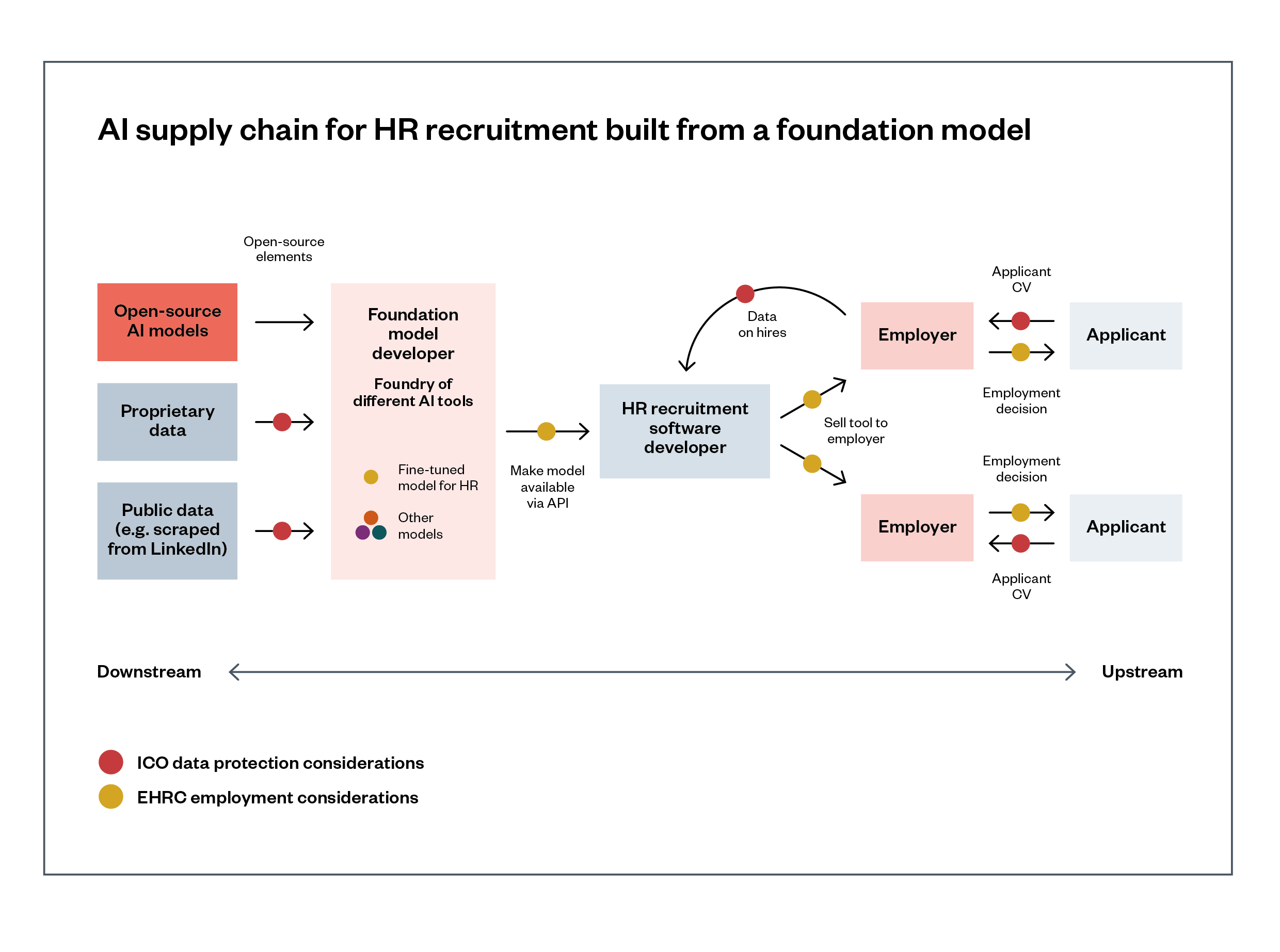

Below, we include an example supply chain of a foundation model that has been fine-tuned to provide an HR recruitment service. Figure 4 shows how different sectoral regulators may need to intervene at different points.

Figure 4: AI supply chain for HR recruitment built from a foundation model

Description of Figure 4: A foundation-model system developer has built a ‘foundry’ of AI tools using proprietary data, public and scraped data, and open-source AI models. An HR recruitment software developer has fine-tuned an HR-specific version of the foundation model via an API, using their own proprietary data from their clients, and created an HR recruitment online service. That service is then procured by downstream employers and recruitment agencies, which use it to contribute to decisions about potential job applicants. Employers and agencies must have regard to the EHRC’s Statutory Code of Practice on Employment

The foundation model provider updates the fine-tuned model’s data sheet and model card regularly, which the HR tool developer and its customers can retrieve on demand to show the Equality and Human Rights Commission (EHRC) they are not discriminating between candidates based on protected characteristics or proxies for them. These organisations can also share the data sheet and model card with the Information Commissioner’s Office (ICO) if there are questions about the fairness of processing, or other data protection law compliance issues.

Drawing on our framework and the principles of efficacy and transparency, it may be more efficient to deal with risks such as bias in suppliers that are higher upstream in supply chains, if their models/systems are being used by large numbers of downstream deployers and developers. Otherwise, ‘excluding [GPAI] models could potentially distort market incentives, leading companies to build and sell GPAI models that minimise their exposure to regulatory obligations, leaving these responsibilities to downstream applications’.[117]

In the HR supply chain example (Figure 4) above, this would mean placing requirements to evaluate for issues of bias and performance on the foundation model provider, as only they would have the access and proximity to assess for bias in that model.

That information could be made available for downstream developers via a model card. Similar obligations could be placed on the downstream HR recruitment service tool developer as they further refine the tool for use by specific employers.

There are concerns that SMEs building systems on top of foundation models will not have the resources to address many risks. This will present problems because ‘shifting responsibility to these lower-resourced organizations […] simultaneously exculpates the actors best placed to mitigate the risks of general purpose systems, and burdens smaller organizations with important duties they lack the resources to fulfil’.[118]

Locating responsibility with foundation model developers higher up the supply chain would enable them to ‘control several levers that might partially prevent malicious use of their AI models. This includes interventions with the input data, the model architecture, review of model outputs, monitoring users during deployment, and post-hoc detection of generated content.’

But it will not create a perfect system, rather: ‘the efficacy of these efforts should be considered more like content moderation, where even the best systems only prevent some proportion of banned content.’[119] Scholars suggest mechanisms that are already familiar from the EU Digital Services Act and UK Online Safety Bill: ‘notice and action mechanisms, trusted flaggers, and, for very large [generative AI model] developers, comprehensive risk management systems and audits concerning content regulation’[120]

The US Federal Trade Commission has announced a potentially far-reaching approach under its consumer protection authority, warning businesses creating generative AI systems they should ‘consider at the design stage and thereafter the reasonably foreseeable – and often obvious – ways it could be misused for fraud or cause other harm. Then ask yourself whether such risks are high enough that you shouldn’t offer the product at all.’[121]

It goes on to say that companies should take ’all reasonable precautions before [such a system] hits the market’, and adds: ‘Merely warning your customers about misuse or telling them to make disclosures is hardly sufficient to deter bad actors. Your deterrence measures should be durable, built-in features and not bug corrections or optional features that third parties can undermine via modification or removal. If your tool is intended to help people, also ask yourself whether it really needs to emulate humans or can be just as effective looking, talking, speaking, or acting like a bot.’

However, as AI software and models become more generalisable and have potentially more users, it becomes harder for their developers to consider customer-specific contexts and potential harms.

As scholars have pointed out, ‘AI practitioners encounter difficulty in engaging with downstream marginalized groups in large scale deployments. Even where a company is working directly with a client to develop a system for them, it may be unable to know what the customer later did with that system after the initial prototype phase, as follow up work does not scale’.[122] Some responsibilities for foundation model supply chains must be placed on deployers who are using the system in a specific context.

Other scholars suggest that systems such as ChatGPT are so general-purpose and usable in so many contexts they should be regulated as a specific category. This would place a duty on developers to actively monitor and reduce risks, in a similar manner to the obligations on platforms of the EU Digital Services Act (Article 34) and the UK Online Safety Bill.[123]

Scholars also suggest regulators should monitor the ‘fairness, quality and adequacy of contractual terms and instructions’ between providers and end-users, as is also considered for platforms under the Online Safety Bill.[124] Researchers suggest a specific category of regulation, which imposes limited transparency obligations on generative AI developers, but imposes the duty to implement a risk-management system on companies using such a system in high-risk applications.[125]

In the EU approach, so-called ‘providers’ (or developers) of high-risk AI systems would face ‘provisions on a risk management system, data governance, technical documentation, record keeping (when possible), transparency requirements, accuracy and robustness […] and go through the formal legal registration process, including performing an ex ante conformity assessment procedure, registering the high-risk AI system in the EU-wide database, establishing an authorised representative as a point of contact for regulators, and demonstrating conformity upon the request of regulatory agencies’.[126]

In 2022, the Council of the EU proposed that GPAI models are addressed as a stand-alone category of AI system. They propose the exact obligations to be placed on GPAI developers are decided via an ‘implementing act’ (a piece of secondary legislation that allows the European Commission to take 18 months to address this question.)

In May 2023, the European Parliament followed suit by also proposing tailored requirements for GPAI[127], ‘foundation models’[128] and ‘generative AI’.[129] They conceptualise foundation models and generative AI as sub-categories of GPAI, and set different rules for each:

- GPAI providers will be required to share information downstream in order to support downstream providers (e.g. fine-tuners) to comply, if deploying the GPAI in a high-risk area.

- Foundation model providers will have obligations at the design and development phase, and throughout the lifecycle. The requirements focus on risk and quality management, data governance measures, and testing the model for predictability, interpretability, corrigibility, safety and cybersecurity. These rules are aimed to be ‘broadly applicable’, i.e. independent of distribution channels, modality, or development method.

- Finally, generative AI providers will be compelled to follow transparency obligations to make clear to end users that they are interacting with an AI model, and will also have to document and make publicly available a summary of the use of training data protected under copyright law.

The Council of the EU and the European Parliament will therefore regulate GPAI – including foundation models and generative AI – in some form, but the exact requirements will be dependent on the inter-institutional ‘trilogue’ negotiations, which will conclude by the end of 2023 or early 2024.