Ethics and accountability in practice

Developing tools, mechanisms and processes that ensure AI systems work for people and society.

The context

From education to healthcare, finance to employment, AI and data-driven technologies are increasingly used to make important decisions about our everyday lives. As these technologies become more widespread throughout society, there is a pressing need to develop and implement new mechanisms, processes and tools to ensure they are developed with proper oversight and scrutiny.

In the last few years, public- and private-sector organisations have invested substantial resources in developing a large number of high-level AI ethics principles designed to address the risks AI systems may pose. But it remains to be seen how these principles can be translated into operational practices that developers, policymakers and others can implement.

‘Accountability’ is a common principle discussed in the AI ethics discourse, and can be defined both as a normative virtue that developers of AI systems strive for, and as an institutional mechanism for holding a developer of these systems to account.1 Ada’s work focuses on the latter definition, in which accountability refers to a relationship between an ‘actor’ and a ‘forum’. According to this definition of accountability, the actor must explain and justify their conduct to the forum, which can pose questions and pass judgment, and the actor may face consequences.

Ada’s approach

The Ethics and accountability in practice programme seeks to answer several key questions:

- What does meaningful accountability look like in the context of developing and integrating AI systems?

- How can we establish incentive structures that address AI ethics?

- Who are the different actors and forums when it comes to the research, development, procurement and deployment of AI systems?

- What kinds of consequences, methods, tools, mechanisms and governance processes can developers implement to create meaningful accountability with those impacted by those systems?

- Are these practices effective? What kinds of externalities and outcomes do they achieve in different contexts?

This programme uses a range of methods to answer these questions including surveys, convenings, interviews, ethnography and case studies. We work with a wide range of actors, including industry, practitioners, civil society members, academics, policymakers and regulators. Examples of our work include:

Defining key terms, synthesising research and conducting high-level surveys of the field

- In March 2020, we published Examining the Black Box, a seminal report outlining different methods for assessing algorithmic systems.

- In collaboration with AI Now and the Open Government Partnership, we co-published a survey of the first wave of algorithmic accountability policy mechanisms in the public sector.

- In December 2021, we published a survey of technical methods for auditing algorithmic systems.

Building evidence and case studies

- We worked with NHSX and several healthcare startups to develop an algorithmic impact assessment framework for firms to use when applying for access to a medical image dataset.

- We work with developers of AI systems to consider novel approaches to design that support the best interests of people and society. Examples include a project with the BBC that explored how recommendation engines can be designed with public-service values in mind, and a project exploring participatory methods for data stewardship.

Convening experts and building capacity

- We work with regulators, civil society organisations and members of the public to deepen their understanding of accountability practices. For example, we have pushed forward novel thinking on frameworks for transparency registers.

- We’ve convened several workshops to bring together experts from industry, academia and government around key topics. These include a workshop series on the challenges that research ethics committees are grappling with in their reviews of AI and data science research, and a workshop series on regulatory inspection and auditing of AI systems.

The impact we seek

Our Ethics and accountability in practice programme enables us to achieve our strategic goals in the following ways:

- We have anticipated transformative innovations in approaches to algorithmic accountability, publishing the first synthesis of emerging terms and practices, and the first global survey of algorithmic accountability policies in the public sector.

- We are rebalancing power over data and AI by developing, trialling and testing accountability mechanisms to ensure these technologies are designed and deployed in ways that consider their impact on a range of different communities, and that their benefits are fairly and equitably distributed.

- We are promoting sustainable data stewardship by suggesting concrete mechanisms for developing best practices in data stewardship – responsible and trustworthy data governance and practice.

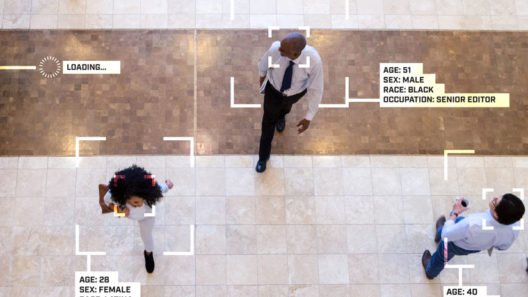

- We are interrogating inequalities caused by data and AI by keeping a clear focus on the emergence of bias and discrimination in AI and algorithmic systems, and suggesting sociotechnical mechanisms for identifying and mitigating the impact of AI systems on inequalities.

Projects

Exploring the role of public participation in commercial AI labs

What public participation approaches are being used by the technology sector?

Supporting AI research ethics committees

Exploring solutions to the unique ethical risks that are emerging in association with data science and AI research.

The ethics of recommendation systems in public service media

Exploring the ethical implications of public service media use of recommendation systems.

Exploring principles for data stewardship

An open set of case studies exploring principles for data stewardship.

Participatory data governance

Enabling a participatory approach to data use, access, sharing and governance where beneficiaries can control and oversee the use of their data.

Regulatory inspection of algorithmic systems

Establishing mechanisms and methods for regulatory inspection of algorithmic systems, sometimes known as ‘algorithm audit’.

Reports

Looking before we leap

Expanding ethical review processes for AI and data science research

Inform, educate, entertain… and recommend?

Exploring the use and ethics of recommendation systems in public service media

Algorithmic impact assessment: a case study in healthcare

This report sets out the first known detailed proposal for the use of an algorithmic impact assessment for data access in a healthcare context.

Technical methods for regulatory inspection of algorithmic systems

A survey of auditing methods for use in regulatory inspections of online harms in social media platforms

Participatory data stewardship

A framework for involving people in the use of data

Algorithmic accountability for the public sector

Research with AI Now and the Open Government Partnership to learn from the first wave of algorithmic accountability policy.

Inspecting algorithms in social media platforms

Joint briefing with Reset, giving insights and recommendations towards a practical route forward for regulatory inspection of algorithms

Examining the Black Box

Identifying common language for algorithm audits and impact assessments

Events

Inform, educate, entertain… and recommend?

Exploring the use and ethics of recommendation systems in public service media

Looking before we leap?

Ethical review processes for AI and data science research

Voices in the Code: A Story about People, Their Values, and the Algorithm They Made

David G. Robinson in conversation with Professor Shannon Vallor

A culture of ethical AI

What steps can organisers of AI conferences take to encourage ethical reflection by the AI research community?

From principles to practice: what next for algorithmic impact assessments?

We are convening experts from policy, industry, healthcare and AI ethics to discuss our recent case study and the future of AIAs.

Responsible AI research: challenges and opportunities

Exploring the foundational premises for delivering ‘world-leading data protection standards’ that benefit people and achieve societal goals

Redesigning fairness: concepts, contexts and complexities

Lessons learned from COVID-19: how should data usage during the pandemic shape the future?

Responsible innovation: what does it mean and how can we make it happen?

Exploring participatory mechanisms for data stewardship – report launch event

Involving people in the design, development and use of data and AI systems

From the Ada blog

Understanding AI research ethics as a collective problem

Changing the culture on AI-driven harms through Stanford University’s Ethics and Society Review

The Ada Lovelace Institute in 2022

Ada’s Director Carly Kind reflects on the last year and looks ahead to 2023

How will data-driven climate change technologies affect global equality?

The role of data-driven adaptation technologies in the climate crisis

Getting under the hood of big tech

Auditing standards in the EU Digital Services Act

Realising the potential of algorithmic accountability mechanisms

Seven design challenges for the successful implementation of algorithmic impact assessments

The role of the arts and humanities in thinking about artificial intelligence (AI)

Reclaiming a broad and foundational understanding of ethics in the AI domain, with radical implications for the re-ordering of social power

How does structural racism impact on data and AI?

Why we need to acknowledge that structural racism is a fundamental driver and cause of the data divide

Why PETs (privacy-enhancing technologies) may not always be our friends

How privacy-enhancing technologies can exacerbate rather than ameliorate technology and data governance concerns

Disambiguating data stewardship

Why what we mean by ‘stewarding data’ matters

Accountability for algorithms: a response to the CDEI review into bias in algorithmic decision-making

Reviewing bias is welcome, and stopping the amplification of historic inequalities is essential.

The ethical case for data fiduciaries

Trust, vulnerability and trustworthiness, and ensuring that the interests of big tech are aligned with the interests of their users.

Data stewardship: an archival perspective

Archives as datasets? What can we learn from archivists in data preservation and sharing?

Strength in numbers: a case study for building a data-based community in cystic fibrosis

Now, more than ever, data can be used to bring people together as well as numbers.

Working with the CARE principles: operationalising Indigenous data governance

Shifting the focus of data governance from consultation to values-based relationships to promote equitable Indigenous participation in data processes.

Algorithms in social media: realistic routes to regulatory inspection

Establishing systems, powers and capabilities to scrutinise algorithms and their impact.

Common governance of data: appropriate models for collective and individual rights

From Elinor Ostrom’s design principles for governing the commons to mechanisms that ensure collective and individual data rights: what steps to take?

Practising data stewardship in India, early questions

How could data stewardship help to rebalance power towards individuals and communities?

Meaningful transparency and (in)visible algorithms

Can transparency bring accountability to public-sector algorithmic decision-making (ADM) systems?

Doing good with data: what does good look like when it comes to data stewardship?

Data can help tackle the world’s biggest challenges, if we ask the right questions about governance and apply principles for stewardship.

Can algorithms ever make the grade?

The failure of the A-level algorithm highlights the need for a more transparent, accountable and inclusive process in the deployment of algorithms.