How does structural racism impact on data and AI?

Why we need to acknowledge that structural racism is a fundamental driver and cause of the data divide

5 May 2021

Reading time: 13 minutes

In recent weeks, the report of the Commission on Race and Ethnic Disparities (the ‘Sewell report’) has engendered a healthy and productive societal debate about inequality.

The Sewell report has provoked a range of responses from authoritative individuals and institutions: the Equalities and Human Rights Commission supports the report’s wish to learn from successes, while others have critiqued its underlying assumptions on a number of legitimate grounds.

The Joseph Rowntree Foundation both supports and criticises the report, for acknowledging labour market inequities but denying institutional racism. From the perspective of health, Sir Michael Marmot, the UK’s leading expert on health inequalities, and the British Medical Journal (BMJ) felt compelled by the report to reiterate that structural racism is both a fundamental driver and cause of ethnic disparities in health.

That contention holds true for data and AI, and in this article we set out the interdisciplinary evidence to demonstrate the unique, dynamic and intimate connections between structural racism and the cultural, historic and social components of data collection, management and use.

Our proposition is that, unless we acknowledge that structural racism is a fundamental driver and cause of the data divide – which affects who can benefit equitably and fairly from AI and algorithmic systems – we cannot evidence and understand inequalities caused by data.

At the Ada Lovelace Institute, we define a ‘data divide’ as the gap between those who have access to – and feel they have agency and control over – data-driven technologies that include AI and algorithmic systems, and those who do not. Here, we explain how ‘the data divide’ can engender an ‘algorithmic divide’ and explore implications for developers, policymakers and others developing data and AI systems that do not work equitably across society, and particularly work to disadvantage minoritised groups.

Beyond ‘objective’ data – data is determined by the power holder

Data is not, and has never been, neutral – or, as the Sewell report suggests, ‘objective’. Data practices have social practices ‘baked in’, so when we talk about data we are also talking about the sociotechnical structures around its capture, use and management. It is not possible to meaningfully engage with issues such as ‘missing data’ or unequal outcomes from data and AI systems unless we understand what structural racism is, and remain conscious of its durable and lasting effects.

Sociologists such as Ruha Benjamin expand on this by articulating how framings of ‘objectivity’ and ‘neutrality’ serve only to mask or conceal discriminatory practices when it comes to data. Angela Saini has written extensively about the pitfalls of assuming that research and data can be ‘objective’, informed by her study of eugenics, which posited itself as a neutral science.

We are always in the process of constructing data, and our relationship with it is dynamic and often unequal. The production and use of data reflects distributions of power. This means that what appear to be – or can be characterised as – choices about what is prioritised and valued by society, actually reflect power holders’ prioritisation of particular values or justification of particular uses of data that are conditioned by power asymmetries.

The process of ‘datafication’ of society (when individuals’ activity, behaviour and experiences are recorded in quantified data that makes them open to analysis) is controlled largely by power-holding institutions. They bring the means, technical capabilities and legitimacy to do so, compounded by historical precedents that people in those institutions have not traditionally reflected everyone in society.

Historically, this datafying role was held by the state, as with the collection of national statistics or the census. In a more contemporary context, organisations with technical capabilities such as large technology companies are also capable of generating data of this kind.

As Jeni Tennison at the Open Data Institute argues, ‘responsible and informed use of data requires a more critical approach than that suggested in the framing of the Sewell report.’ And this encompasses participation: far from lived experience undermining the ‘objectivity’ of data as the report suggests, lived experience can enhance our ability to analyse data systems critically from the perspective of those most affected by them – often racialised and minoritised groups and people.

Our work at the Ada Lovelace Institute recognises the centrality of participatory practices that engage people actively in the data systems that relate to them, as a central mechanism towards levelling the playing field, creating more equitable and inclusive data systems, and helping to build confidence in the effectiveness of data systems.

Structural racism engenders unequal data systems – and we cannot address one without recognising the other

The Oxford and Cambridge Dictionaries define structural racism as ‘laws, rules, or official policies in a society that result in and support a continued unfair advantage to some people and unfair or harmful treatment of others based on race’. This definition does not require some people to directly experience unfairness or harm – it is enough that some people experience an unfair advantage over others.

Choices about the collection and categorisation of data are politicised by this definition of structural racism – where data systems ‘see’ and affirm the identities of some groups more than others, that can be read as an extension of official policies or practices that can result in and support a continued unfair advantage.

The Aspen Institute takes a broader, historic and cultural lens, arguing that structural racism ‘identifies dimensions of our history and culture that have allowed privileges associated with “whiteness” and disadvantages associated with “color” to endure and adapt over time. Structural racism is not something that a few people or institutions choose to practice. Instead it has been a feature of the social, economic and political systems in which we all exist.’

This second definition points to how structural racism can be made invisible and can be unconsciously practised, through the assumptions or prevailing historic and cultural orthodoxies that are passed on from generation to generation. It is these cultural, historic and social aspects of data collection, management and use that might engender advantage or harm, for instance, the historic practices of over surveillance, criminalisation and marginalisation of minorities contribute in the contemporary era towards unequal data relations.

The report acknowledges the complexity that AI systems can introduce in critical sectors like hiring, policing, insurance pricing, loan administration, and others. We wholeheartedly agree with the report’s findings that AI systems ‘can improve or unfairly worsen the lives of people they touch’. One live example of historical bias in which a dataset is inherently biased against a particular ethnic group because it reflects inherent systemic biases is loan data. The long history of racial bias in the lending sector can evidence why a loan dataset is biased against Black lenders. This is not a question of making a dataset fairer to reflect reality – the dataset evidences a historic bias.

To take a contemporary example, choices about whether the recently conducted UK census ‘sees’ those who are intersex or non-binary, for instance, or about how the census chooses to ‘see’ people who are White, or Black, or from a range of faiths, are legacies of historic practices that are encoded in the data collected, but also open to revisiting and reconstruction going forward.

The Sewell report recommends revisiting the much-criticised, definitional term ‘BAME’. This could be an important step in improving the granularity of data collected about minority ethnic people and communities. But to truly and meaningfully revisit or reconstruct terms requires first understanding and acknowledging structural racism and how it impacts every context – and in this case, data and AI – in complex ways.

If we look at ethnicity data, categorising people as ‘BAME’ forces us to ‘see’ people in a particular way (as part of a ‘homogenous grouping’ – BAME, that is, not a self-defined grouping), by forcing us to not see them in another way (their uniqueness as different groups, communities and individuals that make up the plurality of modern-day Britain – with overlapping experiences and privileges, described by some as intersectional identities), and also by ‘overseeing’ or profiling this group – through the practice of ‘othering’ them from an imperfectly defined ‘White majority’.

How poorly designed data systems can hamper visibility and contribute to structural racism

The metaphor of ‘seeing’ helps us to understand how data systems can engender inequalities. A practice of seeing rarely takes place from a neutral standpoint – rather, who it is that sees and for what reasons and purposes will influence that which is seen – illustrating that data cannot be posited as ‘objective’ but almost always as contextual.

Take, for instance, the heckler that critiqued a policy expert, shouting, ‘That’s your bloody GDP, not ours!’, highlighting the gap between her lived experience and the rosy picture of economic growth depicted. That is not to say data lacks value – but rather, that we must approach the value of and benefit of data with a more critical lens if we are to use it most effectively.

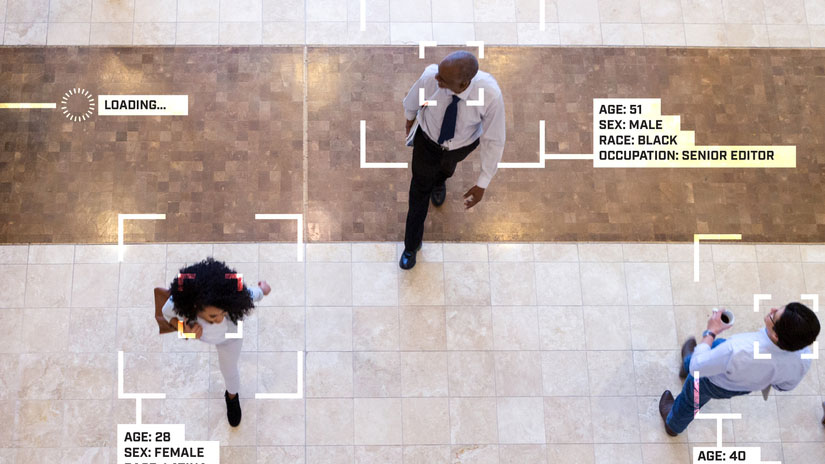

But we can take the metaphor further. A good example of how data systems can create and perpetuate inequality is through the differential practice and treatment of those who are seen and not seen, as well as the distance between the body who is doing the observing and those who are being observed. There is also a third category – those who are overseen by the data system.

Those who are considered part of the ‘majority’ are usually more seen – in a way that enhances their sense of agency, power and control, and confirms and reaffirms their sense of identity. This can be contrasted with those who are ‘unseen’, or otherwise ‘racialised’, ‘otherised’ or ‘minoritised’ groups – either because their sense of identity resists choices about categorisation and definition, or because choices about categorisation and definition only very loosely describes their sense of self – a point that became very apparent in our participatory research on the impact of biometrics on gender identity and ethnicity.

In some instances, where those who are ‘unseen’ are perceived to require active control, management or oversight, data-collection bodies are at risk of overcorrecting – resulting in previously ‘unseen’ groups being at risk of being subjected to increased practices of surveillance and control, thus being ‘overseen’ – as is highlighted by Virginia Eubanks’ work on automating inequality. Eubanks’ research highlights the risks of digital systems that disproportionately profile, target and act punitively towards the poor when embedded in public-service systems stretched by increased demand and scarce resources – what she describes as the ‘digital poorhouse’.

The ‘data divide’s onward effect – for AI and algorithmic systems

The Sewell report identifies three ways that bias can be introduced in an AI system – data, the model and decisions. But, in taking a narrow, technical lens specifically on AI, and separating it from a wider analysis of data systems, the report ignores several other forms of bias that can be introduced before data is collected, in data processing and in intentional choices about the model designs.

The report overlooks the effects of algorithmic bias on human decision-making – when a biased, data-trained algorithm produces a recommendation that affects a human decisionmaker’s judgement. For example, one study found a human judge’s decision making was heavily swayed by the introduction of a ‘risk score’ system, that itself was impacted by historic inequities in the data. This issue is addressed at length in a recent review into bias from the UK Government’s Centre for Data Ethics and Innovation. AI and algorithmic systems depend on good data for fair outcomes – meaning that the data divide risks creating an algorithmic divide. Despite these dependencies, the Sewell report frames the risks of AI to exacerbate racial disparities solely in terms of technical questions around fairness and bias. While these are certainly valid concerns, the report’s framing ignores much deeper questions of justice and power.

As the speculative fiction paper ‘A Mulching Proposal’ illustrates through a hypothetical case study of a technical system that turns the elderly into a nutritious mulched slurry, an AI system may be fair, transparent and accountable – yet still unjust, harmful and discriminatory. What the paper misses is the role that algorithmic systems play in entrenching structural racism, and what steps are needed for such systems to achieve racially just outcomes.

This role goes beyond whether a system is fair and speaks to questions about the intentions and goals of a system and whether those are serving the interests of the benefactors of that service. Is an algorithm to identify potential benefits fraud intended to help those who receive benefits, or to cut costs by cutting benefits to individuals who are suspected (but not proven) of fraud? Is the goal of an algorithm to determine ‘suspect nationalities’ and determine risk based on nationalities – adding the perception of an ‘objective’ lens to a discriminatory approach?

The Sewell report also overlooks system bias. Even if an algorithmic decision-making tool produces ‘fair’ results in lab settings at the time of an audit, it may be used in unfair or harmful ways in deployment. To take an example, facial recognition systems used in real situations demonstrate how the introduction of a system could lead it to be used in unintended ways that caused harmful outcomes. It is not just that facial recognition systems work worse on darker-skinned faces – even when fair, they may be used in ways to oversurveil communities of colour and the poor.

In police trials in Romford of a live facial recognition system, officers began to stop individuals who covered their face and prevented them from applying a scan. Audits and evaluations of fairness must look beyond technical specifications and at their ultimate impact in the wild. It is essential that studying impacts focuses on the equalities impacts of the system as a whole. Indeed many impact assessment frameworks focus on this holistic evaluation of a system’s impact.

Conclusions and next steps

The report’s conclusions that ‘an automated system may be imperfect, but a human system may be worse’ warrants particular critical scrutiny. Most, if not all, automated systems are affected by inequities of practice, which are historically and socially constructed by unequal practices of data collection.

Data collection is only one aspect of enabling effective data systems, and the ‘missing ethnicity data’ problem cannot be resolved by simply ‘filling in the gap’, or by taking a technical or mathematical approach, as at times the Sewell Report seems to suggest. Denying the existence or impact of structural racism won’t prevent it being encoded in the data that trains and underpins the data-driven systems that impact on people’s lives now.

Unless explicitly designed to audit for and address racial inequalities and bias, automated systems can reinforce structural and historic racism, and can also render already opaque human processes further opaque, adding in layers of technical jargon and complexity into a system that further removes the possibility for lay people to understand and challenge such decisions. We describe this at the Ada Lovelace Institute as a risk of creating a ‘double-glazed glass ceiling’ for racialised and minoritised groups to overcome.

In order to truly address this challenge, we would encourage public bodies to acknowledge historic and cultural reasons as to why it is difficult for some groups and underrepresented voices to share, and for UK institutions to collect, sensitive data such as ethnicity data. That, in some instances, data collection practices have been used to surveil, target or profile minorities. And in other instances, minorities may be excluded from the practice of data collection because of lack of access to physical infrastructure (like housing) or other digital infrastructure, as evidenced in our recent report, The data divide.

All these considerations point to the need for meaningful regulatory oversight and governance of data and AI systems, in ways that acknowledge structural racism and have measures built in to mitigate its effects in the future. These will include mechanisms for ensuring data collected and used for these systems reflects the wishes and intents of data subjects or those who are affected by those systems, as well as participatory and democratic measures of data governance that enable racialised and minoritised groups to exercise agency over the use of these systems – as many nations are doing, working with Indigenous communities to enable data sovereignty.

Related content

International Women’s Day: celebrating the Black women tackling bias in AI

This year’s International Women’s Day theme ‘Each for Equal’ has particular resonance for Black women who experience discrimination.

Black data matters: how missing data undermines equitable societies

We joined the CogXtra session on The Tech We Want: An Ethical Approach to Innovation.

Society and people: systemic racial injustice and cleaning up our own house

What should the Ada Lovelace Institute do to address systemic racial injustice?

Making visible the invisible: what public engagement uncovers about privilege and power in data systems

Lived experience insights at Citizens’ Biometrics Council and Community Voice workshops show technology can mediate power asymmetries and privilege.