Public demands stronger regulation for all biometric technologies, the Ada Lovelace Institute finds

The Ada Lovelace Institute's Citizens' Biometrics Council concludes that biometric technologies require stronger regulation

30 March 2021


Government, and other public bodies including the police, must act to make biometric technologies publicly acceptable, reports the Ada Lovelace Institute’s Citizens’ Biometrics Council.

- The Ada Lovelace Institute reports the findings of the first major convening in the world aimed at bringing public perspectives into debates about biometrics

- Throughout 2020, 50 members of the public attended a series of facilitated workshops with experts in biometrics to consider evidence and develop informed opinions about biometric technologies

- The Council concluded that biometric technologies require stronger regulation, tougher oversight and clearer standards for best practice

- The Ada Lovelace Institute argues that in order for biometric technologies to be trustworthy, they require public debate alongside legal and ethical inquiry.

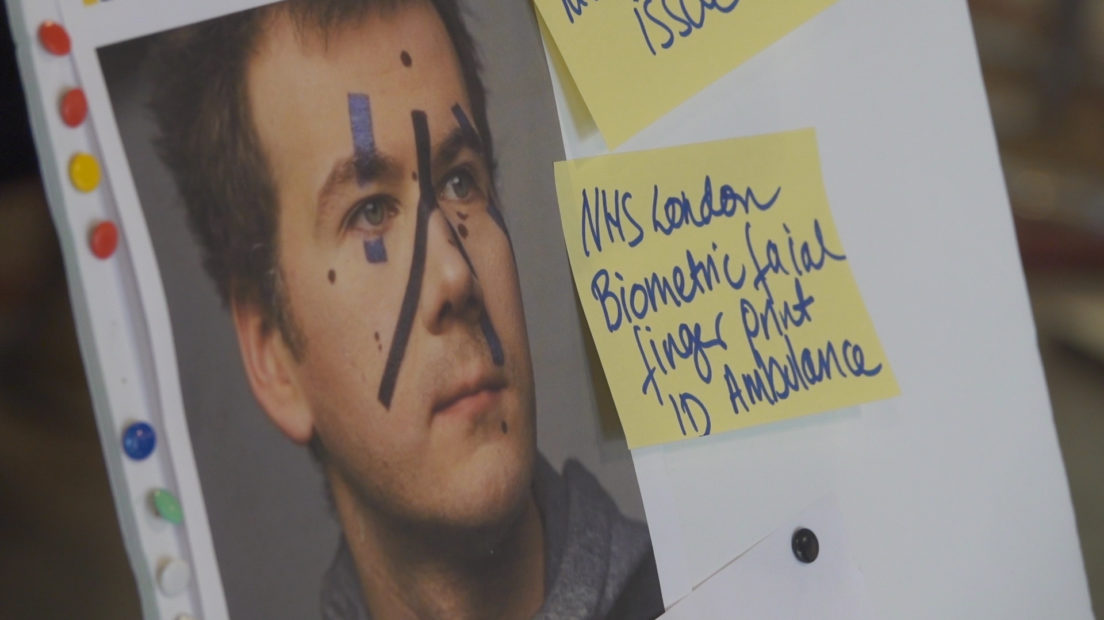

Biometric technologies, such as facial recognition, voice recognition and digital fingerprinting, offer benefits for public safety and services, but they also pose urgent questions about what constitutes their acceptable, responsible and proportionate use.

These questions arise from the controversial role biometrics have played in perpetuating discrimination and the infringement of civil liberties. Facial recognition, in particular, has been involved in targeting minority groups such as the Uighur Muslims in China and in misidentifying Black people, leading to their wrongful arrest. Critics argue that these technologies infringe on the privacy and data rights of everyone.

In the first major convening in the world that aimed to bring public perspectives into debates about biometrics, the Ada Lovelace Institute brought together 50 members of the public throughout 2020 to consider evidence and develop informed opinions about biometric technologies.

Through a series of facilitated workshops and in discussion with experts from industry, government, policing and campaign groups, the Council members deliberated on the question: ‘What is or isn’t ok when it comes to the use of biometric technologies?’

The Citizens’ Biometrics Council developed a set of recommendations to ensure that the use of biometrics is responsible and that irresponsible uses are prohibited. The recommendations centre on three themes:

- Stronger and clearer regulation for all biometric technologies.

- Tougher oversight with a single independent and authoritative body to oversee that regulation.

- Clearer standards for the responsible development and deployment of biometrics.

The Ada Lovelace Institute argues that the potential for oversurveillance, for prejudice and bias, or for the infringement of liberties is not sufficiently limited by existing practice or regulation. Addressing this challenge requires public debate alongside legal and ethical inquiry.

The findings of the Citizens’ Biometrics Council are published today in a report by the Ada Lovelace Institute, and will be discussed in an online, public event at 14:00 BST.

The Council is part of a programme of work on biometrics at the Ada Lovelace Institute. The programme also includes an independent legal review of the governance of biometric data (the ‘Ryder Review’), which is due to report later in 2021.

Carly Kind, Director, Ada Lovelace Institute, said:

‘To ensure technologies work for people and society, decisions about how they are developed and deployed must be informed by and aligned with public values and priorities. The Citizens’ Biometrics Council aims to understand the conditions necessary for the beneficial, trustworthy and proportionate use of biometric technologies, and is a critical part of the Ada Lovelace Institute’s work on biometrics. The Council’s recommendations are a formative piece of evidence alongside an independent legal review of the governance of biometrics technologies, commissioned by Ada, which will report later this year.’

Chris Todd, Assistant Chief Constable, West Midlands Police, and Board member, Ada Lovelace Institute, said:

‘Understanding the public’s concerns, hopes and expectations for biometric data and technology is paramount to answering the ethical questions they raise, from surveillance and discrimination to consent and proportionality. Addressing these issues so that biometrics can be deployed in beneficial ways, and to prevent harmful uses, cannot be done without the voices of the people who are affected and in whose service these tools are used.’

ENDS

Contact: Hannah Kitcher on 07969 209652 or hkitcher@adalovelaceinstitute.org

Imogen Parker, Associate Director for Policy, and Aidan Peppin, Senior Researcher for Public Engagement, both at the Ada Lovelace Institute, will be available for interview.

Notes

- The Ada Lovelace Institute (Ada) is an independent research institute and deliberative body with a mission to ensure data and AI work for people and society. It aims to: build evidence and foster rigorous research and debate on how data and AI affect people and society; convene diverse voices to create a shared understanding of the ethical issues arising from data and AI; and define and inform good practice in the design and deployment of data and AI.

- The Citizens’ Biometrics Council was delivered in partnership with public dialogue specialists, Hopkins Van Mil.

- Experts who spoke with the Council were:

  - Fieke Jansen, Cardiff Data Justice Lab

  - Griff Ferris, Big Brother Watch

  - Robin Pharoah, Encounter Consulting

  - Julie Dawson, Yoti

  - Ali Shah, Information Commissioner’s Office

  - Peter Brown, Information Commissioner’s Office

  - Zac Doffman, Digital Barriers

  - Kenny Long, Digital Barriers

  - Paul Wiles, former Biometrics Commissioner

  - Tony Porter, former Surveillance Camera Commissioner

  - Lindsey Chiswick, Metropolitan Police Service

  - Rebecca Brown, University of Oxford

  - Elliot Jones, Ada Lovelace Institute

  - Tom McNeil, West Midlands Police and Crime Commissioner’s Office

- The independent legal review of the governance of biometric data in the UK is led by Matthew Ryder QC, who is drawing on the guidance and advice of an independent advisory group of specialists in law, ethics, technology, criminology, genetics and data protection.
