Algorithmic impact assessment in healthcare

A research partnership with the NHS AI Lab exploring the potential for algorithmic impact assessments (AIAs) in an AI imaging case study.

This project proposes a process for algorithmic impact assessments (AIAs) in a healthcare context, which aims to ensure that algorithmic uses of public-sector data are evaluated and governed to produce benefits for society, governments, public bodies and technology developers, as well as the people represented in the data and affected by the technologies and their outcomes.

The final report sets out the first-known detailed proposal for the use of an algorithmic impact assessment for data access in a healthcare context – the UK National Health Service (NHS)’s proposed National Medical Imaging Platform (NMIP).

Project background

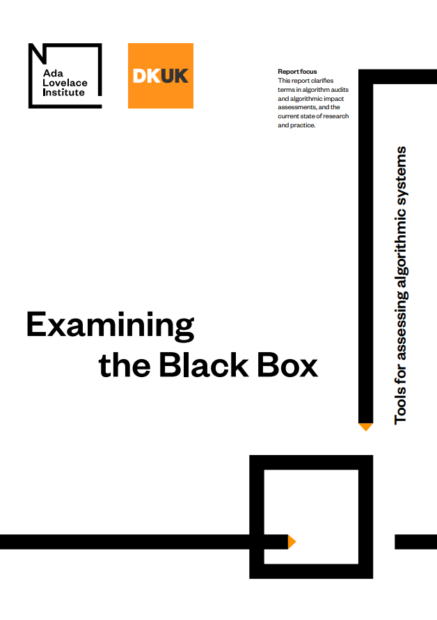

From automated diagnostics to personalised medicine, the healthcare sector has seen a surge in the application of data-driven technologies (including AI) to deliver health outcomes. To increase the rate of innovation in this space, public-health agencies have sought to make health data they control more accessible to researchers and private sector firms.

But while data-driven technologies have the potential to bring enormous benefits to healthcare, they also carry serious risks of harm to people, the environment and society, including perpetuating algorithmic bias, impeding transparency and public scrutiny, and creating risks to individual privacy.

There is therefore a pressing need to understand and mitigate the potential impacts of AI and data-driven systems in healthcare before they are developed and deployed, and to address serious risks, including, but not limited to:

- the perpetuation of ‘algorithmic bias’, exacerbating health inequalities by replicating entrenched social biases and racism in existing systems;

- inaccessible language or a lack of transparent explanations, which can make it hard for clinicians, patients and the public to understand the technologies, undermining public scrutiny and accountability;

- the collection of personal data, tracking and the normalisation of surveillance, creating risks to individual privacy.

Understanding the potential impacts of data-driven systems before they are developed is the best way to mitigate these potential harms. While there is a growing academic literature on how public-sector agencies can conduct algorithmic impact assessments (AIAs),[1] there remains a lack of case studies of these frameworks in practice, particularly in a healthcare setting.

Project overview

Building on Ada’s existing work on assessing algorithmic systems, this project identified a case-specific impact assessment model for use in the NHS AI Lab’s National Medical Imaging Platform (NMIP), a proposed large-scale dataset of high-quality chest X-rays (CXRs), MRIs, skin, ophthalmology and other images, to be made available to researchers and private companies to test, train and validate medical AI products.

The NHS plans to trial the use of this assessment as part of the work of the NHS AI Lab. The framework will be used in a pilot to support researchers and developers in assessing the possible risks of an algorithmic system before they are granted access to NHS patient data. By implementing this research, the NHS in England is set to be the first health system in the world to use this new approach to the ethical use of AI.

This research provides actionable steps on how the NHS AI Lab can implement the NMIP AIA, and has helped to inform wider AIA research and practice at the intersection of health and data science, in both the public and private sectors.

The NMIP is a novel case study as it sits at the intersection of two audiences for impact assessment: industry developers and the public sector. Existing research has tended to focus on either public sector procurers of systems (such as the Canadian AIA), or the developers of new technology themselves (such as use of human rights impact assessments in industry). This case study offers a new lens through which to examine and develop algorithmic impact assessment at the intersection of public sector and industry development.

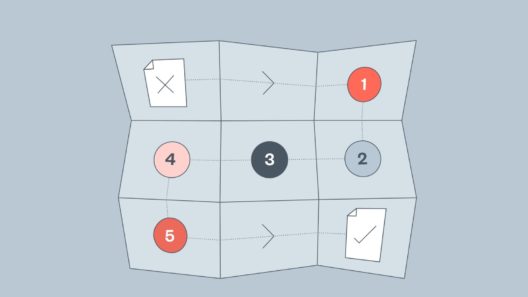

As outputs of this project, we have built a detailed, step-by-step algorithmic impact assessment model for the NHS AI Lab to use as a requirement of access to the NMIP, including the artefact of the AIA, the NMIP algorithmic impact assessment template. To support the implementation of this AIA, we also designed an end-to-end walkthrough of the process from the perspective of an NMIP applicant, the NMIP algorithmic impact assessment user guide.

In our report Algorithmic impact assessment: a case study in healthcare, we introduce the AIA process, a synthesis of the algorithmic impact assessment literature and the healthcare AI context, and ‘Seven operational questions’ for policymakers and researchers interested in building, adopting or mandating AIAs to consider. This study supplies a missing piece of practical evidence on the use of algorithmic impact assessments.

This research was funded by a grant of £66,000 from the NHS AI Lab and is governed by a memorandum of understanding (MOU), which is available here.

Further work and opportunities

If you are interested in this work, you can keep up to date on our website, follow us on Twitter and subscribe to our fortnightly newsletter.

Image credit: poba

Project publications

Algorithmic impact assessment: a case study in healthcare

This report sets out the first-known detailed proposal for the use of an algorithmic impact assessment for data access in a healthcare context

Algorithmic impact assessment: AIA template

This template is part of our wider work exploring algorithmic impact assessments (AIAs) in healthcare

Algorithmic impact assessment: user guide

This user guide is part of our wider work exploring algorithmic impact assessments (AIAs) in healthcare
