What is or isn’t OK when it comes to biometrics?

Reflections from round one of the Citizens’ Biometrics Council.

26 February 2020

The Ada Lovelace Institute is convening the Citizens’ Biometrics Council to bring public voice into the debate about biometrics technologies. In February the Council met for the first time to discuss biometrics technology and policy. Attending workshops in either Bristol or Manchester, council members began to explore the potential of new technologies and the societal concerns they raise.

Transparency, convenience, proportionality, accuracy, trust, truth. Data management, surveillance, human rights, and societal versus individual benefit. Good governance, marginalised groups, discrimination. Power. Social value, economic value, accessibility and inclusion. The future of our society and the impact on our environment.

Not everyone decides to spend their weekend discussing topics like this. But that’s exactly what the Citizens’ Biometrics Council did across two workshops in February. The list above captures only a snapshot of the breadth of issues explored, reflecting the sheer size of this topic and hinting at what priorities may emerge throughout this process.

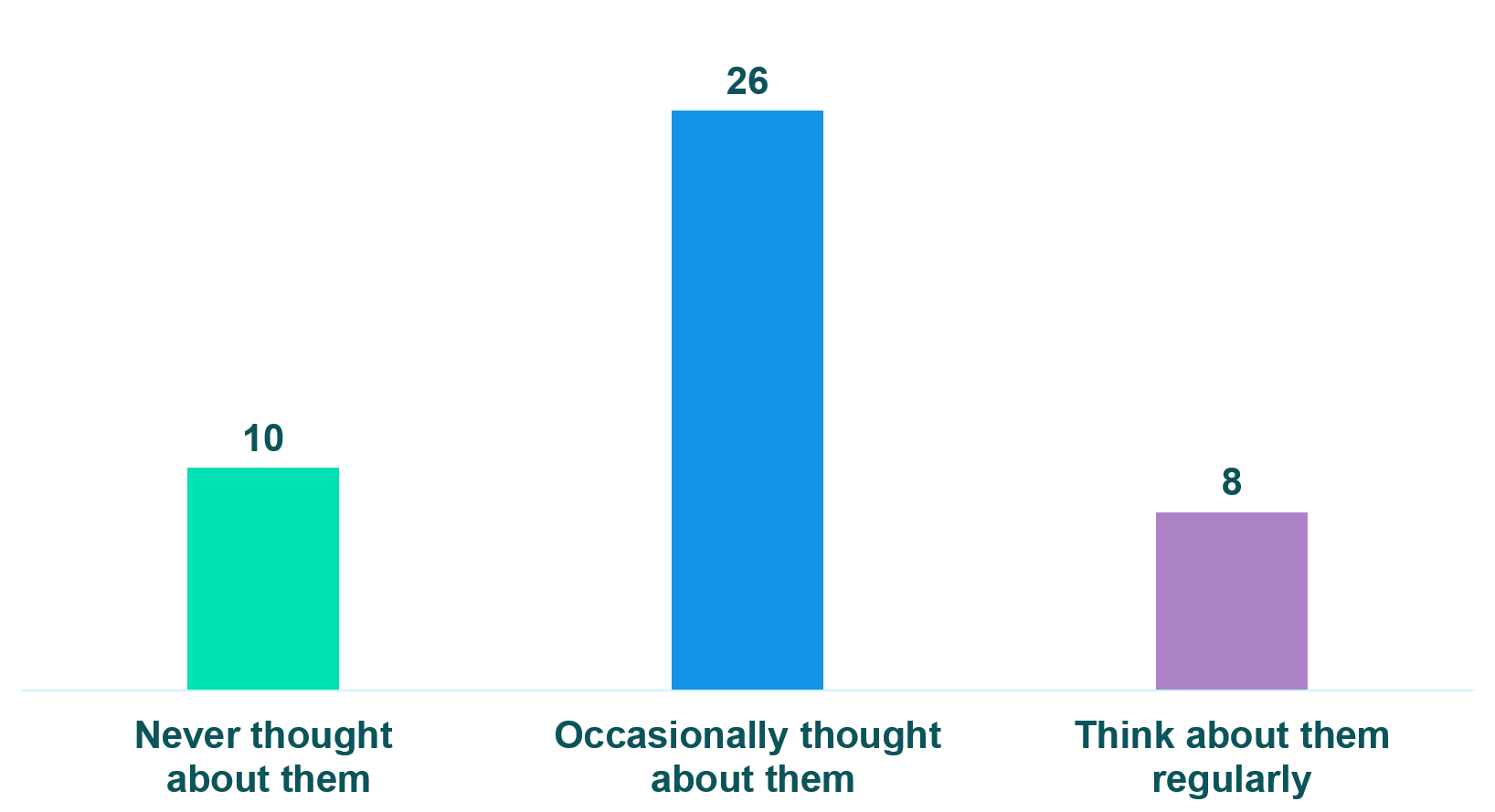

To what extent have you thought about biometric technologies before you were invited to join the Citizens’ Biometrics Council?

The majority of council members in both Bristol and Manchester had thought about biometric technologies only occasionally before joining the Citizens’ Biometrics Council.

These first workshops provided council members with an opportunity to consider biometrics technologies in depth; many hadn’t thought much about them before. Nevertheless, the council members brought constructive thoughtfulness to their task, often drawing from their own perspectives. Some shared how their children use fingerprints to pay for school meals, others how their bank is implementing voice-recognition security. This raised questions like: how will this affect young people’s skill with money? What do the banks do with the data being collected?

Council members also considered the experiences of others. Concerns were raised about how certain groups might be excluded – like those less digitally literate, or people living with disabilities – as we increasingly rely on standardised biometrics technologies. Where discrimination already exists in society, will these technologies reduce or exacerbate injustice and inequality?

More questions were raised than answered across the first round of workshops, but this was to be expected. Speakers – including representatives from the ICO, police forces, technology companies and civil society – addressed some of the council members’ questions as they arose. We gave more information about how the GDPR might apply in a particular context, for example, and provided added detail on how certain technologies work.

But as more subjective questions arose, they were turned back to the Council. The Hopkins Van Mil team facilitated the discussions, making sure everyone’s voice was heard as we considered these thorny questions. These are questions that regulators, technologists, policymakers and legal scholars don’t yet have answers to, such as:

- What’s the right level of information which must be provided when deploying voice recognition?

- What’s an acceptable use of facial recognition technology in a public space?

- What are the appropriate standards which must be upheld for emerging biometrics technologies?

The Citizens’ Biometrics Council exists to give public perspective on exactly these kinds of questions.

The key question

Our aim for the Council is to give an understanding of an informed public’s expectations, conditions for trustworthiness and red lines when it comes to the use of biometrics technologies and data. We turned this into a question and presented it to the Council, who fed back that it was too complex to be addressed. Quite rightly, they pointed out that the language was overly complicated and that it was at least two questions in one.

Among the feedback, they made suggestions for better questions, one of which is the title of this piece: simply, ‘what is or isn’t OK when it comes to the use of biometrics?’ Based on this feedback, we’re creating a clearer question to address.

What next?

There are two more rounds of workshops for the Citizens’ Biometrics Council. Round two will explore some of these complex societal, ethical and political dimensions in more depth. We’ll invite speakers who work in these fields, present case studies which provide tangible content to digest, and facilitate sessions where the Council can pick these issues apart.

Round three, in April, will give council members space to reflect on all the information and conversations, and articulate their own recommendations, advice or expectations. The format these outputs take will be up to the Council.

The questions council members raised in February show how tough the challenge is. But they also show that council members are up to the task. It’s an exciting start to an important conversation.

What happened at the workshops?

Unless you’ve taken part in a deliberative process, it can be hard to get a sense of what’s involved. Here’s an outline of what happened in February’s workshops.

Saturday

Participants arrived, registered, got their name badge and pack, and found their tables. Tea, coffee and biscuits were plentiful, of course.

Once everyone had arrived, the facilitation team (from Hopkins Van Mil) began introductions, discussed the ways of working, and made sure everyone felt comfortable. Then, we began the first group discussions. Council members had each brought an image, newspaper cutting, webpage or similar which had something to do with biometrics. On each table they shared what they’d brought and their initial thoughts and feelings. Facilitators made sure everyone could contribute and captured key points on post-its and flipcharts.

Then, the first batch of presentations. One from the Ada Lovelace Institute team, introducing what we mean when we say ‘biometrics.’ Another from the Information Commissioner’s Office, talking about the current legal frameworks for biometrics data and what work they’re doing to consider the future of biometrics. In Bristol, we also heard from professionals in law enforcement, describing their work to explore the use of voice and facial recognition technologies. The council members then discussed in groups, before a Q&A session with each of the speakers.

Council members spent the afternoon discussing the aim of the Council and the key questions they’d be tackling. They explored a set of case studies which illustrate some examples of where biometrics technologies are currently being developed and deployed, like online fraud detection, police facial recognition or age estimation in supermarkets.

Finally, they reflected on all they’d heard throughout the day.

Sunday

Sunday featured fewer presentations and left more space for council members to explore issues with each other. They started the morning by sharing thoughts on what makes technology trustworthy or not. Following this, the facilitators presented some fictional scenarios to illustrate different places biometrics technologies might appear: facial recognition to enter a gym, fingerprint security to access bank accounts, and gait analysis at airports. Council members discussed how each scenario made them feel, what concerned them and what benefits they could see.

Before lunch, there was a short session to think about data privacy from the different perspectives of data subjects and data processors. After lunch, the Council heard from two representatives of companies developing biometrics technologies: Yoti and Digital Barriers.

After the Q&A, there was time to reflect on the whole weekend and look ahead to the next round.

(In Manchester, we had to amend the plan to account for travel disruption caused by Storm Ciara.)

Interested to know more?

We’ll write posts about rounds two and three, and share more via our newsletter and social media.

Follow the conversation on Twitter: @adalovelaceinst #BiometricsCouncil
