Why the COVID-19 shielded patient list might both compound and address inequalities

Wicked problems in the use of data-driven systems

18 February 2021


A substantial expansion in the number of people in England asked to shield (those on the ‘shielded patient list’) illustrates that data-driven systems and technologies can be both unequal in their impacts and an equalising force.

Incorporating sensitive data about a person’s ethnicity, their likely experience of deprivation by location, their age and their weight alongside data about existing health conditions brings new risks (and potential benefits). It heightens the risk of discriminatory impact and adds to the ethical complexity of the data and its uses.

The shielded patient list is a record of patients thought to be at high risk of complications from COVID-19. In place for much of the pandemic, it has drawn largely on existing data about people’s clinical conditions to identify those defined as ‘extremely clinically vulnerable’, who are then placed on the list.

This week a new predictive model, the Oxford QCovid algorithm commissioned by Chief Medical Officer Chris Whitty, was used to expand the list. The model takes into account a patient’s existing health conditions and considers additional factors that have been identified as contributing to risk, drawing on broader data such as ethnicity and deprivation data (based on people’s postcode) to assess whether people are likely to need to shield. This expands the shielded patient list by 1.7 million, from an initial 2.3 million to 4 million people.

The resulting dataset has profound ramifications for those on the shielded patient list. Inclusion on the list means that millions of people are being asked by the Government, ostensibly for their own protection, to severely limit their movements. Unnecessary inclusion could therefore cause increased hardship for individuals and their families if they are advised to live more restrictively for months, with potential harms to mental health, physical fitness and more. Inclusion, in this case, leads to exclusion.

However, failing to include someone whose high risk has not been identified also means that individuals may unwittingly put themselves in greater danger, especially as restrictions lift. Beyond the risk of exposure to the virus, there is a particular risk that people who are digitally excluded, or not ‘visible’ on existing data systems, cannot access the support or vaccine prioritisation they would otherwise receive through being on the list.

This generates a tension, often described as a ‘wicked problem’, in the use of data-driven decision-making systems during the pandemic when it comes to targeting support at those who need it most. The intention is certainly for the benefits to outweigh the harms, but in practice there is a risk that the harms outweigh the benefits.

The issues raised by the shielded patient list amount to a ‘wicked problem’ because they surface the complex challenges that social policy issues generate. A wicked problem is a ‘problem that is difficult or impossible to solve because of incomplete, contradictory and changing requirements that are often difficult to recognise’. It demands a creative, system-wide and collaborative approach from a range of actors who understand the scale and nature of the challenge. There is no simple ‘quick fix’ or easy solution.

The wicked problem, or ‘tension’, at the heart of this approach to governing data about people becomes clear if we consider the risks of both unnecessary inclusion and unnecessary exclusion. Doing so shows the ‘double-edged sword’ nature of the challenges that Chris Whitty and his team face in using data in the pandemic response.

The risks of unnecessary inclusion

Inclusion on the shielded patient list means individuals are expected to work from home or, if they cannot, not to work at all. In compensation, the Government can offer statutory sick pay or employment and support allowance, and/or support through the Government-funded Access to Work programme. The Government has also confirmed that those on the list will be prioritised for vaccine allocation.

The obvious advantage for those shielding is significant: they are protected from a public-health perspective while accessing financial support during the pandemic. However, the shielded patient list also risks reinforcing and exacerbating health and social inequalities in two ways. First, it restricts clinically vulnerable people, and those identified as at risk due to their age, ethnicity, location and other predictive factors, from participating fully in the workplace (unless they are able to work from home). Second, it relies on what public bodies know about people’s identities, particularly sensitive characteristics such as race and age, to identify levels of risk.

The risks of unnecessary exclusion

On the flip side, there is the risk of missing data: there may be extremely clinically vulnerable people who are so marginalised and underrepresented that they fall below the ‘data line’ and are, in effect, invisible, and who are therefore not entitled to the kind of support and assistance that the shielded patient list provides.

The list also has great potential to target support at those identified as needing it most. Not being on the shielded patient list could mean missing out on vital support, illustrating how a data system can be a ‘double-edged sword’, at once addressing and contributing to existing social and healthcare inequalities.

However much the shielded patient list risks compounding and perpetuating inequalities, it is also a good example of an initiative that uses data to try to address them. Beyond support in lieu of employment and furlough support, people on the list can receive priority for vaccine allocation; proactive contact and additional support from mental health providers, if they are already receiving mental health support; and the option to request that their details be shared with supermarkets so they can receive priority online delivery slots.

The tensions revealed by examining this use case in detail demonstrate precisely the challenge facing policymakers and others using data to deliver wider societal benefit. On the one hand, it is imperative that those who are shielding are visible, so that they receive the support they need to protect both themselves and others. On the other hand, giving people visibility by placing them on the list may carry great risk, particularly if the process is inaccurate or overcorrects, overprofiles people based on their known ethnicity or likely experience of deprivation, and as a consequence restricts some people’s liberties disproportionately more than others’.

In thinking about approaches such as these, there is usually no obvious ‘right thing’ to do; rather, there are ‘better’ or ‘worse’ things to do. Wicked problems require a nuanced approach that acknowledges the necessarily incomplete nature of the policy and public-health response in any particular instance, and the exercise of complex judgement.

Wicked problems require ‘wicked solutions’, described by some as a ‘systems approach’ to tackling complex problems. Only when we take a broader systems view do we recognise these tensions as arising from the overall structures, patterns and cycles of the system, rather than from its individual elements or individual impacts.

In researching use cases such as the shielded patient list, we at the Ada Lovelace Institute will seek to gain a deeper understanding of these ‘wicked problems’: how they have played out in relation to the pandemic and the various data systems and technologies used in response. Specifically, we are working on a two-year research and public-engagement partnership with the Health Foundation, tackling health and social inequalities in the use of pandemic technologies and data-driven systems. We aim to take a nuanced, non-binary approach to the impact that technologies and data-driven systems can have on health and social inequalities.

As the shielded patient list illustrates, data-driven systems and technologies can be simultaneously (and in a contradictory fashion) unequal in their impacts, and an equalising force.


Related content

Living online: the long-term impact on wellbeing

The Ada Lovelace Institute and Health Foundation’s response to the House of Lords COVID-19 Committee’s call for evidence

Health datafication, digital phenotyping and the ‘Internet of Health’

A new report from the Ada Lovelace Institute explores the datafication of health, how it manifests and the consequences for people and society.

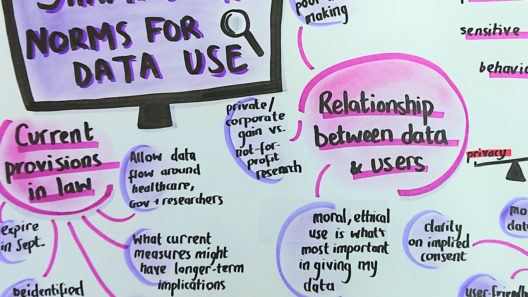

The foundations of fairness for NHS health data sharing

How do the public expect the NHS, and third-party organisations to steward their data?

A rapid online deliberation on COVID-19 technologies: building public confidence and trust

Considering the question: ‘What would help build public confidence in the use of COVID-19 exit strategy technologies?’