Regulate to innovate

A route to regulation that reflects the ambition of the UK AI Strategy

29 November 2021

Reading time: 165 minutes

A report which sets out how regulation provides clear, unambiguous rules, which are necessary if the UK is to embrace AI on terms that will be beneficial for people and society.

Regulate to innovate provides evidence for how the UK might develop an approach to AI regulation in line with its ambition for innovation – as set out in the UK AI Strategy – as well as recommendations for the Office for AI’s forthcoming White Paper on the regulation and governance of AI.

Executive summary

In its 2021 National AI Strategy, the UK Government laid out its ambition to make the UK an ‘AI superpower’, bringing economic and societal benefits through innovation. Realising this goal has the potential to transform the UK’s society and economy over the coming decades. But the rapid development and proliferation of AI systems also poses significant risks.

As with other disruptive and emerging technologies,1 creating a successful, safe and innovative AI-enabled economy will be dependent on the UK Government’s ability to establish the right approach to governing and regulating AI systems. And as the UK AI Council’s Roadmap, published in January 2021, states, ‘the UK will only feel the full benefits of AI if all parts of society have full confidence in the science and the technologies, and in the governance and regulation that enable them.’2

The UK is well placed to develop the right regulatory conditions for AI to flourish, and to balance the economic and societal opportunities with associated risks,3 but urgently needs to set out its approach to this vital, complex task.

However, articulating the right governance and regulatory environment for AI will not be easy.

By virtue of their ability to develop and operate independently of human control, and to make decisions with moral and legal consequences, AI systems present a uniform set of general regulatory and legal challenges concerning agency, causation, accountability and control. At the same time, the specific regulatory questions posed by AI systems vary considerably across the different domains and industries in which they might be deployed.

Regulators must therefore be able to find ways of accounting consistently for the general properties of AI while also attending to the peculiarities of individual use cases and business models. While other states and economic blocs are already in the process of engaging with tough but unavoidable regulatory challenges through new draft legislation, the UK has still to commit to its regulatory approach to AI.

In September 2021, the Office for AI pledged to set out the Government’s position on AI regulation in a White Paper, to be published in early 2022. Over the course of 2021, the Ada Lovelace Institute convened a cross-disciplinary panel of experts to explore approaches to AI regulation, and inform the development of the Government’s position. Based on this, and Ada’s own research, this report sets out how the UK might develop its approach to AI regulation in line with its ambition for innovation. In this report we:

- explore some of the aims and objectives that AI regulation might pursue alongside economic growth

- outline some of the challenges associated with regulating AI

- review the regulatory toolkit, and options for rules and system design, which address technologies, markets and use-specific issues

- identify and evaluate some of the different tools and approaches that might be used to overcome the challenges of AI regulation

- assess the institutional and legal conditions required for the effective regulation of AI

- raise outstanding questions that the UK Government will have to answer in setting out and realising its approach to AI regulation.

The report also identifies a series of conclusions for policymakers, as well as specific recommendations for the Office for AI’s White Paper on the regulation and governance of AI. To present a viable roadmap for the UK’s regulatory ecosystem, the White Paper will need to make clear commitments in three important areas:

- The development of new, clear regulations for AI.

- Improved regulatory capacity and coordination.

- Improved transparency standards and accountability mechanisms.

The development of new, clear regulations for AI

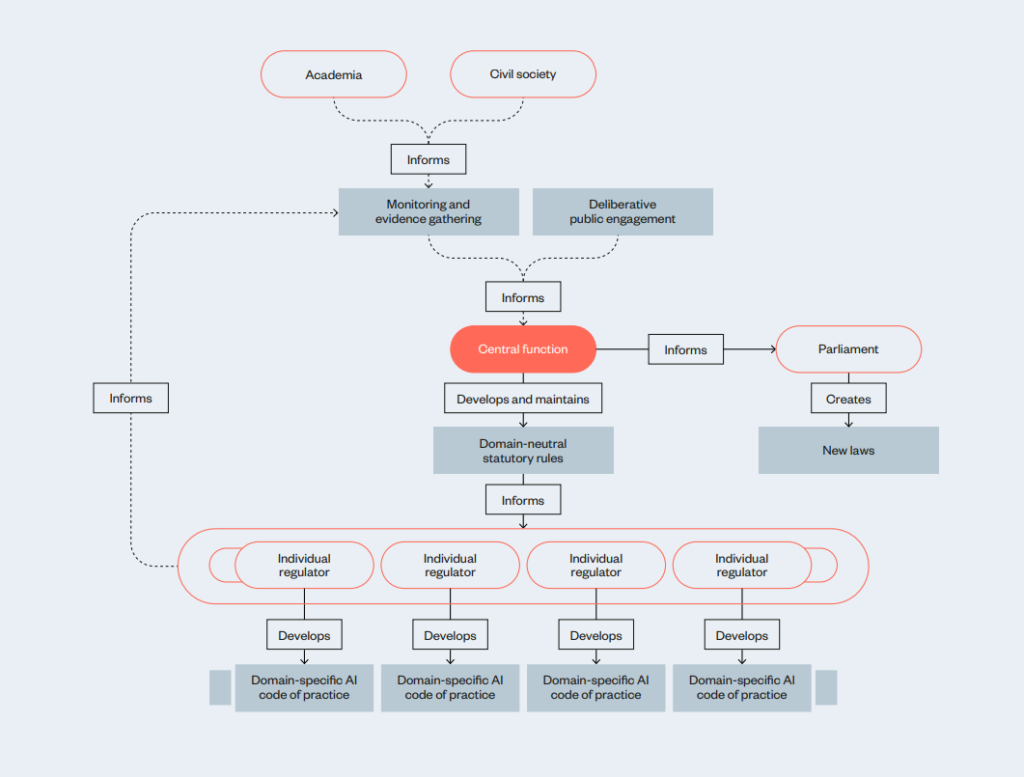

We make the case for the UK Government to:

- develop a clear description of AI systems that reflects its overall approach to AI regulation, and criteria for regulatory intervention

- create a central function to oversee the development and implementation of AI-specific, domain-neutral statutory rules for AI systems that are rooted in legal and ethical principles

- require individual regulators to develop sector-specific codes of practice for the regulation of AI.

Improved regulatory capacity and coordination

We argue that there is a need for:

- expanded funding for regulators to help them deal with analytical and enforcement challenges posed by AI systems

- expanded funding and support for regulatory experimentation and the development of anticipatory and participatory capacity within individual regulators

- the development of formal structures for capacity sharing, coordination and intelligence sharing between regulators dealing with AI systems

- consideration of what additional powers regulators may need to enable them to make use of a greater variety of regulatory mechanisms.

Improved transparency standards and accountability mechanisms

The impacts of AI systems may not always be visible to, or controllable by, policymakers and regulators alone. As such, regulation and regulatory intelligence gathering will have to be complemented by, and coordinated with, extra-regulatory mechanisms such as standards, investigative journalism and activism. We argue that the UK Government should consider:

- using the UK’s influence over international standards to improve the transparency and auditability of AI systems

- how best to maintain and strengthen laws and mechanisms to protect and enable journalists, academics, civil-society organisations, whistleblowers and citizen auditors to hold developers and deployers of AI systems to account.

Overall, this report finds that, far from being an impediment to innovation, effective, future-proof regulation will provide companies and developers with the space to experiment and take risks without being hampered by concerns about legal, reputational or ethical exposure.

Regulation is also necessary to give the public the confidence to embrace AI technologies, and to ensure continued access to foreign markets.

The report also highlights how regulation is an indispensable tool, alongside robust industry codes of practice and judicious public-funding and procurement decisions, for navigating the narrow path through the risks and harms these technologies present.

We propose that the clear, unambiguous rules that regulation can provide are necessary if the UK is to embrace AI on terms that will be beneficial in the long term.

To support this approach, we should resist the characterisation of regulation as the enemy of innovation: modern, relevant, effective regulation will be the brakes that allow us to drive the UK’s AI vehicle successfully and safely into new and beneficial territories.

Finally, this research outlines the major questions and challenges that will need to be addressed in order to develop effective and proportionate AI regulation. In addition to supporting the UK Government’s thinking on how to become an ‘AI superpower’ in a manner that manages risk and results in broadly felt public benefit, we hope this report will contribute to live debates on AI regulation in Europe and the rest of the world.

How to read this report

This report is principally aimed at influencing the emerging policy discourse around the regulation of AI in the UK, and around the world.

- In the introduction we argue that regulation represents the missing link in the UK’s overall AI strategy, and that addressing this gap will be critical to the UK’s plans to become an AI superpower.

- Chapter 1 sets out the aims and objectives UK AI regulation should pursue, in addition to economic growth.

- Chapter 2 reviews the generic regulatory toolkit, and sets out the different ways that regulatory rules and systems can be conceived and configured to deal with different kinds of problems, technologies and markets.

- Chapters 3 and 4 review some of the specific challenges associated with regulating AI systems, and set out some of the tools and approaches that have the potential to help overcome or ameliorate these difficulties.

- Chapter 5 articulates some general lessons for policymakers considering how to regulate AI in a UK context.

- Chapter 6 sets out some specific recommendations for the Office for AI’s forthcoming White Paper on the regulation and governance of AI.

If you’re a UK policymaker thinking about how to regulate AI systems

We encourage you to read the recommendations at the end of this report, which set out some of the key pieces of guidance we hope the Office for AI will incorporate in its forthcoming White Paper.

If you’re from a regulatory body

Explore the mechanisms and approaches to regulating AI, set out in chapter 3, which may provide some ideas for how your organisation can hold these systems more accountable.

If you’re a policymaker from outside of the UK

Many of the considerations articulated in this report are, despite the UK framing, applicable to other national contexts. The considerations for regulating AI that are set out in chapters 1, 2 and 3 are universally applicable.

If you’re a developer of AI systems, or an AI academic

The introduction and the lessons for policymakers section set out why the UK needs to take a new approach to the regulation of AI.

A note on terminology: Throughout this report, we use ‘regulation’ to refer to the codified ‘hard’ rules and directives established by governments to control and govern a particular domain or technology. By contrast, we use the term ‘governance’ to refer to non-regulatory means by which a domain or technology might be controlled or influenced, such as norms, conventions, codes of practice and other ‘soft’ interventions.

The terms ex ante (before the event) and ex post (after the event) are used throughout this document. Here, ‘ex ante’ regulation typically refers to regulatory mechanisms intended to prevent or ameliorate future harms, whereas ‘ex post’ refers to mechanisms intended to remedy harms after the fact, or to provide redress.

Introduction

In its 2021 National AI Strategy, the UK Government outlines three core pillars for setting the country on a path towards becoming a global AI and science superpower. These are:4

- investing in the long-term needs of the AI ecosystem

- supporting the transition to an AI-enabled economy

- ensuring the UK gets the national and international governance of AI technologies right, to encourage innovation and investment and to protect the public and ‘fundamental values’.5

As part of its third pillar, the strategy states that the Office for AI will set out a ‘national position on governing and regulating AI’ in a White Paper in early 2022. This report seeks to help the Office for AI develop this forthcoming position, setting out some of the key challenges associated with the regulation of AI, different options for approaching the task and a series of concrete recommendations for the UK Government.

The publication of the new AI strategy represents an important articulation of the UK’s ambitions to cultivate and utilise the power of AI. It provides welcome detail on the Government’s proposed approach to AI investment, and on its plans to increase the use of AI systems throughout different parts of the economy. Whether the widespread adoption of AI systems will increase economic growth remains to be seen, but it is a belief that underpins this Government’s strategy, and this paper does not seek to explore that assumption.6

The strategy also highlights some areas that will require further policy thinking and development in the near future. The chapter ‘Governing AI effectively’ notes some of the challenges associated with governing and regulating AI systems that are top of mind for this Government, and surveys some of the different regulatory approaches that could be taken, but remains agnostic on which might work best for the UK.

Instead, it asks whether the UK’s current approach to AI regulation is adequate, and commits to set out ‘the Government’s position on the risks and harms posed by AI technologies and our proposal to address them’ in a White Paper in early 2022. In making a commitment to set out the UK’s ‘national position on governing and regulating AI’, the Government has set itself an ambitious timetable for articulating how it intends to address one of the most important gaps in current UK AI policy.

This report explores how the UK’s National AI Strategy might address the regulation and governance of AI systems. It is informed by the Ada Lovelace Institute’s own research and analysis into mechanisms for regulating AI, as well as two expert workshops that the Institute convened in April and May 2021. These convenings brought together academics, public and civil servants, regulators and representatives from civil society organisations to discuss:

- How the UK’s regulatory and governance mechanisms may have to evolve and adapt in order to serve the needs and ambitions of the UK’s approach to AI.

- How Government policy can support the UK’s regulatory and governance mechanisms to undergo these changes.

The Government is already in the process of drawing up and consulting on plans for the future of UK data regulation and governance, much of which relates to the use of data for AI systems.7 While relevant to AI, data-protection law does not holistically address the kinds of risks and impacts AI systems may present, and is not enough on its own to provide AI developers, users and the public with the clarity and protection they need to integrate these technologies into society with confidence.

Where work to establish a supporting ecosystem for AI is already underway, the Government has so far focused primarily on developing and setting out AI-governance measures, such as the creation of bodies like the Centre for Data Ethics and Innovation (CDEI), with less attention and activity on specific approaches to the regulation of AI systems.8

To move forward, the UK Government will have to answer fundamental questions on the regulation of AI systems in the forthcoming White Paper, including:

- What should the goal of AI regulation be, and what kinds of regulatory tools and mechanisms can help achieve those objectives?

- Do AI systems require bespoke regulation, or can the regulation of these systems be wrapped into existing sector-specific regulations, or a broader regulatory package for digital technologies?

- Should regulating AI involve creating a single AI regulator, or empowering existing regulatory bodies with the capacity and resources to regulate these systems?

- What kinds of governance practices work for AI systems, and how can regulation incentivise and empower these kinds of practices?

- How can regulators best address some of the underlying root causes of the harms associated with AI systems?9

For the UK’s AI industry it will be vital that the Government provides actionable answers to these questions. Creating a world-leading AI economy will require consistent and understandable rules, clear objectives and meaningful enforcement mechanisms.

Other world leaders in AI development are already establishing regulations around AI. In April 2021, the European Commission released a draft proposal for the regulation of AI (part of a suite of regulatory proposals for digital markets and services), which proposes a risk-based model for establishing certain requirements on the sale and deployment of AI technologies.10 While this draft is still subject to extensive review, it has the potential to set a new global standard for AI regulation that other countries are likely to follow.

In August 2021, the Cyberspace Administration of China released a set of draft regulations for algorithmic systems,11 which include requirements and standards for the design and use of algorithmic systems and the kinds of data they can use.12 The USA is taking a slower and more fragmented route to the regulation of AI, but is also heading towards establishing its own approach.13

Throughout 2021, the US Congress has introduced several pieces of federal AI governance and data-protection legislation, such as the Information Transparency and Personal Data Control Act, which would establish requirements similar to those of the EU GDPR.14 In October 2021, the White House Office of Science and Technology Policy announced its intention to develop a ‘bill of rights’ to ‘clarify the rights and freedoms [that AI systems] should respect.’15 Moreover, it is looking increasingly likely that geostrategic considerations will push the EU and the USA into closer regulatory proximity over the coming years, with European Commission President von der Leyen having recently pushed for the EU and the USA to collaborate on the promotion and governance of AI systems.16

As the positions of the world’s most powerful states and economic blocs on the regulation of AI become clearer, more developed and potentially more aligned, it will be increasingly incumbent on the UK to set out its own plans, or risk getting left behind. Unless the UK carves out its own approach towards the regulation of AI, it risks playing catch-up with other nations, or having to default to approaches developed elsewhere that may not align with the Government’s particular strategic objectives. Moreover, if domestically produced AI systems do not align with regulatory standards adopted by other major trade blocs, this could have significant implications for companies operating in the UK’s domestic AI sector, which could find themselves excluded from non-UK markets.

As well as trade considerations, a clear regulatory strategy for AI will be essential to the UK Government’s stated ambitions to use AI to power economic growth, raise living standards and address pressing societal challenges like climate change. As the UK has learned from a variety of different industries, from its enduringly strong life-sciences sector,17 to recent successes in fintech,18 a clear and robust regulatory framework is essential for the development and diffusion of new technologies and processes. A regulatory framework would ensure developers and deployers of AI systems know how to operate in accordance with the law and protect against the kinds of well-documented harms associated with these technologies,19 which can undermine public confidence in their development and use.

The need for clear and comprehensive AI regulation is pressing. AI is a complex, novel technology whose benefits have yet to be evenly distributed to all members of society, yet there is a growing body of evidence around the ways it can cause harm.20 Across the world, AI systems are increasingly being used in high-stakes settings such as determining which job applicants are successful,21 what public benefits residents are eligible to claim,22 what kind of loan a prospective financial-services client can receive,23 or what risk to society a person may potentially pose.24 In many of these instances, AI systems have not yet been proven capable of addressing these kinds of tasks fairly or accurately; in others, they have not been properly integrated into the complex social environments in which they have been deployed.

But building such a regulatory framework for AI will not be easy. By virtue of their ability to develop and operate independently of human control, and to make decisions with moral and legal consequences, AI systems present a uniform set of regulatory and legal challenges concerning agency, causation, accountability and control.25

At the same time, the specific regulatory questions posed by AI systems vary considerably across the different domains and industries in which they might be deployed. Regulators must find ways of accounting consistently for the general properties of AI, while also attending to the peculiarities of individual use-cases and business models.

In these contexts, AI systems raise unprecedented legal and regulatory questions, such as their ability to automate morally significant decision-making processes in ways that can be difficult to predict, and their capacity to develop and operate independently of human control.

AI systems are also frequently complex and opaque, and often fail to fall neatly within the contours of existing regulatory systems – they either straddle regulatory remits, or else fall through the gaps in between them. And they are developed for a variety of purposes in different domains, where their impacts, benefits and risks may vary considerably.

These features can make it extremely difficult for existing regulatory bodies to understand if, how and in what manner to intervene.

As a result of this ubiquity and complexity, there is no pre-existing regulatory framework – from finance, medicine, product safety, consumer regulation or elsewhere – that can be reworked to readily apply to an overall, cross-cutting approach to UK AI regulation, nor any that look capable of playing such a role without substantial modifications. Instead, a coherent, effective, durable regulatory framework for AI will have to be developed from first principles, borrowing and adapting regulatory techniques, tools and ideas where they are relevant and developing new ones where necessary.

Difficulties posed by the intrinsic features of AI systems are compounded by the current nature of the business practices of many companies that develop AI systems. The developers of AI systems often fail to sit neatly within any one geographic jurisdiction, and face few existing regulatory requirements to disclose details of how and where their systems operate. Moreover, the business models of many of the largest and most successful firms that develop AI systems tend towards market dominance, data agglomeration and user disempowerment.

All this makes the Office for AI’s task of using their forthcoming White Paper to set out the UK’s position on governing and regulating AI a substantial challenge. Even if the Office for AI limits itself to the articulation of a high-level direction of travel for AI regulation, doing so will involve adjudicating between competing values and visions of the UK’s relationship to AI, as well as between differing approaches to addressing the multiple regulatory challenges posed by the technology.

Over the course of 2021, the Ada Lovelace Institute has undertaken multiple research projects and convened expert conversations on many of the issues relevant to how the UK should approach the regulation of AI.

These included:

- two expert workshops exploring the potential underlying goals of a regulatory system for AI in the UK, the different ways it might be designed, and the tools and mechanisms it would require

- workshops considering the EU’s emerging approach to AI regulation

- research on algorithmic accountability in the public sector and on transparency methods of algorithmic decision-making systems.

Drawing on the insights generated, and on our own research and deliberation, this report sets out to answer the following questions on how the UK might go about developing its approach to the regulation of AI:

- What might the UK want to achieve with a regulatory framework for AI?

- What kinds of regulatory approaches and tools could support such outcomes?

- What are the institutional and legal conditions needed to enable them?

As well as influencing broader policy debates around AI regulation, it is our hope that these considerations are useful in informing the development of the Office for AI’s White Paper, the publication of which presents a critical opportunity to help ensure that regulation delivers on its promise to help the UK live up to its ambitions of becoming an ‘AI superpower’ – and ensuring that such a status delivers economic and societal benefits.

Expert workshops on the regulation of AI

In April and May 2021, the Ada Lovelace Institute (Ada) convened two expert workshops, bringing together academics, AI researchers, public and civil servants and civil-society organisations to explore how the UK Government should approach the regulation of AI. The insights gained from these workshops have, alongside Ada’s own research and deliberation, informed the discussions presented in this report.26

These discussions were initially framed around the approach of the UK’s National AI Strategy to AI regulation. In practice, they became broader dialogues about the UK’s relationship to AI, what the goals of Government policy regarding AI systems should be and the UK’s approach to their regulation.

- Workshop one: Explored the underlying goals and aims of UK AI policy, particularly with regards to regulation and governance. A key aim here was to establish what long-term objectives, alongside economic growth, the UK should aspire to achieve through AI policy.

- Workshop two: Concentrated on identifying the specific mechanisms and policy changes that would be needed for the realisation of a successful, joined-up approach to AI regulation. Participants were encouraged to consider the challenges associated with the different objectives of AI policy, as well as broader challenges associated with regulating AI. They then discussed what regulatory approaches, tools and techniques might be required to address them. Participants were also invited to consider whether the UK’s regulatory infrastructure itself may need to be adapted or supplemented.

The workshops were conducted under the Chatham House Rule. With the exception of presentations given by expert participants, none of the insights produced by these workshops are attributed specifically to individual people or organisations.

Expert participants are listed in full in the acknowledgements section at the end of the report.

Representatives from the Office for AI also attended the workshops as observers.

UK AI strategies and regulation

The UK Government’s thinking on the regulation of AI has developed significantly over the past five years. This box sets out some of the major milestones in the Government’s position on the regulation and governance of AI over this time, with the aim of putting the 2021 UK AI Strategy into the context of recent history.

2017-19 UK AI strategy

The original UK AI strategy (called the UK AI Sector Deal), published in 2017 and updated in 2019, makes relatively little mention of the role of regulation.27 In discussing how to build trust in the adoption of AI and address its challenges, the strategy is limited to calls for the creation of the Centre for Data Ethics and Innovation (CDEI) to ‘ensure safe, ethical and ground-breaking innovation in AI and data-driven technologies’. The report also calls for the creation of the Office for AI to help the UK Government implement this strategy. The UK Government has since created guidance on the ethical adoption of data-driven technologies and the mitigation of potential harms, including guidelines, developed jointly with the Alan Turing Institute, for ethical AI use in the public sector,28 a review into bias in algorithmic decision-making29 and an adoption guide for privacy-enhancing technologies.30

2021 UK AI roadmap

In January 2021, the AI Council, an independent-expert committee that provides advice to the Office for AI on the AI ecosystem and its AI strategy implementation, published a roadmap with 16 recommendations for how the UK can develop a revised national AI strategy.31

The roadmap states that:

- A revised AI strategy presents an important opportunity for the UK Government to develop a strategy for the regulation and governance of AI technologies produced and sold in the UK, with the goal of improving safety and public confidence in their use.

- The UK must become ‘world-leading in the provision of responsible regulation and governance’.

- Given the rapidly changing nature of AI’s development, the UK’s systems of governance must be ‘ready to respond and adapt more frequently than has typically been true of systems of governance in the past’.

The Council recommends ‘commissioning an independent entity to provide recommendations on the next steps in the evolution of governance mechanisms, including impact and risk assessments, best-practice principles, ethical processes and institutional mechanisms that will increase and sustain public trust’.

2021 Scottish AI strategy

Some parts of the UK have further articulated their approach to the regulation of AI. In March 2021, the Scottish Government released an AI strategy that includes five principles that ‘will guide the AI journey from concept to regulation and adoption to create a chain of trust throughout the entire process.’32 These principles draw on the Organisation for Economic Cooperation and Development’s (OECD’s) five complementary values-based principles for the responsible stewardship of trustworthy AI. These are:33

- AI should benefit people and the planet by driving inclusive growth, sustainable development and wellbeing.

- AI systems should be designed in a way that respects the rule of law, human rights, democratic values and diversity, and they should include appropriate safeguards – for example, enabling human intervention where necessary – to ensure a fair and just society.

- There should be transparency and responsible disclosure around AI systems to ensure that people understand AI-based outcomes and can challenge them.

- AI systems must function in a robust, secure and safe way throughout their life cycles and potential risks should be continually assessed and managed.

- Organisations and individuals developing, deploying or operating AI systems should be held accountable for their proper functioning in line with the above principles.

The Scottish strategy also calls for the Government to ‘develop a plan to influence global AI standards and regulations through international partnerships’.

2021 Digital Regulation Plan

In July 2021, the Department for Digital, Culture, Media and Sport (DCMS) released a policy paper outlining its thinking on the regulation of digital technologies, including AI.34 The paper provides high-level considerations, including the establishment of three principles that should guide future plans for the regulation of digital technologies. These are:

- Actively promote innovation: Regulation should ‘be designed to minimise unnecessary burdens on businesses’, be ‘outcomes-focused’, backed by clear evidence of harm, and consider the effects on innovation (a concept the paper does not define). The Government’s approach to regulation should also consider non-regulatory interventions like technical standards first.

- Achieve forward-looking and coherent outcomes: This section states regulation should be coordinated across regulators to reduce undue burdens and avoid duplicating existing regulation. Regulation should take a ‘collaborative approach’ by working with businesses to test out new interventions and business models. Approaches to regulation should ‘address underlying drivers of harm rather than symptoms, in order to protect against future changes’.

- Exploit opportunities and address challenges in the international arena: Regulation should be interoperable with international regulations, and policymakers should ‘build in international considerations from the start’, including via the creation of international standards.

The Digital Regulation Plan includes several mechanisms for putting these principles into practice, including plans to create more regulatory coordination and cooperation, engagement in international forums, and plans to embed these principles across government. However, this policy paper stops short of providing specific recommendations, approaches or frameworks for the regulation of AI systems, and provides only a broad set of considerations that are top of mind for this Government. It does not address specific regulatory tools, mechanisms or approaches the UK should consider towards AI, nor does it provide specific guidance for the overall approach the UK should take towards regulating these technologies.

2021 UK AI Strategy

Released in September 2021, the most recent UK AI Strategy sets out three pillars to lead the UK towards becoming an AI science superpower:

- investing in the long-term needs of the AI ecosystem

- supporting the transition to an AI-enabled economy

- ensuring the UK gets the national and international governance of AI technologies right to encourage innovation and investment, and to protect the public and fundamental values.

The first two pillars of the strategy include plans to launch a National AI Research and Innovation (R&I) programme to align funding priorities across UK research councils, plans to publish a Defence AI Strategy articulating military uses of AI, and other measures to expand investment in the UK’s AI sector. The third pillar, on governance, includes plans to pilot an AI Standards Hub to coordinate UK engagement in AI standardisation globally, fund the Alan Turing Institute to update guidance on AI ethics and safety in the public sector, and increase the capacity of regulators to address the risks posed by AI systems. In discussing AI regulation, it refers to embedding values such as fairness, openness, liberty, security, democracy, the rule of law and respect for human rights.

Chapter 1: Goals of AI regulation

Recent policy debates around AI have emphasised cultivating and utilising the technology’s potential to contribute to economic growth. This focus is visible in the newly published AI strategy’s approach to regulation, which stresses the importance of ensuring that the regulatory system fosters public trust and a stable environment for businesses without unduly inhibiting AI innovation.

Although it is prominent in the current Government’s AI policy discussions, economic growth is just one of several underlying objectives for which the UK’s regulatory approach to AI could be configured. As experts in our workshops pointed out, policymakers may also, for instance, want to stimulate the development of particular forms of AI, single out particular industries for disruption by the technology, or avoid particular consequences of the technology’s development and adoption.

Different underlying objectives will not necessarily be mutually exclusive, but prioritisation matters – choices about which to explicitly include and which to emphasise will have a significant effect on downstream policy choices. This is especially the case with regulation, where new regulatory institutions, approaches and tools will need to be chosen and coordinated with broader strategic goals in mind.

The first of the two expert workshops identified and debated desirable objectives for the regulation of AI in addition to economic growth – and explored what adopting these would mean, in concrete terms, for the UK’s regulatory system.35

A clear point of consensus among the workshop participants, and an important recommendation of this report, was that the Government’s approach to AI must not be focused exclusively on fostering economic growth, and must consider the unique properties of how AI systems are developed, procured and integrated.

Rather than concentrating exclusively on increasing the rate and extent of AI development and use, expert participants stressed that the Government’s approach to AI must also be attentive to the technology’s unique features, the particular ways it might manifest itself, and the specific effects it might have on the country’s economy, society and power structures.

The need to take account of the unique features of AI is a reason for developing a bespoke, codified regulatory approach to the technology – rather than accommodating it within a broader, technology-neutral, industrial strategy. Perhaps more importantly, though, workshop participants were keen to highlight that many of AI’s most significant opportunities can only be utilised, and many of its risks can only be mitigated, with the help of an overarching Government strategy that sets out intentions for the use, regulation and governance of these systems. By attending to AI’s specific properties, it will be easier for Government to steer the beneficial development and use of AI to address societal challenges, and for the potential risks posed by the technology to be effectively managed.

In light of the specific challenges and opportunities AI poses, expert participants identified four additional objectives that might be usefully built into any AI strategy (outlined below). A common theme cutting across the discussion was that the UK should build in as an objective the protection and advancement of human rights and societally important values, such as agency, democracy, the rule of law, equality and privacy.

Objective 1: Ensure AI is used and developed in accordance with specific values and norms

A common refrain among participants was that the UK AI policy should articulate a set of high-level norms or ethical principles to govern the country’s desired relationship with AI systems. As several experts pointed out, other countries’ national AI strategies, including that of Scotland, have articulated a set of values.36 The purpose of these principles would be to inform specific policy decisions in relation to AI, including the development of regulatory policy and sector-specific guidance and best practice.

The articulation of clear, universal and specific values in a prominent AI-policy document (such as an AI strategy) can help establish a common language and set of principles that could be referenced in future policy and public debates regarding AI. In this instance, the principles would set out how the Government should cultivate and direct the development of the technology, as well as how its use should be governed. They may also extend to the programming and decision-making architecture of AI systems themselves, setting out the values and priorities the UK public would want the developers and deployers of AI systems to uphold when putting them in operation.37

In its latest AI strategy, the UK Government makes brief references to several values, including fairness, openness, liberty, security, democracy, the rule of law and respect for human rights.38 While the values and norms articulated by a national AI strategy would not themselves be able to adjudicate between competing interests and views on specific questions, they do create a framework for weighing and justifying particular courses of action. Medical ethics is a good example of the value of a common language and framework, as it provides medical practitioners with a toolkit to think about different value-laden decisions they might encounter in their practice.39 In the AI strategy, the values are not well defined enough to underpin this function, nor are they translated into clearly actionable steps to support their being upheld.

There are already a number of AI ethics principles developed by national and international organisations that the UK could draw from to further define and articulate its values for AI regulation.40 One example mentioned by expert participants is the Organisation for Economic Cooperation and Development’s (OECD’s) five complementary values-based principles for the responsible stewardship of AI,41 which the Scottish AI strategy draws on heavily.42

Another idea raised by the expert participants was that UK AI policy (and industrial strategy more broadly) should aim to establish and support democratic, inclusive mechanisms for resolving value-laden policy and regulatory decisions. Here, expert participants suggested that deliberative public-engagement exercises, such as citizens’ assemblies and juries, could be used to set high-level values, or to inform particularly controversial, value-laden policy questions. In addition, participatory mechanisms should be embedded in the development and oversight of governance approaches to AI and data – a topic explored in a recent Ada Lovelace Institute report on participatory data stewardship.43

Expert participants noted that sustained public trust in AI will be vital, and the existence of such processes could be a useful means of ensuring that policy decisions regarding AI are aligned with public values.

However, it is important to note that while ‘building public trust’ in AI is a common and valuable objective surfaced in AI-policy debates, this framing also places the burden of responsibility onto the public to ‘be more trusting’, and does not necessarily address the root issue: the trustworthiness of AI systems.

Public participation in UK AI policy must therefore be recognised as a means not only of framing or refining existing policies in ways that will be considered more acceptable to the public, but of defining the fundamental values that underpin those policies. Without this, there is a significant risk that AI will not align with public hopes, needs and concerns, and this will undermine trust and confidence.

Objective 2: Avoid or ameliorate specific risks and harms

Another commonly voiced view from workshop participants was that UK AI policy should be configured explicitly with a view to reducing, mitigating or completely avoiding particular harms and categories of harms associated with AI and its business models. In outlining the particular kinds of harm that AI policy – and particularly regulation – should aim to address, reference was made to the following:

- harms to individuals and marginalised groups

- distributional harms

- harms to free, open societies.

Harms to individuals and marginalised groups

In discussing the potential harms to individuals and marginalised groups associated with AI, participants highlighted the fact that AI systems:

- Can exhibit bias, with the result that individuals may experience AI systems treating them unfairly or drawing unfair inferences about them. Bias can take many forms, and be expressed in several different parts of the AI product development lifecycle – including ‘algorithmic’ bias in which an AI system’s outputs unfairly bias human judgement.44

- Are often more effective or more accurate for some groups than for others.45 This can lead to various kinds of harm, ranging from individuals having false inferences made about their identity or characteristics,46 to individuals being denied or locked out of services due to the failure of AI systems to work for them.47

- Tend to be optimised for particular outcomes.48 There is a tendency on the part of those developing AI systems to forget, or otherwise insufficiently consider, how the outcomes for which systems have been optimised might affect underrepresented groups within society.

- Can cause, and often rely on, the violation of individual privacy rights.49 A lack of privacy can impede an individual’s ability to interact with other people and organisations on equal terms and can cause individuals to change their behaviour.50

Distributional harms

Many of the harms associated with AI systems relate to the capacity of AI and its associated business models to drive and exacerbate economic inequality. Workshop participants listed several specific kinds of distributional harms that AI systems can raise:

- The business models of leading AI companies tend towards monopolisation and concentration of market share. Because machine-learning algorithms base their outcomes on data, well-established AI companies that can collect proprietary datasets tend to have an advantage over newer companies, which can be self-perpetuating. In addition, the large amounts of data required to train some machine-learning algorithms present a high barrier to entry into the market, which can incentivise mergers, acquisitions and partnerships.51 As several recent critiques have pointed out, addressing the harms of AI must look at the wider social, political and economic power underlying the development of these systems.52

- Labour’s declining share of GDP. Related to the tendency of AI-business models towards monopolisation, some economists have suggested that one reason for labour’s declining share of GDP in developed countries is that ‘superstar’ tech firms, which employ relatively few workers but produce significant dividends for investors, have come to represent an increasing share of overall economic activity.53

- Skills-biased technological change and automation. Expert participants also cited the potential for automation and skills-biased technological change driven by AI to lead to greater inequality. While it is contested whether the rise of AI will necessarily lead to greater economic inequality in the long term, economists have argued that the short-term disruption caused by the transition from one ‘techno-economic paradigm’ to a new one will lead to significant inequality unless policy responses are developed to counter these tendencies.54

- AI systems’ capacity to undermine the bargaining power of workers relative to employers, and to exacerbate inequalities between participants in markets. Finally, participants cited the ability of AI systems to undermine worker power and collective-bargaining capacity.55 The use of AI systems to monitor and give feedback on worker performance, and the application of AI to recruitment and pay-setting processes, are two means by which AI could tip the balance of power further towards employers rather than workers.56

Harms to free, open societies

Our expert participants also pointed to the capacity of AI systems to undermine many of the necessary conditions for free, open and democratic societies. Here, participants cited:

- The use of AI-driven systems to distort competitive political processes. AI systems that tailor content to individuals based on their data profile or behaviour (mostly through social media or search platforms) can be used to influence voter behaviour and the direction of democratic debates. This is recognised as problematic because access to these systems is likely to be unevenly distributed across the population and political groups, and because the opacity of content creation and sharing can undermine the democratic ideal of a commonly shared and accessible political discourse – as well as ideals about public debate being subject to public reason.57

- The use of AI-driven systems to undermine the health and competitiveness of markets. In the market sphere, AI-enabled functions such as real-time A/B testing,58 hypernudge,59 and personalised pricing and search60 undermine the ability of consumers to choose freely between competing products in a market, and can significantly skew the balance of power between consumers and large companies.

- Surveillance, privacy and the right to freedom of expression and assembly. The ability of AI-driven systems to monitor and surveil citizens has the potential to create a powerful negative effect on citizens exercising their rights to free expression and discourse – negatively affecting the tenor of democracies.

- The use of AI systems to police and control citizen behaviour. It was noted that many AI systems could be used for more coercive methods of controlling or influencing citizens. Participants cited ‘social-credit’ schemes, such as the one being implemented in China, as an example of the kind of AI system that seeks to manipulate or enforce certain forms of social behaviour without adequate democratic oversight or control.61

Objective 3: Use AI to contribute to the solution of grand societal challenges

Another common view of workshop participants was that a country’s approach to AI regulation could be informed by its stated priorities and objectives for the use of AI in society. One of the common aims of many existing national AI strategies is to articulate how a country can leverage its AI ecosystem to develop solutions to, and means of addressing, substantial, society-wide challenges facing individual nations – and indeed humanity – in coming decades.62

Candidates for these challenges range from decarbonisation and dealing with the effects of climate change, through navigating potential economic displacement brought about by AI systems (and the broader context of the ‘fourth industrial revolution’), to finding ways to manage the difficulties, and make best use, of an ageing population – which is itself one of the UK’s 2017 Industrial Strategy grand challenges. Workshop participants also referred to the potential for AI to be deployed to address the long-term effects of the COVID-19 pandemic, and its potential to ameliorate future public-health crises.

Workshop participants emphasised that the purpose of articulating grand societal challenges that AI can address was to provide an effective way to think about the coordination of different industrial strategy levers, from R&D and regulatory policy, to tax policy and public-sector procurement. This approach would sidestep the risk of a national AI strategy that commands more AI for the sake of AI, or a strategy that places too much hope on the potential benefit of AI to bring positive societal change across all economic and societal sectors.

By articulating grand challenges that AI can address, the UK Government can help establish funding and research priorities for applications of AI that show high reward and proven efficacy. As an example, the French national AI strategy articulates several grand challenges as areas of focus for AI, including addressing the COVID-19 pandemic and fighting climate change.63

A reservation to consider with the societal-challenge approach is that it absolves Government of articulating a sense of direction when it comes to the UK’s relationship to AI. Setting out that we want AI to be used to address particular problems, and how AI is to be supported and guided to develop in a manner conducive to their solution, does not provide any indication of the level of risk we are willing to tolerate, the kinds of applications of AI we may or may not want to encourage or permit (all else remaining equal) or how our industrial and regulatory policy should address difficult, values-based trade-offs.

Objective 4: Develop AI regulation as a sectoral strength

A fourth suggestion put forward by some workshop participants was that the UK should seek to develop AI regulation as a sectoral strength. There was limited agreement on what this goal might entail in practice, and whether it would be feasible.

Despite the UK’s strengths in academic AI research, most participants agreed that, because of existing market dynamics in the tech industry, in which a combination of mostly US and Chinese firms dominate the market, it will be very difficult for the UK market to create the next industry powerhouse.

However, an idea that emerged in the first workshop was that the UK could potentially become world leading in flexible, innovative and ethical approaches to the regulation of AI. The UK Government has expressed explicit ambitions to lead the world in tech and data ethics since at least 2018.64 Workshop participants noted that the UK already has an established reputation for regulatory innovation, and that the country is potentially well placed to develop an approach to the regulation of AI that is compatible with EU standards, but more sophisticated and nuanced.

This idea received additional scrutiny in the second workshop, which saw a more sustained and critical discussion, detailed below, of what cultivating a niche in the regulation of AI might look like in practice, and of the benefits it might bring.

Why is leadership in AI regulation desirable?

Some participants challenged whether leadership in the regulation of AI would actually be desirable, and if so how.

It was noted that, in some cases, a country that drives the regulatory agenda for a particular technology or science will be in a good position to attract greater levels of expertise and investment. For instance, the UK is a world leader in biomedical research and technology, in large part because it has a robust regulatory system that ensures high standards of accuracy, safety and public trust.65 It was cautioned, however, that the UK’s status in the regulation of biomedical technology is the product of the combination of demanding standards, a pragmatic approach to the interpretation of those standards and a rigorously enforced institutional regime.

Some expert panellists suggested that, despite the fact that many regulatory rules have been set at an EU level, the UK has become a leader in the regulation of the life sciences because it combined those high ethical and legal standards with sufficient flexibility to enable genuine innovation – rather than because it relaxed regulatory standards.

The UK can’t compete on regulatory substance, but could compete on some aspects of regulatory procedure and approach

There was a degree of scepticism among expert panellists about whether the model that has enabled the UK to achieve leadership in the regulation of the biomedical-sciences industry would be replicable or would yield the same results in the context of AI regulation. In contrast to the biomedical sciences – where there are strict and clearly defined routes into practice – it is difficult for a regulator to understand and control actors developing and deploying AI systems. The scale and the immediacy of the impacts of AI technologies also tend to be far greater than in biomedical sciences, as is the number of domains in which AI systems could potentially be deployed.

In addition to this, it was noted that the EU also has ambitions to become a global leader in the ethical regulation of AI, as demonstrated by the European Commission’s proposed AI regulations.66 It is therefore unclear what the UK might leverage to position itself as a distinct leader, alongside a larger, geographically adjacent and more influential economic bloc with a good track record of exporting its regulatory standards, which also has ambitions to occupy this space. The EU’s proposal of a comprehensive AI regulation also means that the UK does not have a first-mover advantage when it comes to the regulation of AI.

Many participants in our workshops thought it was unlikely that the UK would be able to compete with the EU (or other large economic blocs) on regulatory substance, or the specific rules and regulations governing AI. Some workshop participants observed that the comparatively small size of the UK market would mean that approval from a UK regulatory body is of less commercial value to an AI company than regulatory approval from the EU.

In terms of regulatory substance, some participants considered whether the UK could make itself attractive as a place to develop AI products by lowering regulatory standards, but other participants noted this would be undesirable and would go against the grain of the UK’s strengths in the flexible enforcement of exacting regulatory standards. Moreover, participants suggested that a ‘race to the bottom’ approach would be counter-productive, given the size of the UK market and the higher regulatory standards that are already developing elsewhere.

Adopting this approach could mean that UK-based AI developers would not be able to sell their services and products in regions with higher regulatory standards.

Despite the limited prospects for the UK leading the world in the development of regulatory standards for AI, some workshop participants argued that it may be possible for the UK to lead on the processes and procedures for regulating AI. The UK does have a good reputation for following regulatory processes and for regulatory process innovation (as exemplified by regulatory sandboxes, a model that has been replicated by many other jurisdictions, including the EU).67

While sandboxes no longer represent a unique selling point for the UK, the UK may be able to make itself more attractive to AI firms by establishing a series of regulatory practices and norms aimed at ensuring that companies have better guidance and support in complying with regulations than they might receive elsewhere. These sorts of processes are particularly appealing to start-ups and small- to medium-sized enterprises (SMEs), which may struggle to navigate and comply with regulatory processes more than their larger counterparts.

A final caveat that several expert participants made was that, although more supportive regulatory processes might be enough to attract start-ups and early-stage AI ventures to the UK, keeping such companies in the UK as they grow will also require the presence of the right supportive financial, legal and research-and-development ecosystem. While this report does not seek to answer the question of what this wider ecosystem should look like, it is clear that a regulatory framework, closely coordinated with policies to nurture and maintain these other enabling conditions, is a necessary condition for the realisation of the Government’s stated ambition of developing a world-leading AI sector.

Chapter 2: Challenges for regulating AI systems

Given AI’s relative novelty, complexity and applicability across both domains and industries, the effective and consistent regulation of AI systems presents multiple challenges. This chapter details some of the most significant of these, as highlighted by our expert workshop participants, and sets out additional analysis and explanation of these issues. The following chapter, ‘Tools, mechanisms and approaches for regulating AI’, details some ways these challenges might be dealt with or overcome. Additional details on some of the different considerations when designing and configuring regulatory systems, which may be a useful companion to these two chapters, can be found in the annex.

The table below maps the regulatory challenges identified with the relevant tools, mechanisms and approaches for overcoming them.

Regulatory challenges and relevant tools, mechanisms and approaches

| Challenges for regulating AI systems | Potentially useful approach, tool or mechanism |
|---|---|
| AI regulation demands bespoke, cross-cutting rules | Regulatory capacity building; Regulatory coordination |
| The incentive structures and power dynamics of AI-business models can run counter to regulatory goals and broader societal values | Regulatory capacity building; Regulatory coordination |
| It can be difficult to regulate AI systems in a manner that is proportionate | Risk-based regulation; Professionalisation |
| Many AI systems are complex and opaque | Regulatory capacity building; Algorithmic impact assessment; Transparency requirements; Inspection powers; External-oversight bodies; International standards; Domestic standards (e.g. via procurement) |
| AI harms can be difficult to separate from the technology itself | Moratoria and bans |

AI regulation demands bespoke, cross-cutting rules

Perhaps one of the biggest challenges presented by AI is that regulating it successfully is likely to require the development of new, domain-neutral laws and regulatory principles. There are several, interconnected reasons for this:

- AI presents novel challenges for existing legal and regulatory principles

- AI presents systemic challenges that require a coordinated response

- horizontal regulation will help avoid boundary disputes and aid industry-specific policy development

- effective, cross-cutting legal and regulatory principles won’t emerge organically

- the challenges of developing bespoke, horizontal rules for AI.

1. AI presents novel challenges for existing legal and regulatory principles

One argument for developing new laws and regulatory principles for AI is that those in existence are not fit for purpose.

AI has two features that present difficulties for contemporary legal principles. The first is its tendency to fully or partially automate moral decision-making processes in ways that can be opaque, difficult to explain and difficult to predict. The second is the capacity of AI systems to develop and operate independently of human control. For these reasons, AI systems can challenge legal notions of agency and causation, as the relationship between the behaviour of the technology and the actions of the user or developer can be unclear, and some AI systems may change independently of human control and intervention.

While principles of agency and causation have been applied without difficulty to legal questions concerning other emerging technologies, it is not clear that they will apply readily to those presented by AI. As barrister Jacob Turner explains, in contrast to AI systems, ‘a bicycle will not re-design itself to become faster. A baseball bat will not independently decide to hit a ball or smash a window.’68

2. AI presents systemic challenges that require a coordinated response

In addition to demanding new approaches to legal principles of agency and causation, the effective regulation and governance of AI systems will require high levels of coordination.

As a powerful technology that can operate at scale and be applied in a wide range of different contexts, AI systems can manifest impacts at the level of the whole economy and the whole of society, rather than being confined to particular domains or sectors. Among policymakers and industry professionals, AI is regularly compared to electricity, with claims that it can transform a wide range of different sectors.69 Whether or not this is hyperbole, the ambition to integrate AI systems across a wide variety of core services and applications raises risks of significant negative outcomes. If governments aspire to use regulation and other policy mechanisms to control the systemic impacts of AI, they will have to coordinate legal and regulatory responses to particular uses of AI. Developing a general set of principles to which all regulators must adhere when dealing with AI is a practical way of doing this.

3. Horizontal regulation will help avoid boundary disputes and aid industry-specific policy development

There are also practical arguments for developing cross-cutting legal and regulatory principles for AI. The gradual shift from narrow to general AI will mean that attempts to regulate the technology exclusively through the rules applied to individual domains and sectors will become increasingly impractical and difficult. A fully vertical or compartmentalised approach to the regulation of AI would be likely to lead to boundary disputes, with persistent questions about whether particular applications or kinds of AI fall under the remit of one regulator or another – or both, or neither.

4. Effective, cross-cutting legal and regulatory principles won’t emerge organically

Clear, cross-cutting legal and regulatory principles for AI will have to be set out in legislation, rather than developed through, and set out in, common law. Perhaps the most important reason for this is that setting out principles in statute makes it possible to protect against the potential harms of AI in advance (ex ante), rather than once things have gone wrong (ex post) – something a common law approach would be incapable of doing. Given the potential gravity and scope of the sorts of harms AI is capable of producing, it would be very risky to wait until harms occur to develop legal and regulatory protections against them.

The Law Society’s evidence submission to the House of Commons Science and Technology Select Committee summarises some of the reasons to favour a statutory approach to regulating and governing AI:

‘One of the disadvantages of leaving it to the Courts to develop solutions through case law is that the common law only develops by applying legal principles after the event when something untoward has already happened. This can be very expensive and stressful for all those affected. Moreover, whether and how the law develops depends on which cases are pursued, whether they are pursued all the way to trial and appeal, and what arguments the parties’ lawyers choose to pursue. The statutory approach ensures that there is a framework in place that everyone can understand.’70

5. The challenges of developing bespoke, horizontal rules for AI

The need to develop new, domain-neutral, AI-specific law raises several difficult questions for policymakers. Who should be responsible for developing these legal and regulatory principles? What values and priorities should these principles reflect? How can we ensure that those developing the principles have a good enough understanding of the ways AI can and might develop and impact on society?

It can be difficult to regulate AI systems in a manner that is proportionate

Given the range of applications and uses of AI, a critical challenge in developing an effective regulatory approach is ensuring that rules and standards are strong enough to capture potential harms, while not being unjustifiably onerous for more innocuous or lower-risk uses of the technology.

The difficulties of developing proportionate regulatory responses to AI are compounded because, as with many emerging technologies, it can be difficult for a regulatory body to understand the potential harms of a particular AI system before that system has become widely deployed or used. However, waiting for harms to become clear and manifest before embarking on regulatory interventions can come with significant risks. One risk is that harms may prove to be grave, and difficult to reverse or compensate for. Another is that, by the time the harms of an AI system have become clear, these systems may be so integrated into economic life that ex post regulation becomes very difficult.71

The incentive structures and power dynamics created by AI-business models can run counter to regulatory goals and broader societal values

Several expert participants also noted that an approach to regulation must acknowledge the current reality around the market and business dynamics for AI systems. As many powerful AI systems rely on access to large datasets, the business models of AI developers can be heavily skewed towards accumulating proprietary data, which can incentivise both extractive data practices and restriction of access to that data.

Many large companies now provide AI ‘as a service’, raising the barrier to entry for new organisations seeking to develop their own independent AI capabilities.72 In the absence of strong countervailing forces, this can create incentive structures for businesses, individuals and the public sector that are misaligned with the ultimate goals of regulators and the values of the public. Expert participants in workshops and follow-up discussions identified two of these possible perverse incentive structures: data dependency and the data subsidy.

Data dependency

The principle of universal public services under democratic control is undermined by the public sector’s incentives to rely on large, private companies for data analytics, or for access to data on service users. These services promise efficiency benefits, but threaten to disempower the public-service provider, with the following results:

- Public-service providers may feel incentivised to collect more data on their service users that they can use to inform AI services.

- By relying on data analytics provided by private companies, public services give up control of important decisions to AI systems over which they have little oversight or power.

- Public-service providers may feel increasingly unable to deliver services effectively without the help of private tech companies.

The data subsidy

The principle of consumer markets that provide choice, value and fair treatment is undermined by the public’s incentives to provide their data for free or cheaper services (the ‘data subsidy’). This can result in phenomena like personalised pricing and search, which undermine consumer bargaining power and de facto choice, and can lead to the exploitation of vulnerable groups.

Many AI systems are complex and opaque

Another significant difficulty in regulating AI concerns the complexity and opacity of many AI systems. In practice, it can be very difficult for a regulator to understand exactly how an AI system operates, whether there is the potential for it to cause harm, and whether it has done so. The difficulty in understanding AI systems poses serious challenges, and in looking for solutions, it is helpful to distinguish between some of the sources of these challenges, which may include:

- regulators’ technical capacity and resources

- the opacity of AI developers

- the opacity of AI systems themselves.

1. Regulators’ technical capacity and resources

Firstly, many expert participants, including some from regulatory agencies, noted that existing regulatory bodies struggle to regulate AI systems due to a lack of capacity and technical expertise.

There are over 90 regulatory agencies in the UK that enforce legislation in sectors like transportation, public utilities, financial services, telecommunications, health and social services and many others. As of 2016, the total annual expenditure on these regulatory agencies was around £4 billion – but not all regulators receive the same amount, with some like the Competition and Markets Authority (CMA) or the Office of Communications (Ofcom) receiving far more than smaller regulators like the Equality and Human Rights Commission (EHRC).73

Some regulators like the CMA and the Information Commissioner’s Office (ICO) already have some in-house employees specialising in data science and AI techniques, to reflect the nature of the work they do and kinds of organisations they regulate. But as AI systems become more widely used in various sectors of the UK economy, it becomes more urgent for regulators of all sizes to have access to the technical expertise required to evaluate and assess these systems, along with the powers necessary to investigate AI systems.

This poses questions about how regulators might best build their capacity to understand and engage with AI systems, or secure access to this expertise consistently.74

2. The opacity of AI developers

Secondly, many of the difficulties regulators have in understanding AI systems result from the fact that much of the information required to do so is proprietary, and that AI developers and tech companies are often unwilling to share information that they see as integral to their business model. Indeed, many prominent developers of AI systems have cited intellectual property and trade secrets as reasons to actively disrupt or prevent attempts to audit or assess their systems.75

While some UK regulators have powers to inspect AI systems developed by the entities they regulate, inspection becomes much more difficult when those systems are provided by third parties. This issue poses questions about the powers regulators might need to require information from AI developers or users, along with standards of openness and transparency on the part of such groups.

3. The opacity of AI systems themselves

Finally, in some cases, there are also deeper issues concerning the ability of anyone, even the developers of an AI system, to understand the basis on which it may make decisions. The biggest of these is the fact that non-symbolic AI systems, which are the kind of AI responsible for some of the most impressive recent advances in the field, tend to operate as ‘black boxes’, whose decision-making sequences are difficult to parse. In some cases, certain types of AI system may not be appropriate for deployment in settings where it is essential to be able to provide a contestable explanation.

These difficulties in understanding AI systems’ decision-making processes become especially problematic in cases where a regulator might be interested in protecting against ‘procedural’ harms, or ‘procedural injustices’. In these cases, a harm is recognised not because of the nature of the outcome, but because of the unfair or flawed means by which that outcome was produced.

While there are strong arguments to take these sorts of harms seriously, they can be very difficult to detect without understanding the means by which decisions have been made and the factors that have been taken into account. For instance, looking at who an automated credit-scoring system considers to be most and least creditworthy may not reveal any obvious unfairness – or at the very least will not provide sufficient evidence of procedural harm, as any discrepancies between different groups could theoretically have a legitimate explanation. It is only when considering how these decisions have been made, and whether the system has taken into account factors that should be irrelevant, that procedural unfairness can be identified or ruled out.

AI harms can be difficult to separate from the technology itself

The complexity of the ways that AI systems can and could be deployed means that there are likely to be some instances when regulators are unsure of their ability to effectively isolate potential harms from potential benefits.

These doubts may be caused by a lack of information or understanding of a particular application of AI. There will inevitably be some instances in which it is very difficult to understand exactly the level of risk posed by a particular form of the technology, and if and how the risks posed by it might be mitigated or controlled, without undermining the benefits of the technology.

In other cases, these doubts may be informed by the nature of the application itself, or by considerations of the likely dynamics affecting its development. There may be instances where, due to the nature of the form or application of AI, it seems difficult to separate the harms it poses from its potential benefits. Regulators might also doubt whether particular high-risk forms or uses of AI can realistically be contained to a small set of heavily controlled uses. One reason for this is that the infrastructure and investment required to make limited deployments of a high-risk application possible create long-term pressure to use the technology more widely: the industry developing and providing the technology is incentivised to advocate for a greater variety of uses. Government and public bodies may also come under pressure to expand the use of the technology to justify the cost of having acquired it.

Chapter 3: Tools, mechanisms and approaches for regulating AI systems

To address some of the challenges outlined in the previous section, our expert workshop participants identified a number of tools, mechanisms and approaches to regulation that could potentially be deployed as part of the Government’s efforts to effectively regulate AI systems at different stages of the AI lifecycle.

Some mechanisms can provide an ex ante pre-assessment of an AI system’s risk or impacts, while others provide ongoing monitoring obligations and ex post assessments of a system’s behaviour. It is important to understand that no single mechanism or approach will be sufficient to regulate AI effectively – but that regulators will need a variety of tools in their toolboxes to draw on as needed.

Many of the mechanisms described below follow the National Audit Office’s Principles of effective regulation,76 which we believe may offer a useful guide for the Government’s forthcoming White Paper.

Regulatory infrastructure – capacity building and coordination

Capacity building and coordination

The 2021 UK AI Strategy acknowledges that regulatory capacity and coordination will be a major area of focus for the next few years. Our expert participants also proposed sustained and significant expansion of the regulatory system’s overall capacity and levels of coordination, to support successful management of AI systems.

If the UK’s regulators are to adjust to the scale and complexity of the challenges presented by AI, and control the practices of large, multinational tech companies effectively, they will need greater levels of expertise, greater resourcing and better systems of coordination.

Expert participants were keen to stress that calls for the expansion of regulatory capacity should not be limited to the cultivation of technical expertise in AI, but should also extend to better institutional understanding of legal principles, human-rights norms and ethics. Improving regulators’ ability to understand, interrogate, predict and navigate the ethical and legal challenges posed by AI systems is just as important as improving their ability to understand and scrutinise the workings of the systems themselves.77

Expert participants also emphasised some of the limitations of AI-ethics exercises and guidelines that are not backed up by hard regulation and the law78 – and cited this as an important reason to embed ethical thinking within regulators specifically.

There are different models for allocating regulatory resources and for improving the system’s overall capacity, flexibility and cohesiveness. Whichever model is adopted, it will need:

- a means to allocate additional resources efficiently, avoiding duplication of effort across regulators, and guarding against the possibility of gaps and weak spots in the regulatory ecosystem

- a way for regulators to coordinate their responses to the applications of AI across their respective domains, and to ensure that their actions are in accordance with any cross-cutting regulatory principles or laws regarding AI

- a way for regulators to share intelligence effectively and conduct horizon-scanning exercises jointly.