COVID-19, the gig economy and the hunger for surveillance

The hidden, and unfairly distributed, costs of convenience on gig economy workers

8 December 2020

COVID-19 has opened up many existing wounds in society: from its divides along the lines of gender, ethnicity, race and disability to its haves and have nots.

From lack of secure and stable employment to access to adequate health protection, the pandemic has brought to light the human cost of the convenience provided by digital platforms through gig workers. The price for a healthful and peaceful existence for some has proved to be high for others in society, as the burden of care falls unfairly and unequivocally on them.

From delivering food to self-isolating individuals, to driving the sick to hospital, gig workers have provided essential services during the pandemic. At the same time, COVID-19 has exposed the vulnerabilities they experience in their day-to-day lives. The situation is grim. There have been reports about gig workers having to continue working despite severe risk of infection, and some even losing their lives as a result of catching the virus on the job.

Platform workers have also suffered significant losses of pay, either because they could not continue their jobs during the lockdowns (e.g. many domestic work, beauty and self-grooming platforms could not operate at the peak of the crisis) or because their rates fell significantly. Workers for platforms like Gojek in Indonesia have suffered a 70 per cent loss of income, and some Uber and Lyft drivers in the US have experienced 65 per cent reductions in their take-home rates.

While the vulnerabilities gig workers have been exposed to during the pandemic might sound similar to those in non-standard work arrangements, they also differ substantially due to the digital technologies used to facilitate, allocate and manage gig work. One of the most important aspects of the infrastructural function of digital technologies is that it gives platforms an unprecedented power to be able to make decisions about their workers at a large scale. Opaque automated decision-making systems also limit workers’ abilities to challenge these decisions.

During the COVID-19 pandemic, the data and algorithms that enable these automated management systems are also being further conflated with surveillance tools.

As platforms have maintained the policy of considering their workers to be independent contractors, and with independents often falling outside the remit of governmental protection schemes, gig workers have had little access to healthcare, sick pay, and other forms of income protection against these risks. The result is that workers are forced to choose between staying safe and continuing to earn an income, in an environment that is increasingly uncertain and risky.

In Fairwork’s report on the gig economy and COVID-19, we analysed the measures platforms were offering to protect their workers during the coronavirus crisis, across 191 platforms in 43 countries. What emerged was that workers’ lives and livelihoods are both at risk.

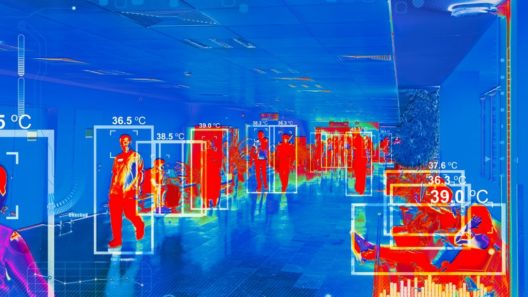

While some platforms have offered protection in the form of cashless delivery, and the provision of masks and sanitisers, these have not always been accessible. Platforms have introduced monitoring mechanisms to ensure health and safety and have established new control mechanisms to secure compliance, from temperature scans to requiring their workers send the platform selfies to prove that they have been wearing face masks.

However, our report found that the majority of these measures were intended to protect consumers, rather than the workers. Some platforms resorted to sending customers photos of workers waiting to pick up orders in restaurants, to prove that the workers were following social distancing requirements.

These invasive data practices contribute to stopping the spread of the virus from workers to users. But they do little to ensure adequate working conditions and safety, especially when platforms do not offer access to health care services and sick pay, if and when workers become ill.

Behind the legitimate goal of expanding data collection to guarantee health and safety, platforms have been able to encroach on workers’ privacy and to increase workers’ surveillance, introducing ever-new forms of control and monitoring, with little discussion about their implications for data ethics, fairness and justice.

Harvested data can technically be reutilised for commercial purposes, providing platforms with financially valuable information about their workforce, with limited to no checks and balances. Or it can be used to punish workers and ultimately block their access to the platforms, if they test positive for the virus or their temperature is too high.

This leads to a situation where platforms exercise the same, if not a higher, degree of control over their workforce than traditional employers, without bearing any of the costs.

Through increased surveillance, platforms are more able than ever to control workers’ performance and improve customers’ experience. However, they have not provided any guarantee to workers that the newly adopted surveillance mechanisms will be discontinued after the crisis, or that they will not be reused for other purposes.

Below we analyse three of these developments in practices by gig economy platforms since the COVID-19 crisis:

- Temperature scans and selfies

- Antibody testing

- Contact tracing

Temperature scans and selfies

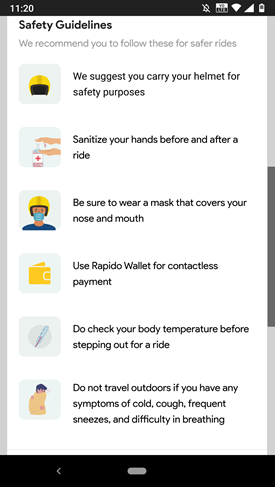

One of the policies rolled out by platforms during COVID-19 to ensure the safety of food deliveries was temperature scanning. Most platforms in India, for instance, are asking their workers to upload a daily temperature reading, accompanied by a selfie to prove that they are wearing a mask. Some also require a photo of their hands to demonstrate that they are wearing gloves. Pictures of restaurant staff wearing masks, sanitising kitchens and washing their hands, as well as their temperature readings, are all made available to customers to assure them that ordering from the platform is safe. Notably, these practices are championed and promoted as ‘best safety measures’.

For instance, Swiggy, a food delivery platform, requires the chef who cooked the meal to share their temperature. Similarly, Dunzo, which offers hyperlocal delivery, asks its workers to upload selfies to demonstrate that they are wearing masks and gloves, and to report their temperature every day before they can begin to work.

In reality, though, workers can upload pictures from their phone’s gallery and simply type their temperatures into the app. This means that platforms cannot verify the uploaded information: while these measures can be seen to improve health and safety for customers, they only create an illusion of safety that allows platforms to continue operating.

Furthermore, most platforms do not provide sufficient financial assistance for workers to self-isolate when they are ill, thus offloading the financial burden of not working onto the individual worker. Our study also shows that accessing financial support from other sources is difficult, if not impossible, for self-isolating workers. When faced with financial difficulties, workers feel the need to continue working, even if they may be unwell. Dunzo, for instance, issued a notification to workers to not ‘step out’ if they showed any COVID-19 symptoms, but ultimately the decision whether to work or not during the pandemic boils down to whether a worker can expect to make ends meet.

Antibody testing

More and more platforms are suggesting workers are tested for antibodies to ensure that the spread of the virus can be contained, and vulnerable people are protected when lockdown measures are eased. Their argument is that, through antibody testing, platforms can determine whether a worker has already contracted the virus, and therefore is no longer at risk of contagion or of infecting others.

Alongside the current medical debate on the reliability of antibody testing, using these tests may have serious implications for many workers. If a platform decides to allow only individuals who have tested positive to work, this can expose those who have not contracted the virus to further financial vulnerabilities for an unforeseeable period of time.

These implications may provide the perverse incentive for some workers to contract the virus in order to return to work, to lie about their test results or to find other ways to game the system. In one brief by the UK Government’s Scientific Advisory Group for Emergencies on antibody testing in the workplace, for example, experts warned that testing could prompt negative behaviours including increased risk-taking and seeking exposure to the virus.

The same report warned that practices of allocating work based on tests may constitute adverse discrimination, although it remains unclear whether the possibility of legal recourse would extend to gig economy workers, as they are classified as independent contractors.

Finally, requiring antibody testing takes for granted the wide availability of this type of testing kit. But what if access is limited or if individuals have to pay a hefty sum to be tested? The risk of reinforcing existing socio-economic inequalities could be avoided only if platforms were to provide the tests in adequate quantities and free of charge, but it is not clear whether they would be able to shoulder the associated financial costs.

The question of whether platforms should be given such a role, which essentially turns them into border agencies for public health, is also one of ethics and fairness, as platforms would assume power over who can work and who cannot. Furthermore, precisely because many platforms do not regard themselves as employers, it remains questionable whether they should be entitled to test people they classify as contractors.

Contact tracing

Contact tracing via mobile apps is another policy suggested to be particularly beneficial for platforms as it may enable them to warn workers and customers if someone in their operations chain contracts COVID-19.

Once again, the Indian gig economy landscape constitutes an interesting case to look at. While several countries are developing and rolling out contact tracing apps, which raise separate questions about data ethics and data justice, in India, contact tracing applications are particularly connected to the gig economy.

The controversial Aarogya Setu application, developed by the Indian Government, for example, uses location and Bluetooth data from smartphones for contact tracing. The application was downloaded more than 100 million times within 40 days of its launch, even though it has raised serious concerns over the potential privacy implications for those registered on the app and the possible misuse of data collected at such a large scale.

The terms and conditions of the application do not seem to conform with Indian Cyberlaw, and MIT’s COVID-19 tracing tracker scored the application a paltry 2 out of 5, failing it on voluntary use, limited scope of data usage and compliance with data minimisation requirements.

The Indian digital liberties organisation, the Internet Freedom Foundation, has warned about the lack of transparency regarding who the data collected through the app is shared with. Indeed, whereas similar applications in other countries specify that health authorities alone have access to the data, multiple authorities seem to be able to access data from Aarogya Setu and, needless to say, locational data could also be used for policing, rather than just tracing the spread of the virus.

The Indian Government initially mandated the use of the application for all private and public employees, but later changed its stance to a simple recommendation on a ‘best effort’ basis. However, platforms in different industries, from ride-hailing to food delivery to personal services to courier (e.g. Zomato, UrbanCompany, Swiggy, Dunzo, Grofers, Ola and Rapido), have already made the use of Aarogya Setu mandatory for their workers.

Platforms such as Amazon, Uber, Flipkart and BigBasket have put out advisories to encourage the use of the app, while some have threatened to block workers from using the platform or refuse to pay them if they fail to download and use it. It is worth noting that platform customers are neither required nor encouraged to use any contact tracing app, which overlooks the possibility that customers can equally spread the virus to the workers.

The Indian Federation of App-based Transport Workers (IFAT) has also raised concerns over the long-term consequences of sharing data collected by Aarogya Setu with platforms, which could use it to identify workers with pre-existing health conditions or those who have engaged in collectivisation activities, if that is something the platform seeks to discourage.

Once again, when workers are asked to choose between privacy and making ends meet, they prioritise the latter, especially while recovering from the economic repercussions of lockdown. As such, it is questionable whether they have any choice in resisting the platforms’ push for technologies that are presented as health and safety measures but have significant implications for heightened surveillance and control.

Conclusion

COVID-19 has revealed that workplace surveillance comes with a central logic: more data means better oversight; be it on the health and safety of the workers or on the efficiency of the platform. Data-hungry operational models of platforms entail that no data is too trivial to collect, and no information is too private to know.

As we have argued, some measures, such as temperature self-reporting, are likely to be merely performative, aimed only at reassuring customers and ensuring they continue using the platforms’ services. Others, like immunity testing, could be counter-productive and encourage risky behaviour. However, platforms appear to prioritise these practices, rather than ensuring the safety and wellbeing of workers through adequate personal protective equipment and benefits, like healthcare. It is also unclear to what extent the new control mechanisms we have reviewed constitute temporary measures or will stay in place after the health emergency is over.

It is particularly important to stress the power imbalance between platforms and workers at this point, and the fact that increased data collection during the COVID-19 pandemic is deepening this imbalance in favour of platform owners, without balancing it out with any duty of care towards the workers. While it might be argued that workers (whether implicitly or explicitly) give their consent to platforms to collect data in return for working opportunities, questions remain with regard to issues of justice, fairness and ethics in how that data is collected, extracted, analysed and used.

The COVID-19 crisis has brought increased attention to the vulnerabilities gig workers face every day, but their data vulnerabilities, which are also becoming more apparent as a result of the pandemic, require similar attention. Gig work should not become the testing bed for controversial technologies, rolled out in the name of the health and safety of the wider population, that could later be normalised for wider society.

Image credit: alphaspirit
